
Advanced

- 1: Projects page

- 2: Organization

- 3: Search

- 4: Shape mode (advanced)

- 5: Single Shape

- 6: CVAT User roles

- 7: Track mode (advanced)

- 8: 3D Object annotation (advanced)

- 9: Attribute annotation mode (advanced)

- 10: Annotation with rectangles

- 11: Annotation with polygons

- 11.1: Manual drawing

- 11.2: Drawing using automatic borders

- 11.3: Edit polygon

- 11.4: Track mode with polygons

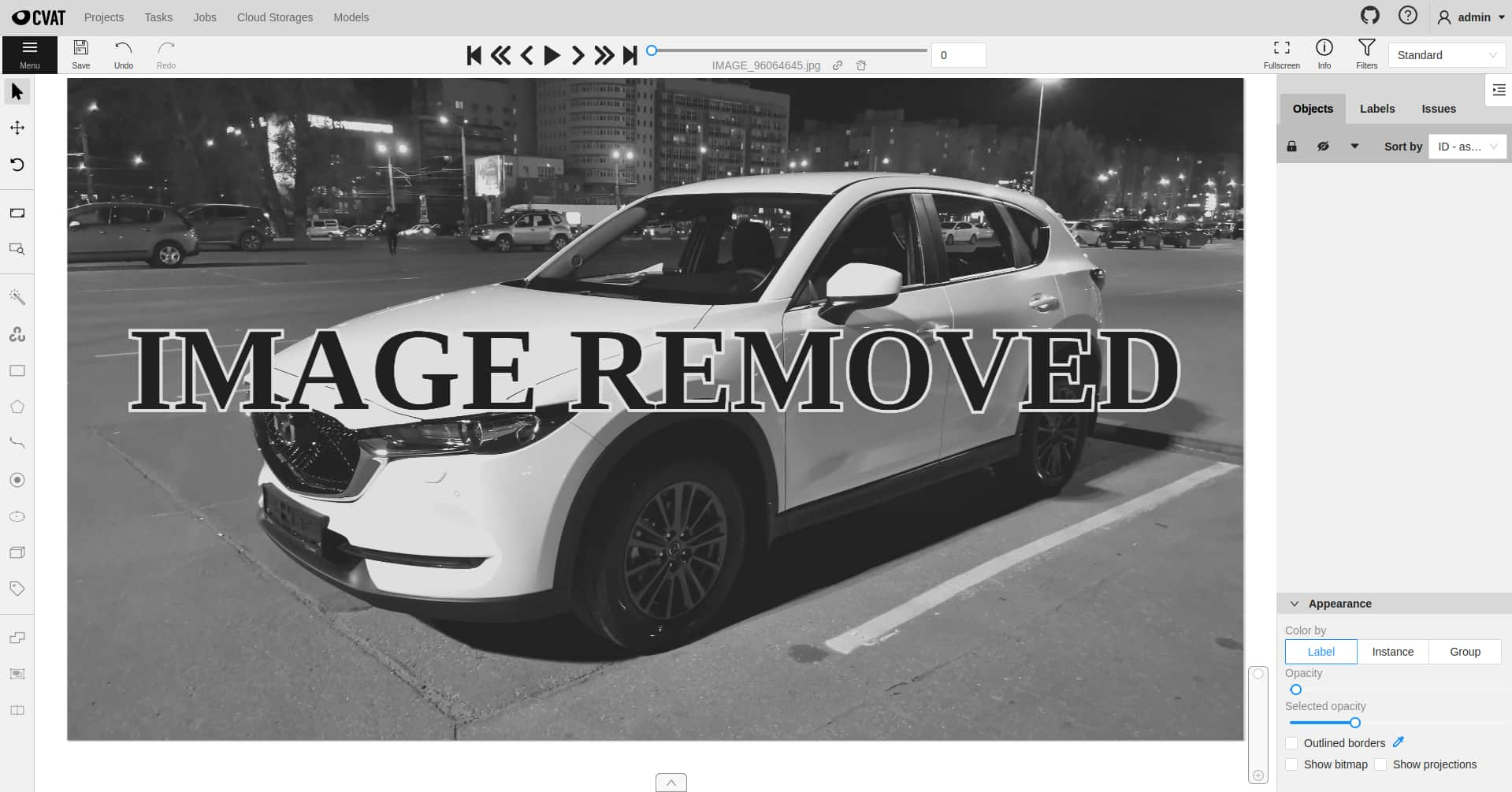

- 11.5: Creating masks

- 12: Annotation with polylines

- 13: Annotation with points

- 14: Annotation with ellipses

- 15: Annotation with cuboids

- 15.1: Creating the cuboid

- 15.2: Editing the cuboid

- 16: Annotation with skeletons

- 17: Annotation with brush tool

- 18: Annotation with tags

- 19: Models

- 20: CVAT Analytics and QA in Cloud

- 20.1: Automated QA, Review & Honeypots

- 20.2: Manual QA and Review

- 20.3: CVAT Team Performance & Monitoring

- 21: OpenCV and AI Tools

- 22: Automatic annotation

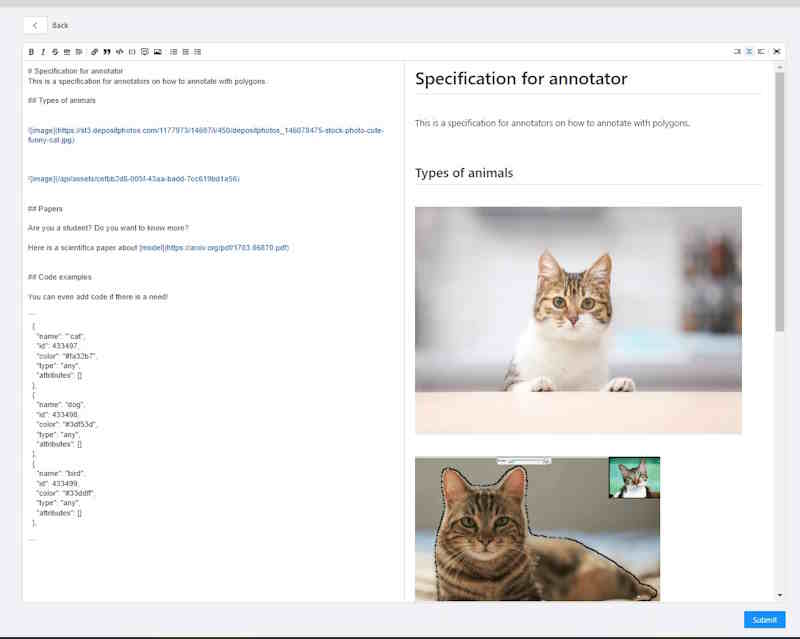

- 23: Specification for annotators

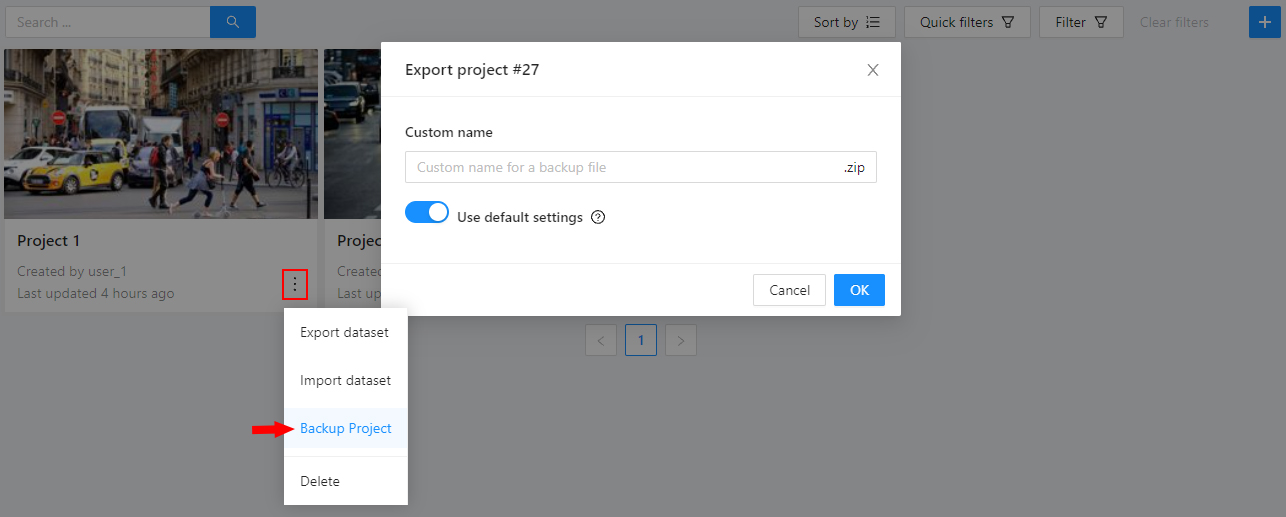

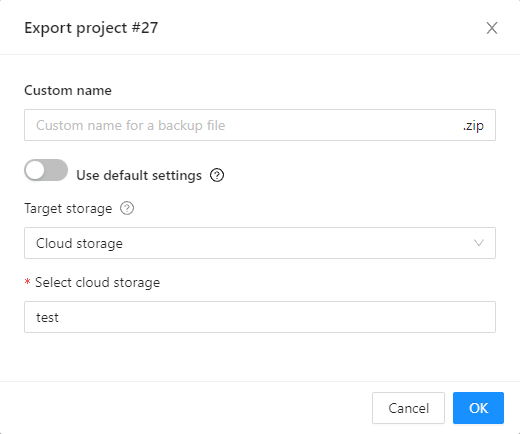

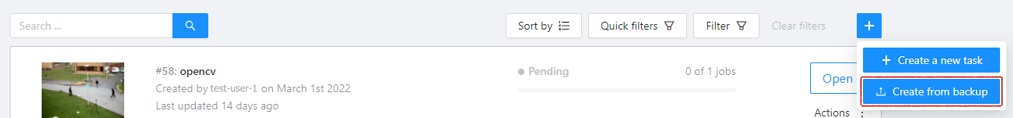

- 24: Backup Task and Project

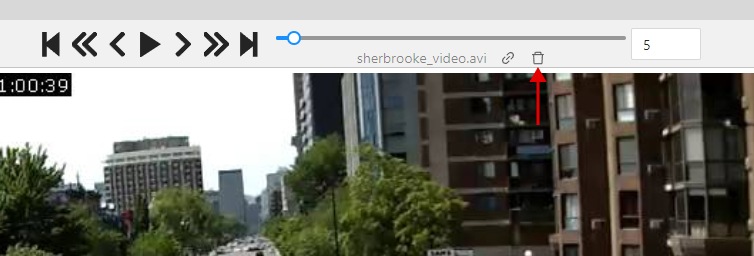

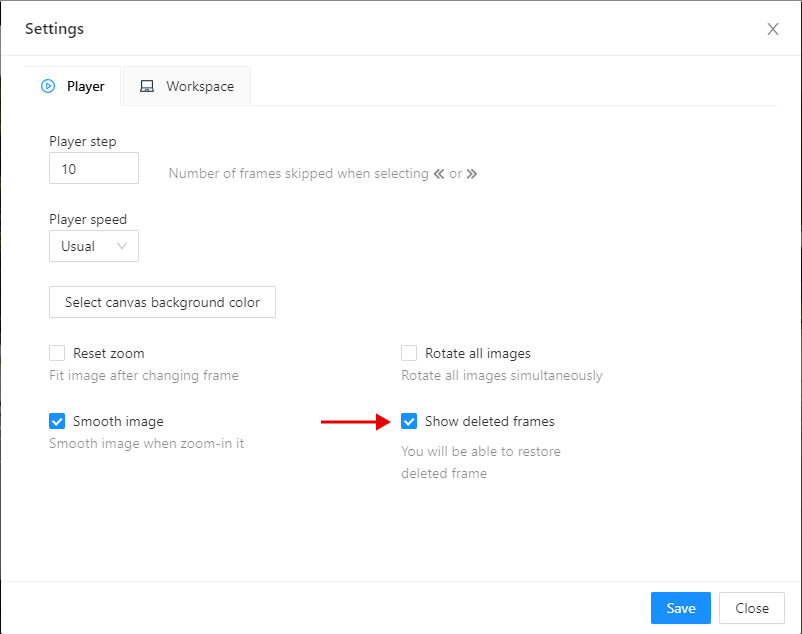

- 25: Frame deleting

- 26: Join and slice tools

- 27: Import datasets and upload annotation

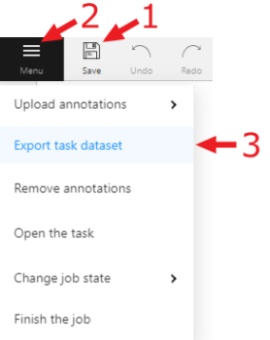

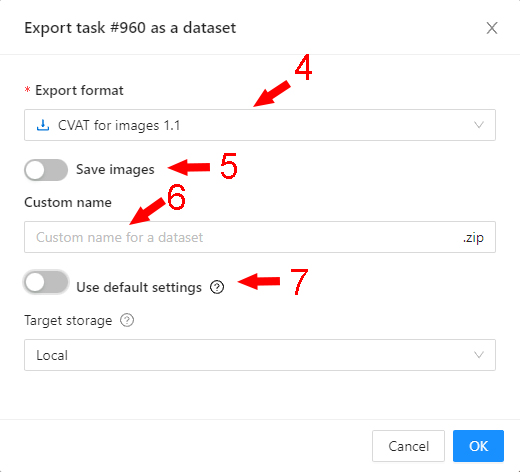

- 28: Export annotations and data from CVAT

- 28.1: CVAT for image

- 28.2: Datumaro

- 28.3: LabelMe

- 28.4: MOT

- 28.5: MOTS

- 28.6: COCO

- 28.7: COCO Keypoints

- 28.8: Pascal VOC

- 28.9: Segmentation Mask

- 28.10: YOLO

- 28.11: YOLOv8

- 28.12: YOLOv8-Classification

- 28.13: ImageNet

- 28.14: Wider Face

- 28.15: CamVid

- 28.16: VGGFace2

- 28.17: Market-1501

- 28.18: ICDAR13/15

- 28.19: Open Images

- 28.20: Cityscapes

- 28.21: KITTI

- 28.22: LFW

- 29: XML annotation format

- 30: Shortcuts

- 31: Filter

- 32: Contextual images

- 33: Shape grouping

- 34: Dataset Manifest

- 35: Data preparation on the fly

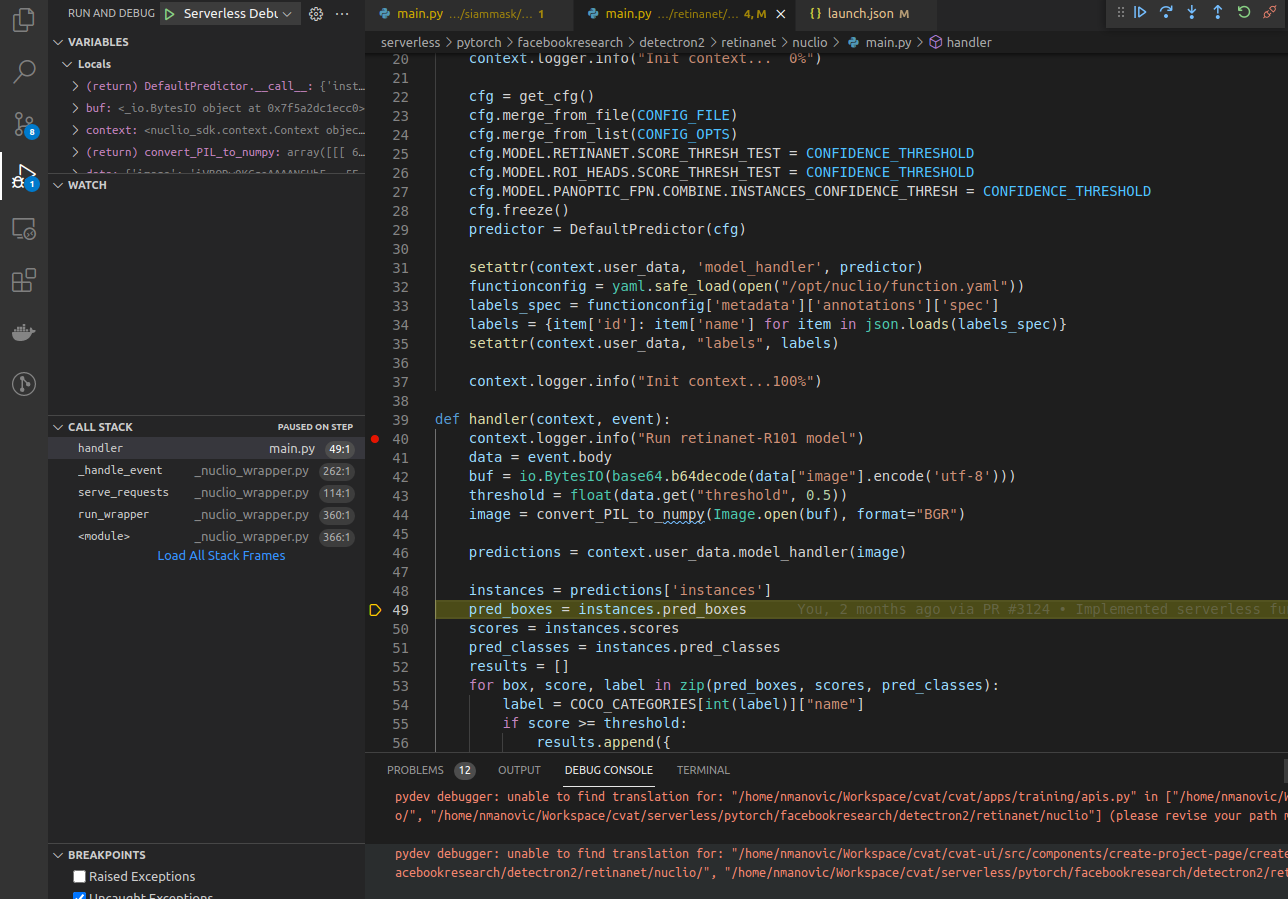

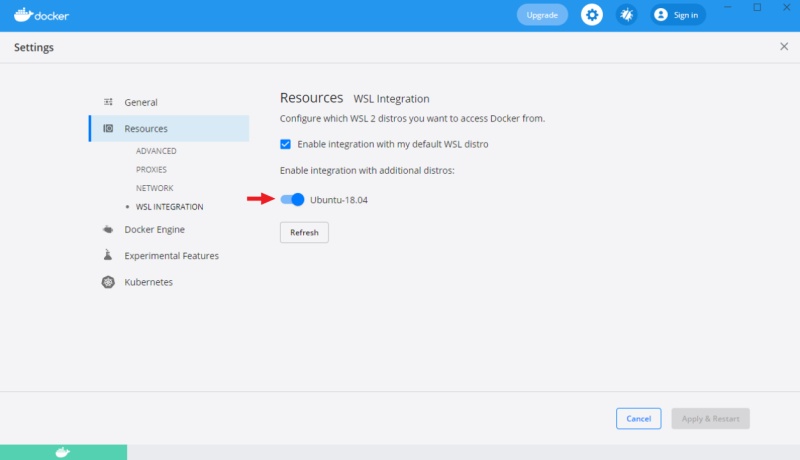

- 36: Serverless tutorial

1 - Projects page

Projects page

On this page you can create a new project, create a project from a backup, and also see the created projects.

In the upper left corner there is a search bar that you can use to find a project by its name, assignee, and so on. In the upper right corner there are the sorting, quick filters, and filter controls.

Filter

Applying a filter disables the quick filter.

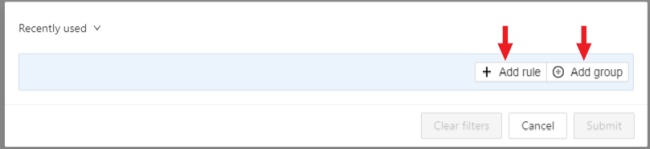

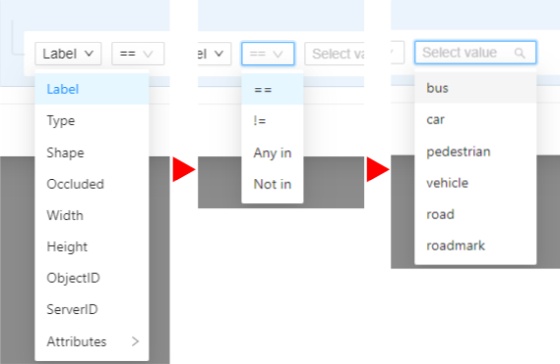

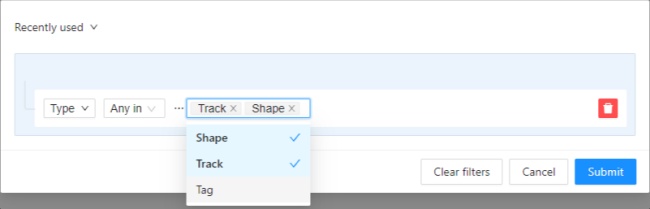

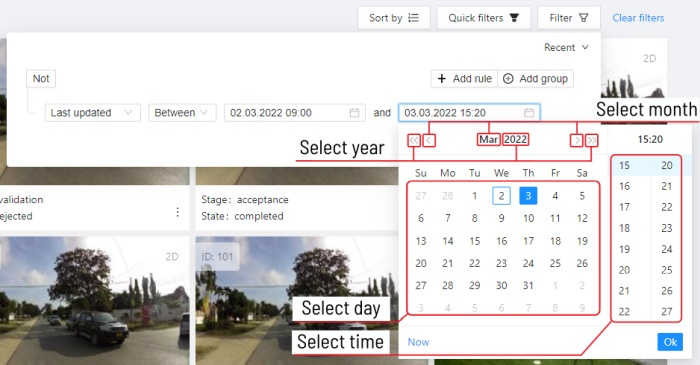

The filter works similarly to the filters for annotation: you can create rules from properties, operators, and values, and group rules into groups. For more details, see the filter section. Learn more about date and time selection.

To clear all filters, press Clear filters.

Supported properties for projects list

| Properties | Supported values | Description |
|---|---|---|
| Assignee | username | Assignee is the user who is working on the project, task or job (specified on the task page). |
| Owner | username | The user who owns the project, task, or job. |
| Last updated | last modified date and time (or value range) | The date can be entered in the dd.MM.yyyy HH:mm format or by selecting the date in the window that appears when you click on the input field. |
| ID | number or range of job ID | |
| Name | name | On the tasks page - name of the task, on the project page - name of the project. |
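The Last updated format from the table (dd.MM.yyyy HH:mm) can be checked outside the UI with a short script. This is only a sketch: the function names are hypothetical, and the `%d.%m.%Y %H:%M` pattern is assumed to be the Python equivalent of the format shown in the table.

```python
from datetime import datetime

# Assumed Python equivalent of the dd.MM.yyyy HH:mm pattern from the table.
LAST_UPDATED_FORMAT = "%d.%m.%Y %H:%M"


def parse_last_updated(text: str) -> datetime:
    """Parse a 'Last updated' filter value such as '05.03.2024 14:30'."""
    return datetime.strptime(text.strip(), LAST_UPDATED_FORMAT)


def format_last_updated(moment: datetime) -> str:
    """Format a datetime the way the filter input field expects it."""
    return moment.strftime(LAST_UPDATED_FORMAT)
```

A value that does not match the pattern raises `ValueError`, which is a convenient way to validate a date before pasting it into the filter.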

Create a project

In CVAT, you can create a project containing tasks of the same type. All tasks related to the project inherit its list of labels.

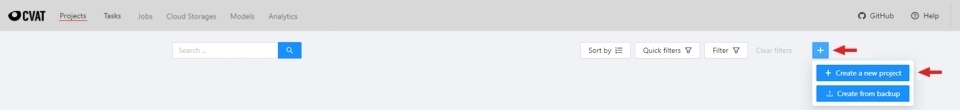

To create a project, go to the projects section by clicking on the Projects item in the top menu.

On the projects page, you can see a list of projects, use the search, or create a new project by clicking the + button and selecting Create New Project.

Note that the project will be created in the organization that you selected at the time of creation. Read more about organizations.

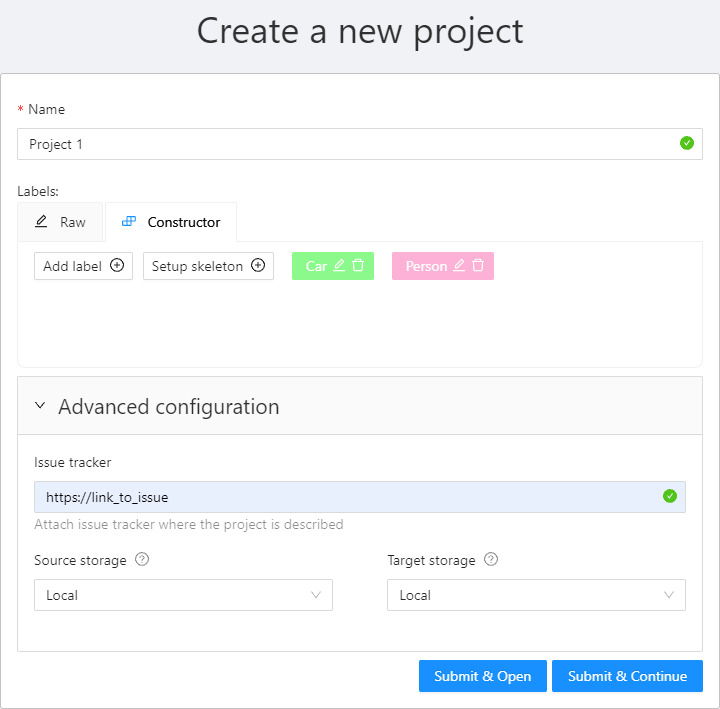

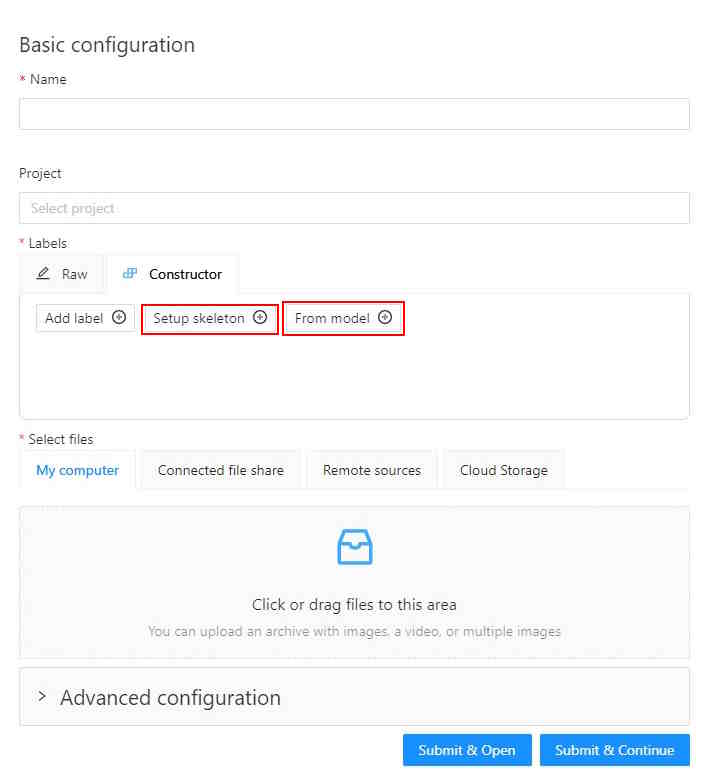

You can change the name of the project, the list of labels (which will be used for tasks created as parts of this project), and a skeleton if necessary. In the advanced configuration you can also specify a link to the issue, and the source and target storages. Learn more about creating a label list, creating the skeleton, and attaching cloud storage.

To save and open the project, click the Submit & Open button. You can also click the Submit & Continue button to create several projects in sequence.

Once created, the project will appear on the projects page. To open a project, just click on it.

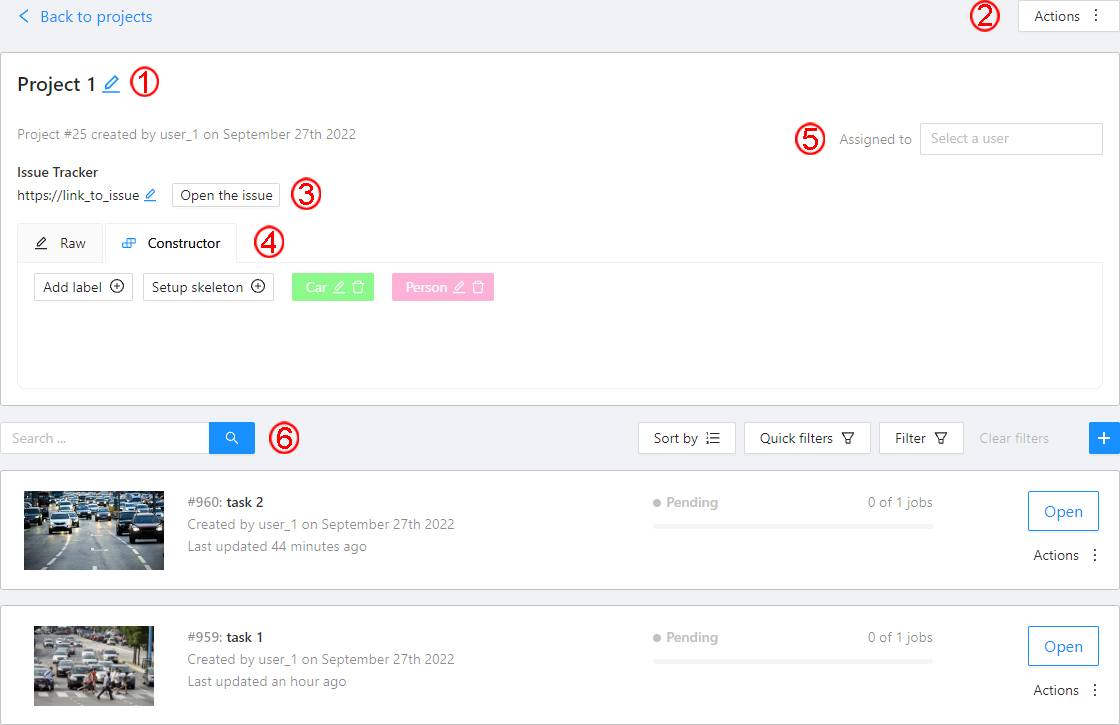

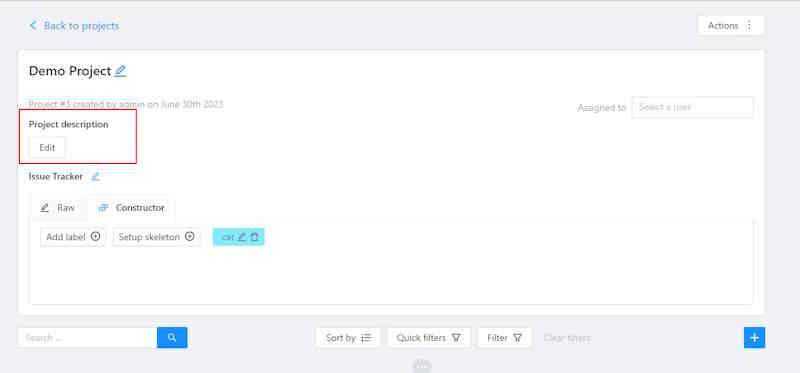

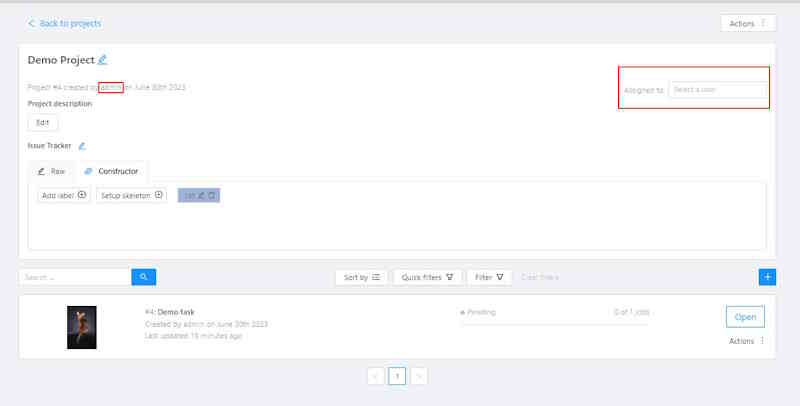

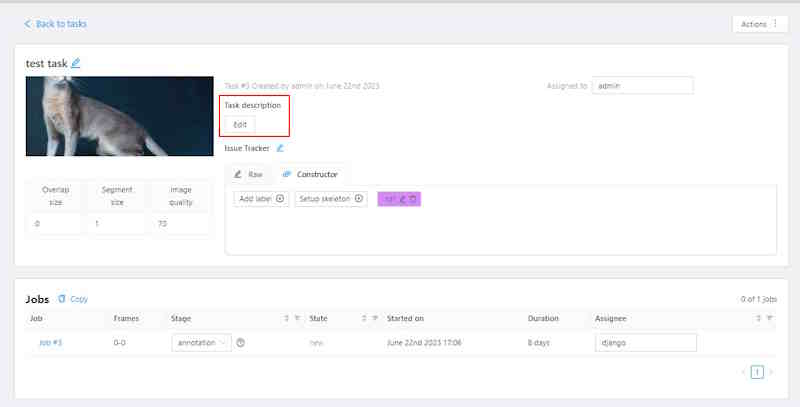

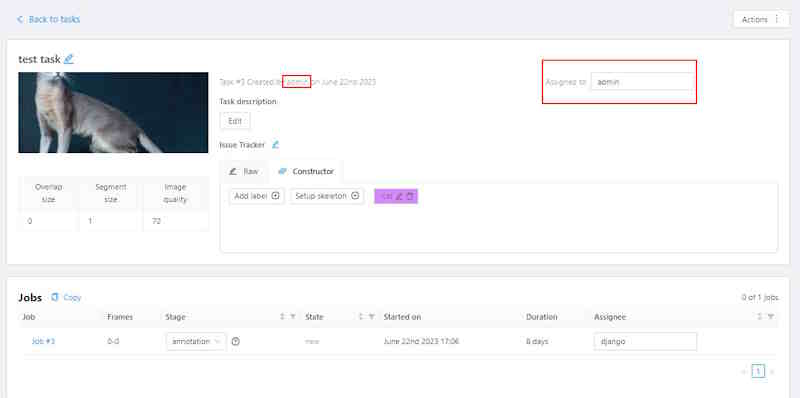

Here you can do the following:

-

Change the project’s title.

-

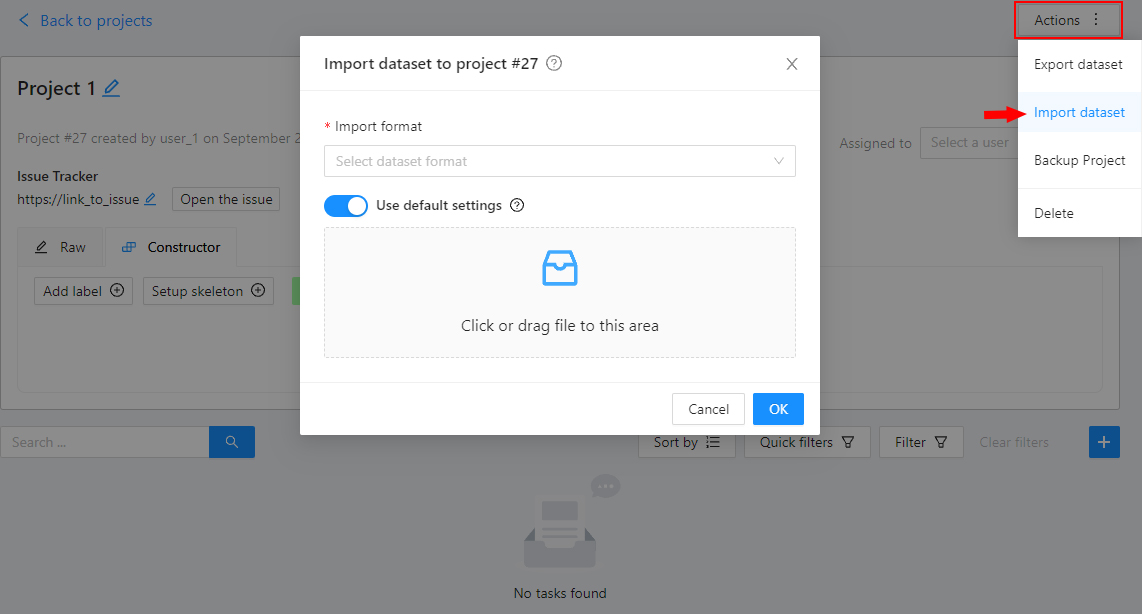

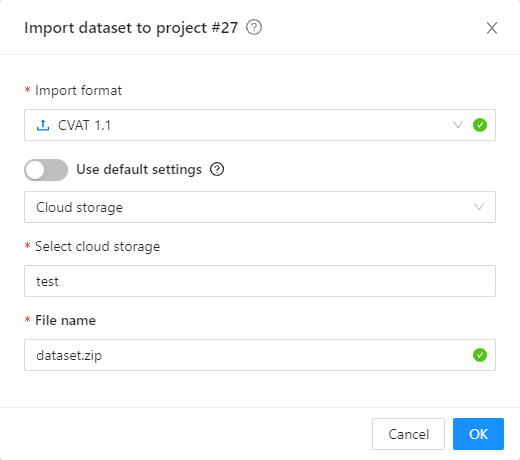

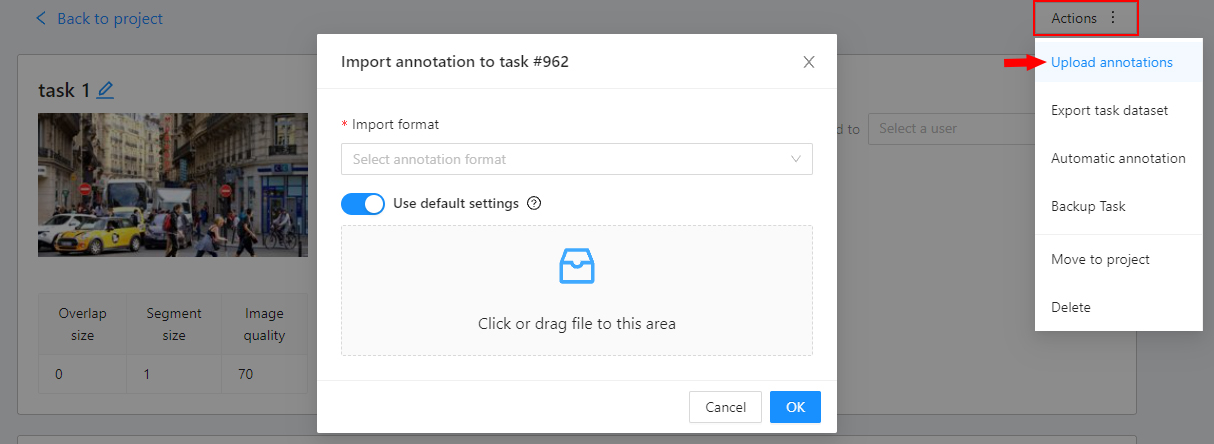

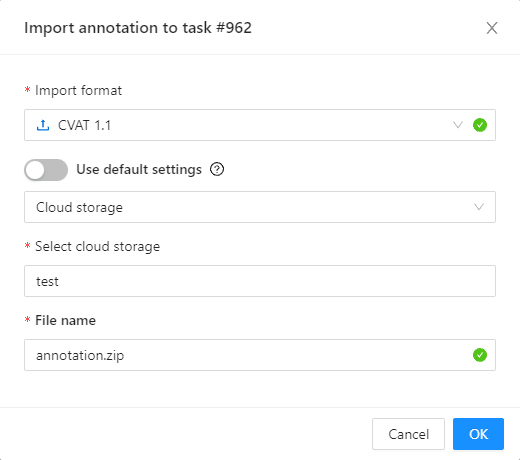

Open the

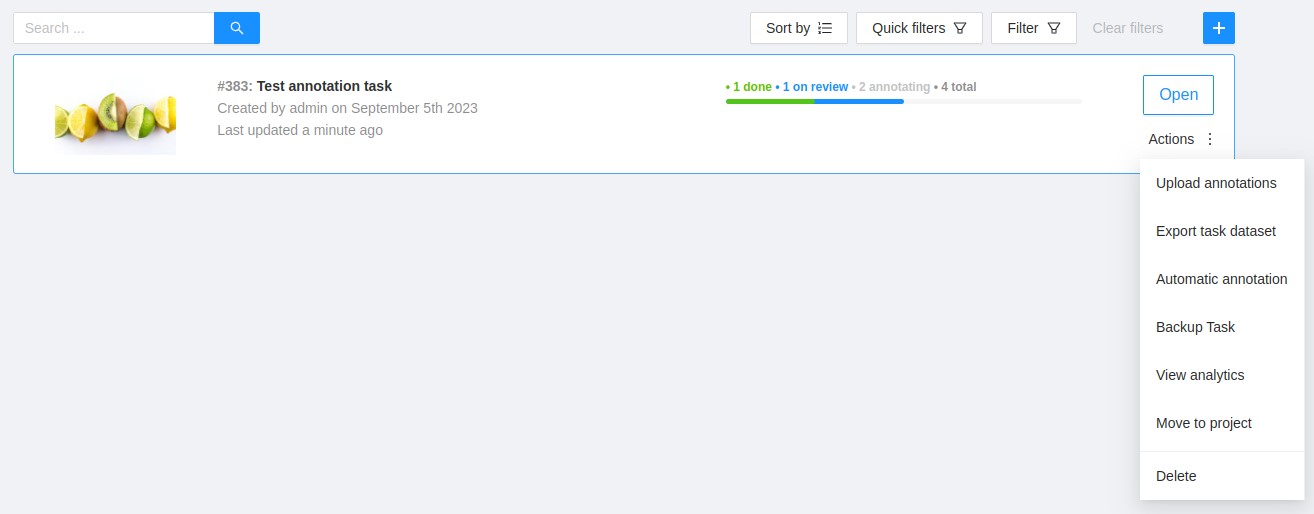

Actionsmenu. Each button is responsible for a specific function in theActionsmenu:Export dataset/Import dataset- download/upload annotations or annotations and images in a specific format. More information is available in the export/import datasets section.Backup project- make a backup of the project read more in the backup section.Delete- remove the project and all related tasks.

-

Change issue tracker or open issue tracker if it is specified.

-

Change labels and skeleton. You can add new labels or add attributes for the existing labels in the

Rawmode or theConstructormode. You can also change the color for different labels. By clickingSetup skeletonyou can create a skeleton for this project. -

Assigned to — is used to assign a project to a person. Start typing an assignee’s name and/or choose the right person out of the dropdown list.

-

Tasks— is a list of all tasks for a particular project, with the ability to search, sort and filter for tasks in the project. Read more about search. Read more about sorting and filter It is possible to choose a subset for tasks in the project. You can use the available options (Train,Test,Validation) or set your own.

2 - Organization

Organization is a feature for teams of several users who work together on projects and share tasks.

Create an Organization, invite your team members, and assign roles to make the team work better on shared tasks.

See:

- Personal workspace

- Create new organization

- Organization page

- Invite members into organization: menu and roles

- Delete organization

Personal workspace

The account’s default state is activated when no Organization is selected.

If you do not select an Organization, the system links all new resources directly to your personal account, which prevents sharing them with others.

When Personal workspace is selected, it will be marked with a tick in the menu.

Create new organization

To create an organization, do the following:

-

Log in to the CVAT.

-

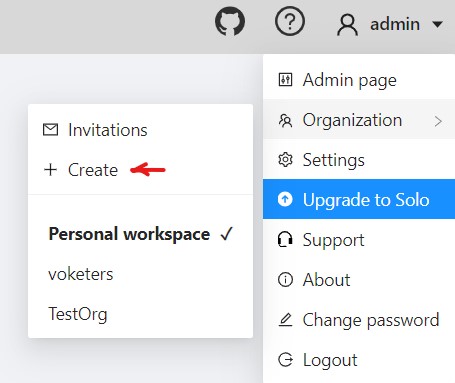

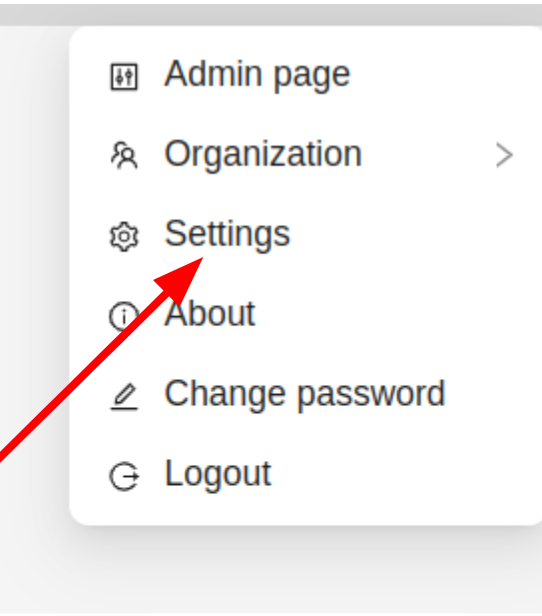

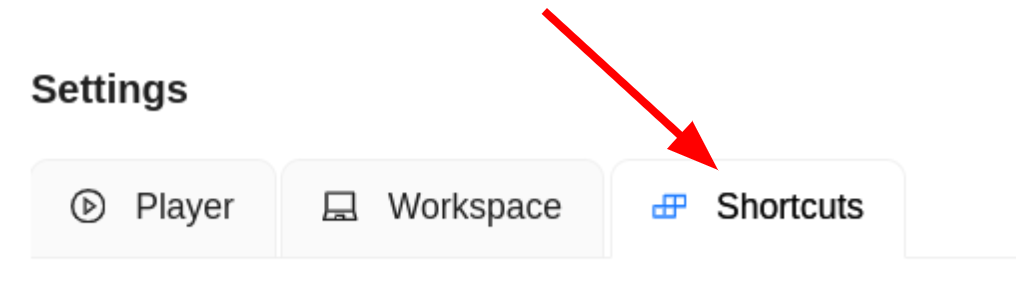

On the top menu, click your Username > Organization > + Create.

-

Fill in the following fields and click Submit.

| Field | Description |

|---|---|

| Short name | A name of the organization that will be displayed in the CVAT menu. |

| Full Name | Optional. Full name of the organization. |

| Description | Optional. Description of organization. |

| Email | Optional. Your email. |

| Phone number | Optional. Your phone number. |

| Location | Optional. Organization address. |

Upon creation, the organization page will open automatically.

For future access to your organization, navigate to Username > Organization

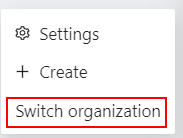

Note, that if you’ve created more than 10 organizations, a Switch organization line will appear in the drop-down menu.

Switching between organizations

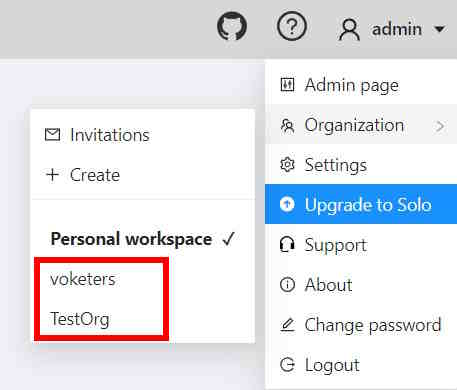

If you have more than one Organization, it is possible to switch between these Organizations at any given time.

Follow these steps:

- In the top menu, select your Username > Organization.

- From the drop-down menu, under the Personal space section, choose the desired Organization.

Note, that if you’ve created more than 10 organizations, a Switch organization line will appear in the drop-down menu.

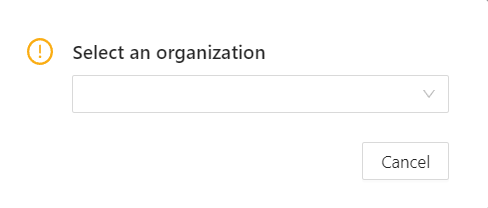

Click on it to see the Select organization dialog, and select organization from drop-down list.

Organization page

The Organization page is where you can edit the Organization information and manage Organization members.

Note that in order to access the organization page, you must first activate the organization (see Switching between organizations). Without activation, the organization page will remain inaccessible.

An organization is considered activated when it’s ticked in the drop-down menu and its name is visible in the top-right corner under the username.

To go to the Organization page, do the following:

- On the top menu, click your Username > Organization.

- In the drop-down menu, select Organization.

- In the drop-down menu, click Settings.

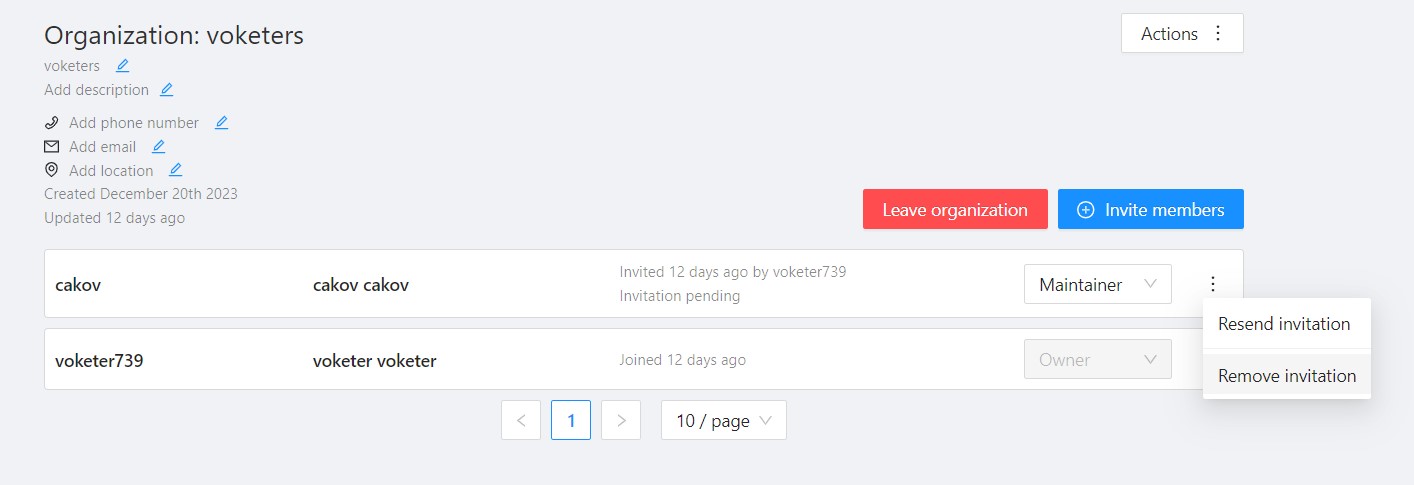

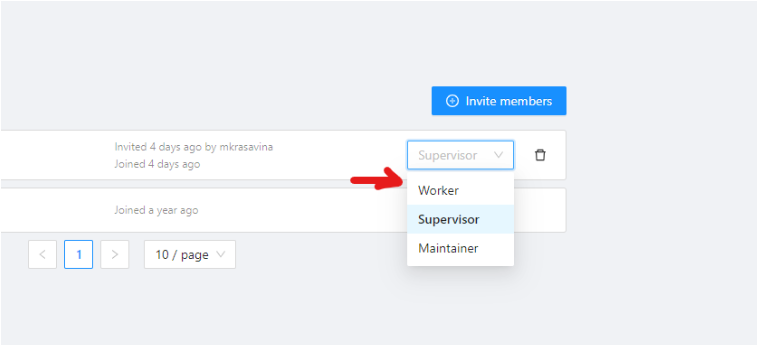

Invite members into organization: menu and roles

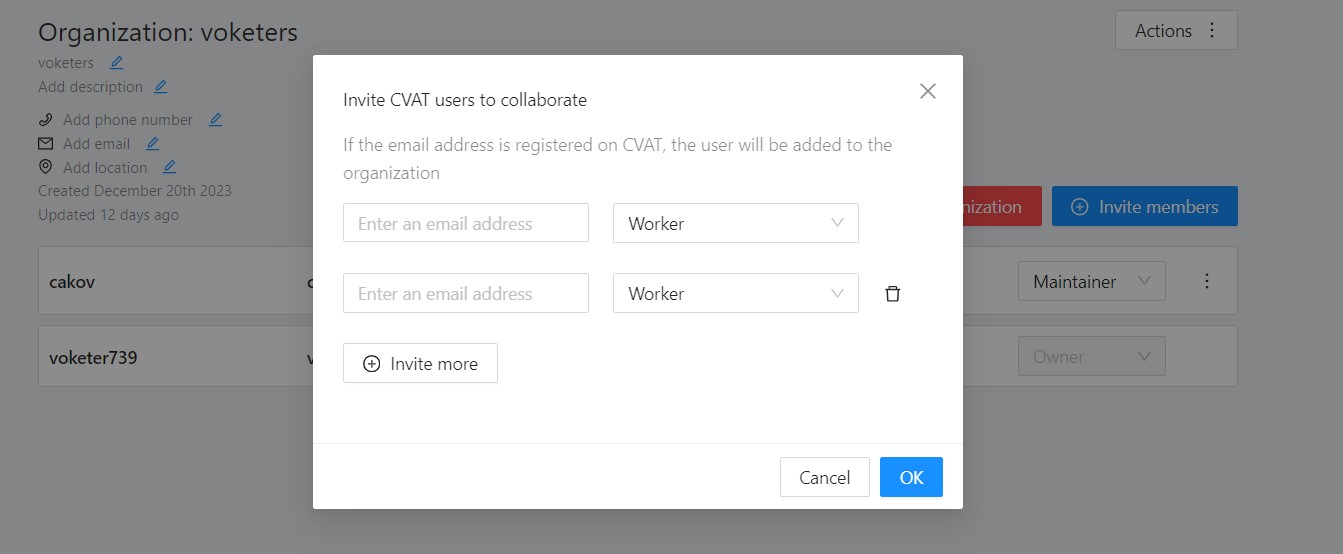

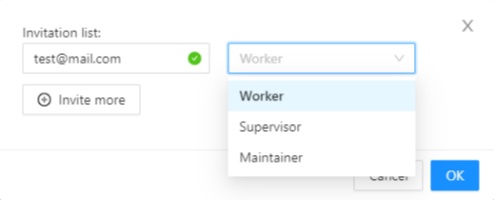

The Invite members form is available from the Organization page.

It has the following fields:

| Field | Description |

|---|---|

| Email | Specifies the email address of the user who is being added to the Organization. |

| Role drop-down list | Defines the role of the user, which sets the level of access within the Organization. |

| Invite more | Button to add another user to the Organization. |

Members of Organization will appear on the Organization page:

The member of the organization can leave the organization by going to Organization page > Leave organization.

Inviting members to Organization

To invite members to the Organization, do the following:

- Go to the Organization page, and click Invite members.

- Fill in the form (see below).

- Click Ok.

- The person being invited will receive an email with the link.

- The person must click the link and:

  - If the invitee does not have a CVAT account, set up an account.
  - If the invitee has a CVAT account, log in to the account.

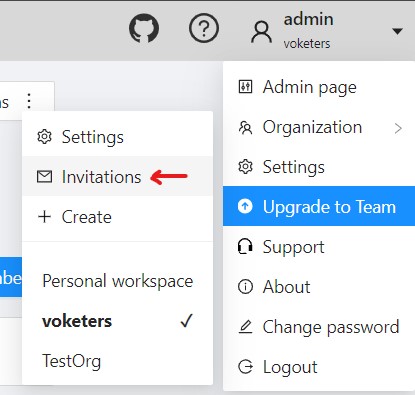

Invitations list

Users can see the list of their active invitations.

To see the list, go to Username > Organization > Invitations.

You will see the page with the list of invitations.

You will also see a pop-up notification with a link to the page with the invitations list.

Resending and removing invitations

The organization owner and maintainers can remove an invitation by clicking the three dots and selecting Remove invitation.

The organization owner can remove members by clicking the Bin icon.

Delete organization

You can remove an organization that you created.

Note: Removing an organization will delete all related resources (annotations, jobs, tasks, projects, cloud storage, and so on).

To remove an organization, do the following:

- Go to the Organization page.

- In the top-right corner click Actions > Remove organization.

- Enter the short name of the organization in the dialog field.

- Click Remove.

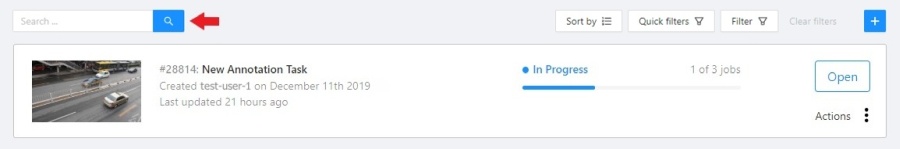

3 - Search

The search can be used in several ways.

- Search within all fields (owner, assignee, task name, task status, task mode). To run it, enter a search string in the search field.

- Search for specific fields:

  - `owner: admin` - all tasks created by a user who has the substring “admin” in their name
  - `assignee: employee` - all tasks assigned to a user who has the substring “employee” in their name
  - `name: training` - all tasks with the substring “training” in their names
  - `mode: annotation` or `mode: interpolation` - all tasks with images or videos
  - `status: annotation` or `status: validation` or `status: completed` - search by status
  - `id: 5` - the task with id = 5

- Multiple filters. Filters can be combined (except for the identifier) using the keyword `AND`:

  - `mode: interpolation AND owner: admin`
  - `mode: annotation and status: annotation`

The search is case insensitive.
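To make the query syntax concrete, here is a minimal sketch of how a `field: value AND field: value` string could be split into separate filters. It is not CVAT's parser; the function name `parse_search` is hypothetical, and the sketch assumes that values themselves do not contain the word "and".

```python
import re
from typing import Dict


def parse_search(query: str) -> Dict[str, str]:
    """Split 'field: value AND field: value' into a {field: value} dict."""
    filters: Dict[str, str] = {}
    # The AND keyword is matched case-insensitively, like the search itself.
    for clause in re.split(r"\s+and\s+", query.strip(), flags=re.IGNORECASE):
        field, separator, value = clause.partition(":")
        if not separator:
            raise ValueError(f"expected 'field: value', got {clause!r}")
        filters[field.strip().lower()] = value.strip()
    return filters
```

For example, `parse_search("mode: interpolation AND owner: admin")` yields one entry for `mode` and one for `owner`.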

4 - Shape mode (advanced)

Basic operations in the mode were described in section shape mode (basics).

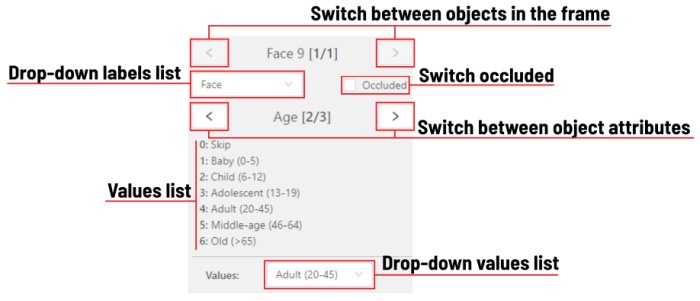

Occluded

Occlusion is an attribute used if an object is occluded by another object or isn’t fully visible on the frame. Use the Q shortcut to set the property quickly.

Example: the three cars on the figure below should be labeled as occluded.

If a frame contains too many objects and it is difficult to annotate them because many shapes are placed mostly in the same place, it makes sense to lock them. Shapes of locked objects are transparent, so it is easy to annotate new objects. Besides, you can’t change previously annotated objects by accident. Shortcut: L.

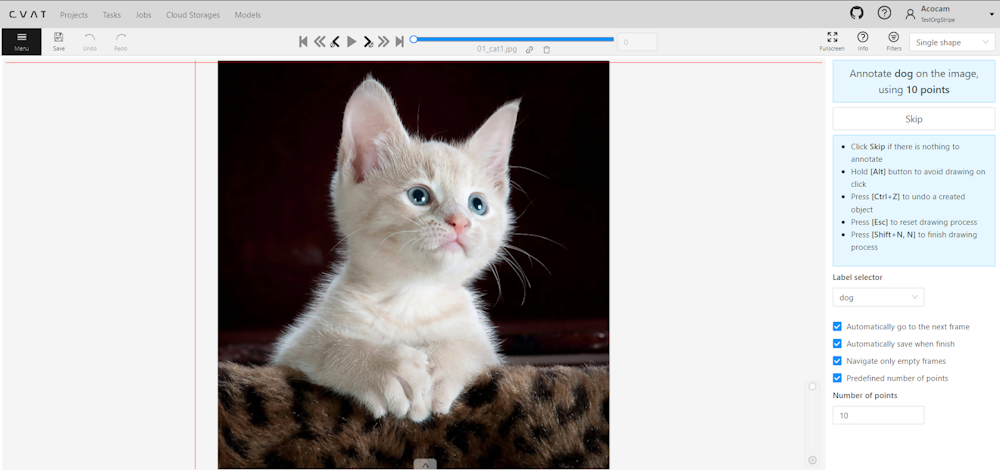

5 - Single Shape

The CVAT Single Shape annotation mode accelerates the annotation process and enhances workflow efficiency for specific scenarios.

By using this mode you can label objects with a chosen annotation shape and label when an image contains only a single object. By eliminating the necessity to select tools from the sidebar and facilitating quicker navigation between images without the reliance on hotkeys, this feature makes the annotation process significantly faster.

See:

- Single Shape mode annotation interface

- Annotating in Single Shape mode

- Query parameters

- Video tutorial

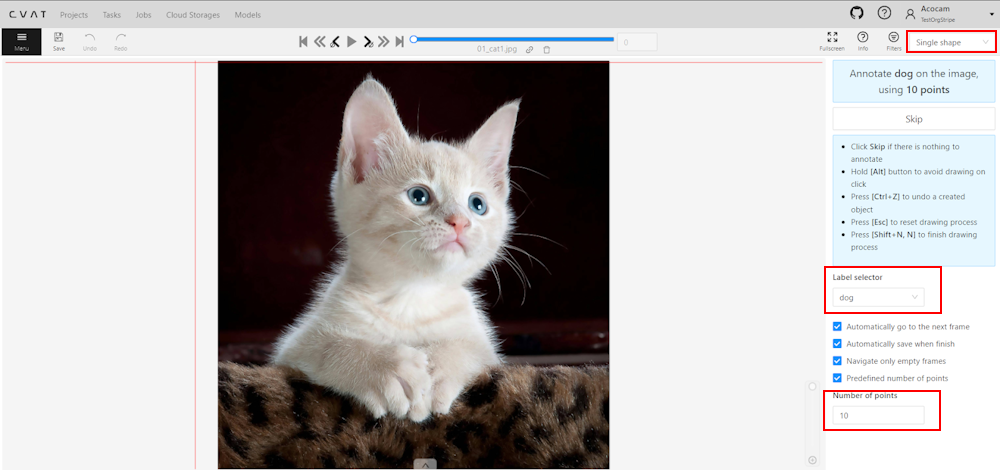

Single Shape mode annotation interface

The set of controls in the Single Shape annotation mode interface may vary depending on the settings.

The images below display the complete interface, featuring all available fields; as mentioned above, certain fields may be absent depending on the scenario.

For instance, when annotating with rectangles, the Number of points field will not appear, and if annotating a single class, the Labels selector will be omitted.

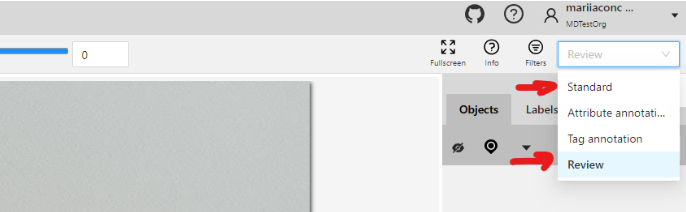

To access Single Shape mode, open the job, navigate to the top right corner, and from the drop-down menu, select Single Shape.

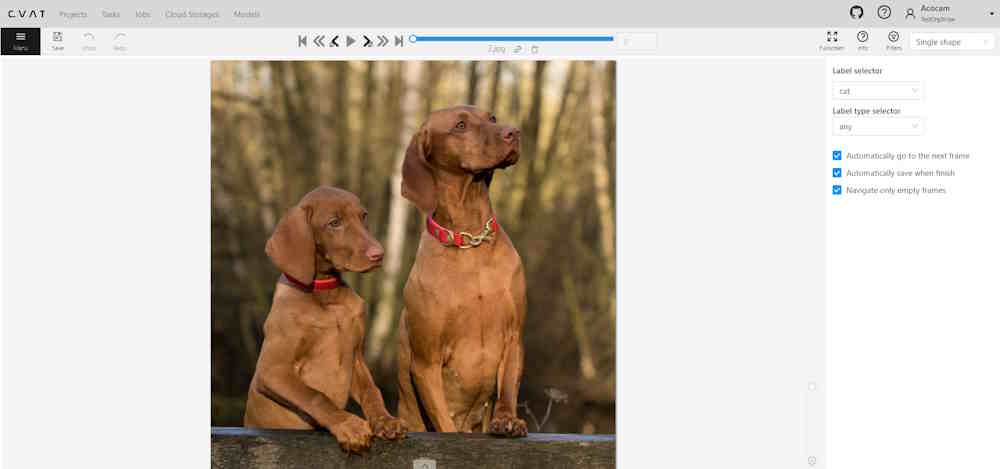

The interface will be different if the shape type was set to Any in the label Constructor:

The Single Shape annotation mode has the following fields:

| Feature | Explanation |

|---|---|

| Prompt for Shape and Label | Displays the selected shape and label for the annotation task, for example: “Annotate cat on the image using rectangle”. |

| Skip Button | Enables moving to the next frame without annotating the current one, particularly useful when the frame does not have anything to be annotated. |

| List of Hints | Offers guidance on using the interface effectively, including: - Click Skip for frames without required annotations. - Hold the Alt button to avoid unintentional drawing (e.g. when you only want to move the image). - Use the Ctrl+Z combination to undo the last action if needed. - Use the Esc button to completely reset the current drawing progress. |

| Label selector | Allows for the selection of different labels (cat, or dog in our example) for annotation within the interface. |

| Label type selector | A drop-down list to select type of the label (rectangle, ellipse, etc). Only visible when the type of the shape is Any. |

| Options to Enable or Disable | Provides configurable options to streamline the annotation process, such as: - Automatically go to the next frame. - Automatically save when finish. - Navigate only empty frames. - Predefined number of points - Specific to polyshape annotations, enabling this option auto-completes a shape once a predefined number of points is reached. Otherwise, pressing N is required to finalize the shape. |

| Number of Points | Applicable for polyshape annotations, indicating the number of points to use for image annotation. |

Annotating in Single Shape mode

To annotate in Single Shape mode, follow these steps:

- Open the job and switch to Single Shape mode.

- Annotate the image based on the selected shape. For more information on shapes, see Annotation Tools.

- (Optional) If the image does not contain any objects to annotate, click Skip at the top of the right panel.

- Submit your work.

Query parameters

You can also append the following query parameters to the job link to initialize the annotation process and automate the workflow:

| Query Parameter | Possible Values | Explanation |
|---|---|---|
| defaultWorkspace | Workspace identifier (e.g., single_shape, tags, review, attributes) | Specifies the workspace to be used initially, streamlining the setup for different annotation tasks. |
| defaultLabel | A string representation of a label (label name) | Sets a default label for the annotation session, facilitating consistency across similar tasks. |
| defaultPointsCount | Integer - number of points for polyshapes | Defines a preset number of points for polyshape annotations, optimizing the annotation process. |

You can combine these parameters to customize the workspace for an annotator, for example:

/tasks/<tid>/jobs/<jid>?defaultWorkspace=single_shape&defaultLabel=dog&defaultPointsCount=10

This link opens the job in the Single Shape workspace with the dog label selected and the number of points set to 10.
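If you generate such links for many jobs, a small helper can assemble them. The sketch below uses only the three query parameters listed in the table; the function name and its arguments are hypothetical, and the result is a path relative to your CVAT host.

```python
from typing import Optional
from urllib.parse import urlencode


def single_shape_link(
    task_id: int,
    job_id: int,
    label: Optional[str] = None,
    points_count: Optional[int] = None,
    workspace: str = "single_shape",
) -> str:
    """Build a job path with the documented default* query parameters."""
    params = {"defaultWorkspace": workspace}
    if label is not None:
        params["defaultLabel"] = label
    if points_count is not None:
        params["defaultPointsCount"] = points_count
    # urlencode escapes characters that are not safe in a query string.
    return f"/tasks/{task_id}/jobs/{job_id}?{urlencode(params)}"


print(single_shape_link(1, 2, label="dog", points_count=10))
```

Optional parameters are left out of the link when they are not given, so the same helper also covers single-class rectangle jobs where only the workspace matters.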

Video tutorial

For a better understanding of how Single Shape mode operates, we recommend watching the following tutorial.

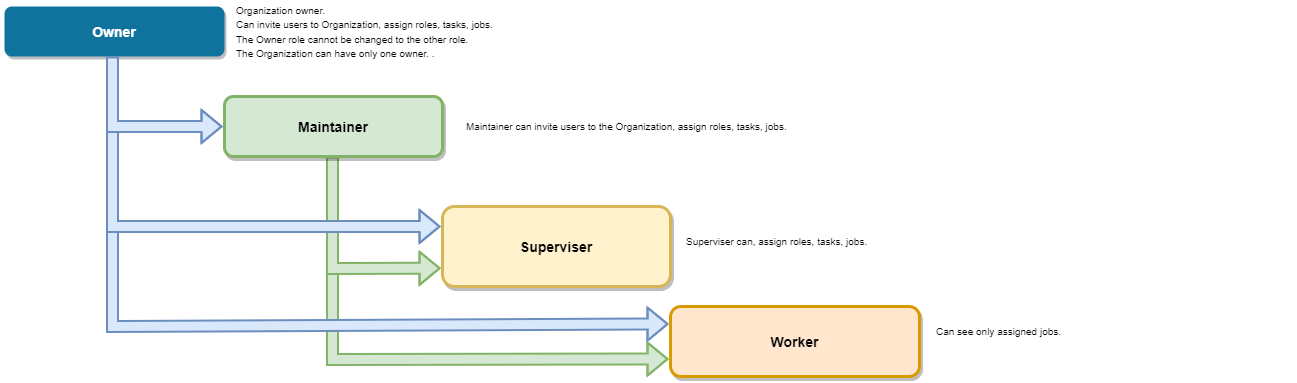

6 - CVAT User roles

CVAT offers two distinct types of roles:

- Global Roles: These are universal roles that apply to the entire system. Anyone who logs into the CVAT.ai platform is automatically assigned a global role. It sets the basic permissions that every registered user has across CVAT.ai, regardless of their specific tasks or responsibilities.

- Organization Roles: These roles determine what a user can do within the Organization, allowing for more tailored access based on the user’s specific duties and responsibilities.

Organization roles complement global roles by determining the visibility of different resources, for example, tasks or jobs.

Limits: Limits are applicable to all users of the CVAT.ai Cloud Platform using the Free plan and can be lifted upon choosing a subscription.

All roles are predefined and cannot be modified through the user interface.

However, within the self-hosted solution, roles can be adjusted using .rego files stored in cvat/apps/*/rules/. Rego is a declarative language employed for defining OPA (Open Policy Agent) policies, and its syntax is detailed in the OPA documentation.

Note: Once you’ve made changes to the .rego files, you must rebuild and restart the Docker Compose for those changes to be applied. In this scenario, be sure to include the docker-compose.dev.yml compose configuration file when executing the Docker Compose command.

See:

- Global roles in CVAT.ai

- Organization roles in CVAT.ai

- Job Stage

Global roles in CVAT.ai

Note: Global roles can be adjusted only on a self-hosted solution.

CVAT has implemented three Global roles, categorized as user Groups. These roles are:

| Role | Description |

|---|---|

| Administrator | An administrator possesses unrestricted access to the CVAT instance and all activities within this instance. The administrator has visibility over all tasks and projects, with the ability to modify or manage each comprehensively. This role is exclusive to self-hosted instances, ensuring comprehensive oversight and control. |

| User (default role) | User is the default role assigned to anyone who registers in CVAT*. Users can view and manage all tasks and projects within their registered accounts, but their activities are subject to specific limitations, see Free plan. * If a user who did not have a CVAT account has been invited to the organization by the organization owner or maintainer, they will be automatically assigned the Organization role and will be subject to the role’s limitations when operating within the Organization. |

| Worker | Workers are limited to specific functionalities and do not have the permissions to create tasks, assign roles, or perform other administrative actions. Their activities are primarily focused on viewing and interacting with the content within the boundaries of their designated roles (validation or annotation of the jobs). |

Organization roles in CVAT.ai

Organization Roles are available only within the CVAT Organization.

Organization roles are assigned when users are invited to the Organization.

There are the following roles available in CVAT:

| Role | Description |

|---|---|

| Owner | The Owner is the person who created the Organization. The Owner role is assigned to the creator of the organization by default. This role has maximum capabilities and cannot be changed or assigned to the other user. The Owner has no extra restrictions in the organization and is only limited by the chosen organization plan (see Free and Team plans). Owners can invite other users to the Organization and assign roles to the invited users so the team can collaborate. |

| Maintainer | The maintainer is the person who can invite users to the organization, create and update tasks and jobs, and see all tasks within the organization. The Maintainer has complete access to Cloud Storages, and the ability to modify members and their roles. |

| Supervisor | The supervisor is a manager role. The Supervisor can create and assign jobs, tasks, and projects to the Organization members. The Supervisor cannot invite new members or modify members’ roles. |

| Worker | Workers’ primary focus is actual annotation and reviews. They are limited to specific functionalities and have access only to the jobs assigned to them. |

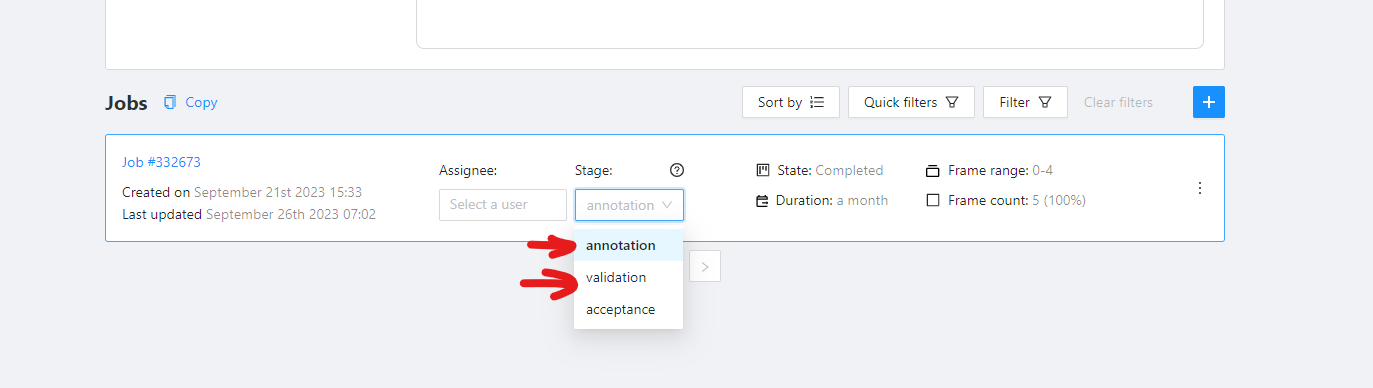

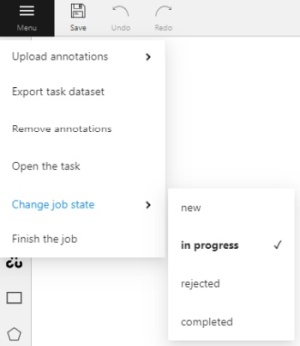

Job Stage

Job Stage can be assigned to any team member.

Stages are not roles.

Jobs can have an assigned user (with any role), and that Assignee performs the Stage-specific work: annotating, validating, or accepting the job.

Job Stage can be:

| Stage | Description |

|---|---|

| Annotation | Provides access to annotation tools. Assignees will be able to see their assigned jobs and annotate them. By default, assignees with the Annotation stage cannot report annotation errors or issues. |

| Validation | Grants access to QA tools. Assignees will see their assigned jobs and can validate them while also reporting issues. By default, assignees with the Validation stage cannot correct errors or annotate datasets. |

| Acceptance | Does not grant any additional access or change the annotator’s interface. It just marks the job as done. |

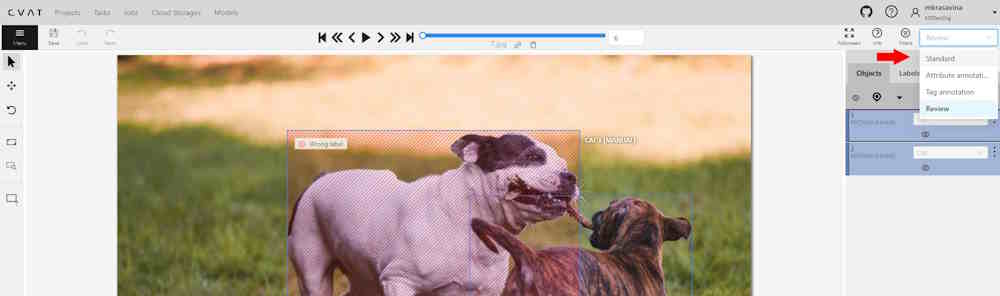

Any Assignee can modify their assigned Stage specific functions via the annotation interface toolbar:

- Standard: switches interface to Annotation mode.

- Review: switches interface to the Validation mode.

7 - Track mode (advanced)

Basic operations in the mode were described in section track mode (basics).

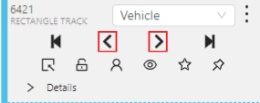

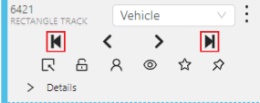

Shapes that were created in the track mode have extra navigation buttons.

- These buttons help to jump to the previous/next keyframe.

- The button helps to jump to the initial frame and to the last keyframe.

You can use the Split function to split one track into two tracks:

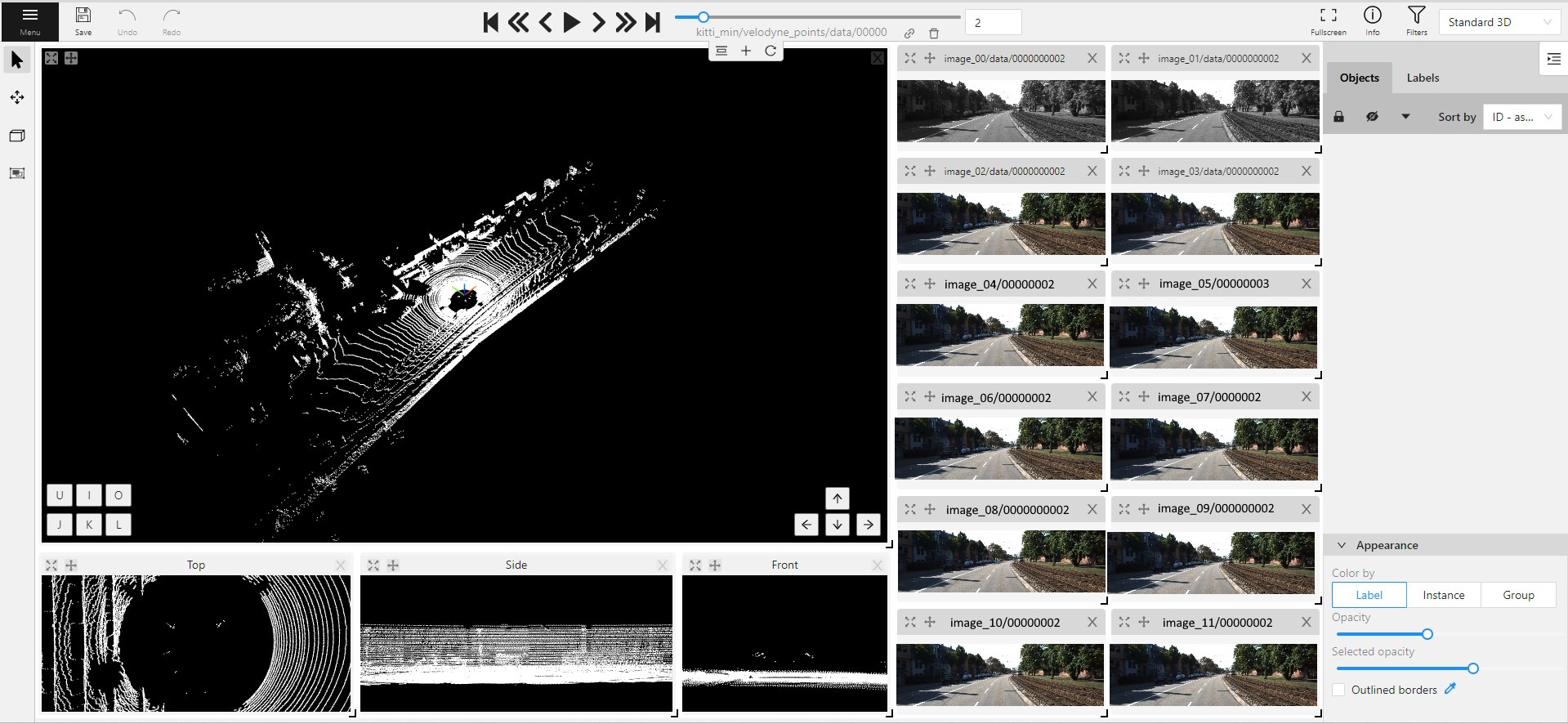

8 - 3D Object annotation (advanced)

As well as 2D-task objects, 3D-task objects support the ability to change appearance, attributes, properties and have an action menu. Read more in objects sidebar section.

Moving an object

If you hover the cursor over a cuboid and press Shift+N, the cuboid will be cut, so you can paste it in another place (double-click to paste the cuboid).

Copying

As in 2D tasks, you can copy and paste objects with Ctrl+C and Ctrl+V, but unlike 2D tasks you have to place the copied object in 3D space (double-click to paste).

Image of the projection window

You can copy or save the projection-window image by left-clicking on it and selecting a “save image as” or “copy image”.

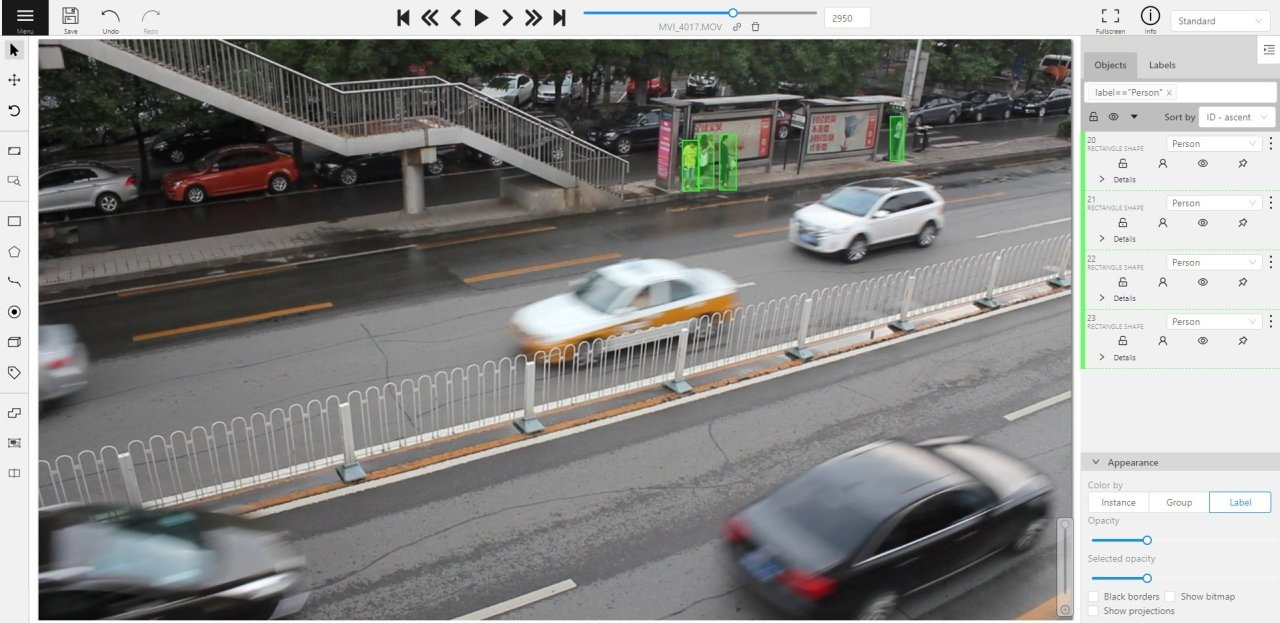

9 - Attribute annotation mode (advanced)

Basic operations in the mode were described in section attribute annotation mode (basics).

It is possible to handle lots of objects on the same frame in this mode.

It is more convenient to annotate objects of the same type. In this case you can apply the appropriate filter. For example, the following filter will hide all objects except person: label=="Person".

To navigate between objects (person in this case), use the buttons for switching between objects in the frame on the special panel:

or shortcuts:

- Tab - go to the next object

- Shift+Tab - go to the previous object.

To change the zoom level, go to settings (press F3), open the workspace tab, and set the value of Attribute annotation mode (AAM) zoom margin in px.

10 - Annotation with rectangles

To learn more about annotation using a rectangle, see the sections:

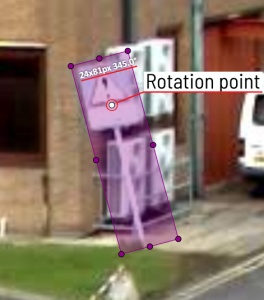

Rotation rectangle

To rotate the rectangle, pull on the rotation point. Rotation is done around the center of the rectangle. To rotate at a fixed angle (a multiple of 15 degrees), hold Shift. During rotation, you can see the angle of rotation.

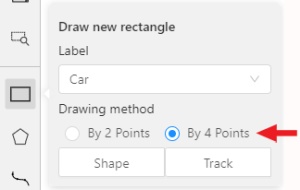
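The geometry behind rotation can be sketched in a few lines. This is an illustration only, not CVAT code: the function names are hypothetical, the rectangle is assumed to be given by its top-left and bottom-right corners, and `snap_angle` mimics the 15-degree steps you get while holding Shift.

```python
import math
from typing import List, Tuple


def snap_angle(angle_deg: float, step: float = 15.0) -> float:
    """Round an angle to the nearest multiple of `step` degrees."""
    return (round(angle_deg / step) * step) % 360.0


def rotate_rectangle(
    xtl: float, ytl: float, xbr: float, ybr: float, angle_deg: float
) -> List[Tuple[float, float]]:
    """Return the four corners after rotating around the rectangle center."""
    cx, cy = (xtl + xbr) / 2, (ytl + ybr) / 2
    angle = math.radians(angle_deg)
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    corners = [(xtl, ytl), (xbr, ytl), (xbr, ybr), (xtl, ybr)]
    return [
        (cx + (x - cx) * cos_a - (y - cy) * sin_a,
         cy + (x - cx) * sin_a + (y - cy) * cos_a)
        for x, y in corners
    ]
```

Because the rotation is applied around the center, the center of the rectangle stays fixed while the corners move.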

Annotation with rectangle by 4 points

It is an efficient method of bounding box annotation, proposed here. Before starting, make sure that the drawing method by 4 points is selected.

Press Shape or Track to enter drawing mode. Click on four extreme points: the top, bottom, left-most, and right-most physical points on the object. Drawing will be completed automatically right after clicking the fourth point.

Press Esc to cancel editing.
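The bounding box follows directly from the four clicked points: the left-most and right-most points give the horizontal extent, and the top and bottom points give the vertical extent. A minimal sketch, with a hypothetical function name and points given as (x, y) pairs in image coordinates:

```python
from typing import Sequence, Tuple


def box_from_extreme_points(
    points: Sequence[Tuple[float, float]]
) -> Tuple[float, float, float, float]:
    """Return (x_min, y_min, x_max, y_max) for four extreme points."""
    if len(points) != 4:
        raise ValueError("exactly four extreme points are expected")
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return min(xs), min(ys), max(xs), max(ys)
```

The order of the clicks does not matter in this sketch, since only the minimum and maximum coordinates are used.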

11 - Annotation with polygons

11.1 - Manual drawing

It is used for semantic / instance segmentation.

Before starting, you need to select Polygon on the controls sidebar and choose the correct Label.

- Click Shape to enter drawing mode. There are two ways to draw a polygon: either create points by clicking or by dragging the mouse on the screen while holding Shift.

| Clicking points | Holding Shift+Dragging |
|---|---|
| | |

- When Shift isn’t pressed, you can zoom in/out (by scrolling the mouse wheel) and move (by clicking the mouse wheel and moving the mouse). You can also delete the previous point by right-clicking on it.

- You can use the Selected opacity slider in the Objects sidebar to change the opacity of the polygon. You can read more in the Objects sidebar section.

- Press N again or click the Done button on the top panel to complete the shape.

- After creating the polygon, you can move the points, or delete them by right-clicking and selecting Delete point in the context menu or by clicking with the Alt key pressed.

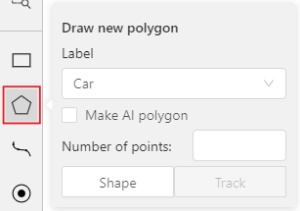

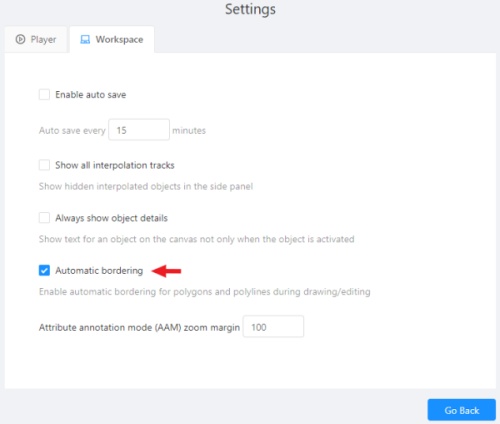

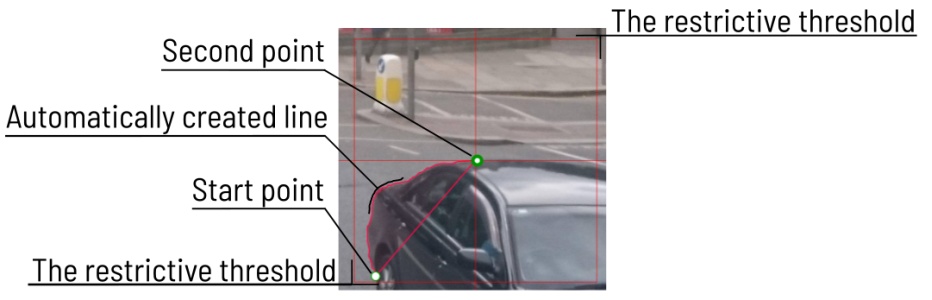

11.2 - Drawing using automatic borders

You can use auto borders when drawing a polygon. Using automatic borders allows you to automatically trace the outline of polygons existing in the annotation.

- To do this, go to settings -> workspace tab and enable Automatic Bordering, or press Ctrl while drawing a polygon.

- Start drawing / editing a polygon.

- Points of other shapes will be highlighted, which means that the polygon can be attached to them.

- Define the part of the polygon path that you want to repeat.

- Click on the first point of the contour part.

- Then click on any point located on part of the path. The selected point will be highlighted in purple.

- Click on the last point and the outline to this point will be built automatically.

Besides, you can set a fixed number of points in the Number of points field, then drawing will be stopped automatically. To enable dragging, right-click inside the polygon and choose Switch pinned property.

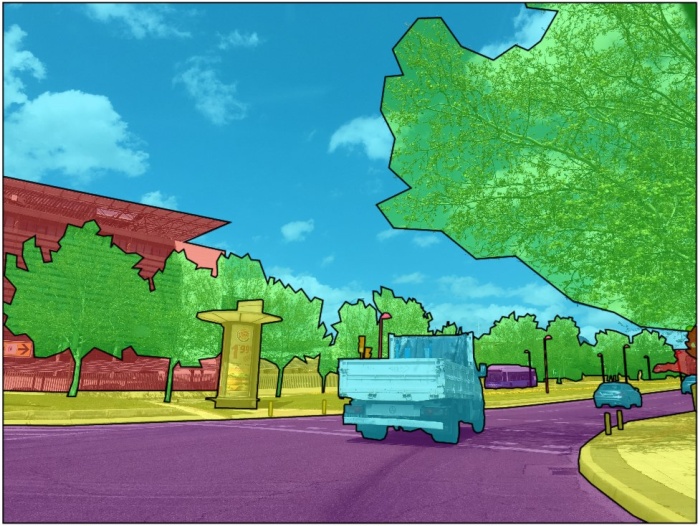

Below you can see results with opacity and black stroke:

If you need to annotate small objects, increase Image Quality to 95 in the Create task dialog for your convenience.

11.3 - Edit polygon

To edit a polygon, click on it while holding Shift; this opens the polygon editor.

- In the editor you can create new points or delete part of a polygon by closing the line on another point.

- When the Intelligent polygon cropping option is activated in the settings, CVAT considers two criteria to decide which part of a polygon should be cut off during automatic editing:

  - The first criterion is the number of cut points.
  - The second criterion is the length of the cut curve.

  If both criteria recommend cutting the same part, the algorithm works automatically; if not, the user has to make the decision. If you want to choose manually which part of a polygon should be cut off, disable Intelligent polygon cropping in the settings. In this case, after closing the polygon, you can select the part of the polygon you want to leave.

- You can press Esc to cancel editing.

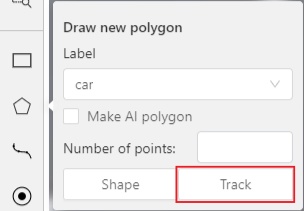
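The two-criteria decision described for Intelligent polygon cropping can be illustrated with a short sketch. It is not CVAT's implementation. The text does not say which part each criterion prefers, so the sketch assumes that each criterion votes for cutting the smaller part (fewer points, shorter curve); the function names and the "forward"/"backward" labels are hypothetical.

```python
import math
from typing import List, Optional, Tuple

Point = Tuple[float, float]


def path_length(points: List[Point]) -> float:
    """Total length of the open path that visits the points in order."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def part_to_cut(polygon: List[Point], i: int, j: int) -> Optional[str]:
    """Pick the part of a closed polygon to cut between vertices i and j.

    Returns "forward" (the run from i to j), "backward" (the run from j
    around the end of the list back to i), or None when the two criteria
    disagree or tie, in which case the user would have to decide.
    """
    i, j = sorted((i, j))
    if i == j:
        raise ValueError("the two attachment vertices must differ")
    parts = {
        "forward": polygon[i:j + 1],
        "backward": polygon[j:] + polygon[:i + 1],
    }

    def smaller(measure) -> Optional[str]:
        forward, backward = measure(parts["forward"]), measure(parts["backward"])
        if forward == backward:
            return None
        return "forward" if forward < backward else "backward"

    by_points = smaller(len)          # criterion 1: number of points (assumed: fewer is cut)
    by_length = smaller(path_length)  # criterion 2: curve length (assumed: shorter is cut)
    return by_points if by_points == by_length else None
```

When one side of the polygon has many closely spaced points and the other side is long but sparse, the criteria disagree and the function returns `None`, which corresponds to the case where the user has to choose.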

11.4 - Track mode with polygons

Polygons in the track mode allow you to mark moving objects more accurately other than using a rectangle (Tracking mode (basic); Tracking mode (advanced)).

-

To create a polygon in the track mode, click the

Trackbutton.

-

Create a polygon the same way as in the case of Annotation with polygons. Press

Nor click theDonebutton on the top panel to complete the polygon. -

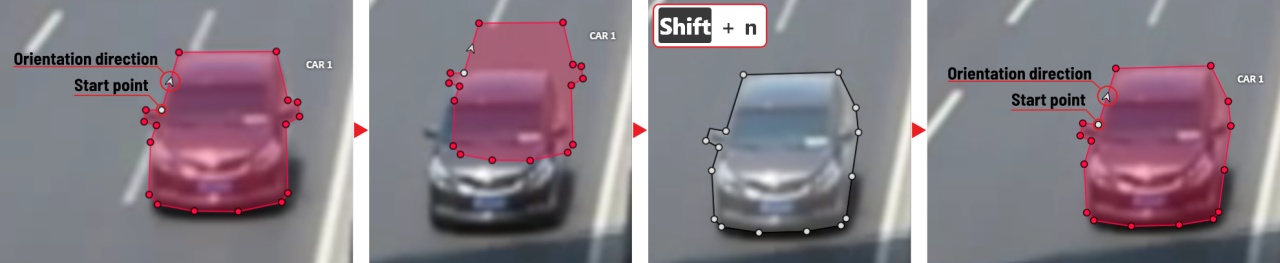

Pay attention to the fact that the created polygon has a starting point and a direction, these elements are important for annotation of the following frames.

-

After going a few frames forward press

Shift+N, the old polygon will disappear and you can create a new polygon. The new starting point should match the starting point of the previously created polygon (in this example, the top of the left mirror). The direction must also match (in this example, clockwise). After creating the polygon, pressNand the intermediate frames will be interpolated automatically.

-

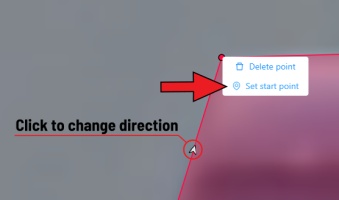

If you need to change the starting point, right-click on the desired point and select

Set starting point. To change the direction, right-click on the desired point and select switch orientation.

There is no need to redraw the polygon every time using Shift+N,

instead you can simply move the points or edit a part of the polygon by pressing Shift+Click.

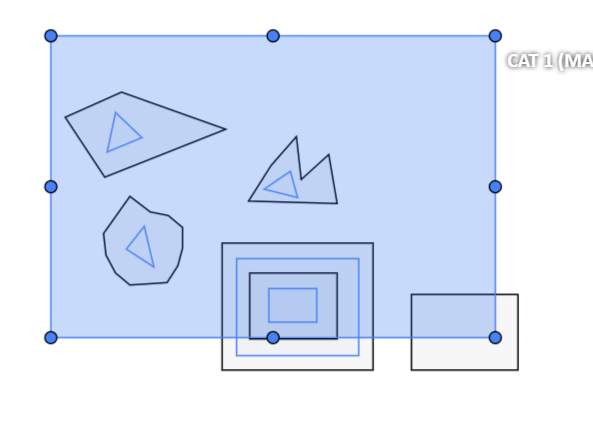
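Why the starting point and direction must match becomes clear from a simplified model of interpolation between two keyframes. The sketch below pairs points by index and blends them linearly; it assumes both polygons have the same number of points, which is a simplification and not a description of CVAT's actual algorithm. The function name is hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def interpolate_polygon(start: List[Point], end: List[Point], t: float) -> List[Point]:
    """Blend two keyframe polygons point by point, with t in [0, 1]."""
    if len(start) != len(end):
        raise ValueError("this sketch assumes equal point counts")
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be between 0 and 1")
    return [
        (x0 + (x1 - x0) * t, y0 + (y1 - y0) * t)
        for (x0, y0), (x1, y1) in zip(start, end)
    ]
```

Since point k of the first keyframe is matched with point k of the second, a different starting point or an opposite direction would pair unrelated points and twist the intermediate shapes.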

11.5 - Creating masks

Cutting holes in polygons

Currently, CVAT does not support cutting transparent holes in polygons. However, it is possible to generate holes in exported instance and class masks. To do this, one needs to define a background class in the task and draw holes with it as additional shapes above the shapes needed to have holes:

The editor window:

Remember to use z-axis ordering for shapes by [-] and [+, =] keys.

Exported masks:

Notice that it is currently impossible to have a single instance number for internal shapes (they will be merged into the largest one and then covered by “holes”).
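The hole technique relies on drawing order: shapes with a higher z-order are painted later and overwrite what is underneath. The following sketch shows the idea on a tiny class mask. It uses axis-aligned boxes instead of polygons to stay short, and the dictionary keys (`box`, `class_id`, `z_order`) are hypothetical names, not a CVAT data format.

```python
from typing import Dict, List


def render_class_mask(
    width: int, height: int, shapes: List[Dict], background: int = 0
) -> List[List[int]]:
    """Paint boxes into a class mask, lowest z-order first."""
    mask = [[background] * width for _ in range(height)]
    for shape in sorted(shapes, key=lambda s: s["z_order"]):
        x0, y0, x1, y1 = shape["box"]  # x1 and y1 are exclusive
        for y in range(y0, y1):
            for x in range(x0, x1):
                mask[y][x] = shape["class_id"]
    return mask


shapes = [
    {"box": (1, 1, 5, 5), "class_id": 1, "z_order": 0},  # the object
    {"box": (2, 2, 4, 4), "class_id": 0, "z_order": 1},  # background "hole" on top
]
mask = render_class_mask(6, 6, shapes)
```

In the resulting mask the inner 2x2 area is background again, while the ring around it keeps the object class.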

Creating masks

There are several formats in CVAT that can be used to export masks:

- Segmentation Mask (PASCAL VOC masks)

- CamVid

- MOTS

- ICDAR

- COCO (RLE-encoded instance masks, guide)

- Datumaro

An example of exported masks (in the Segmentation Mask format):

Important notices:

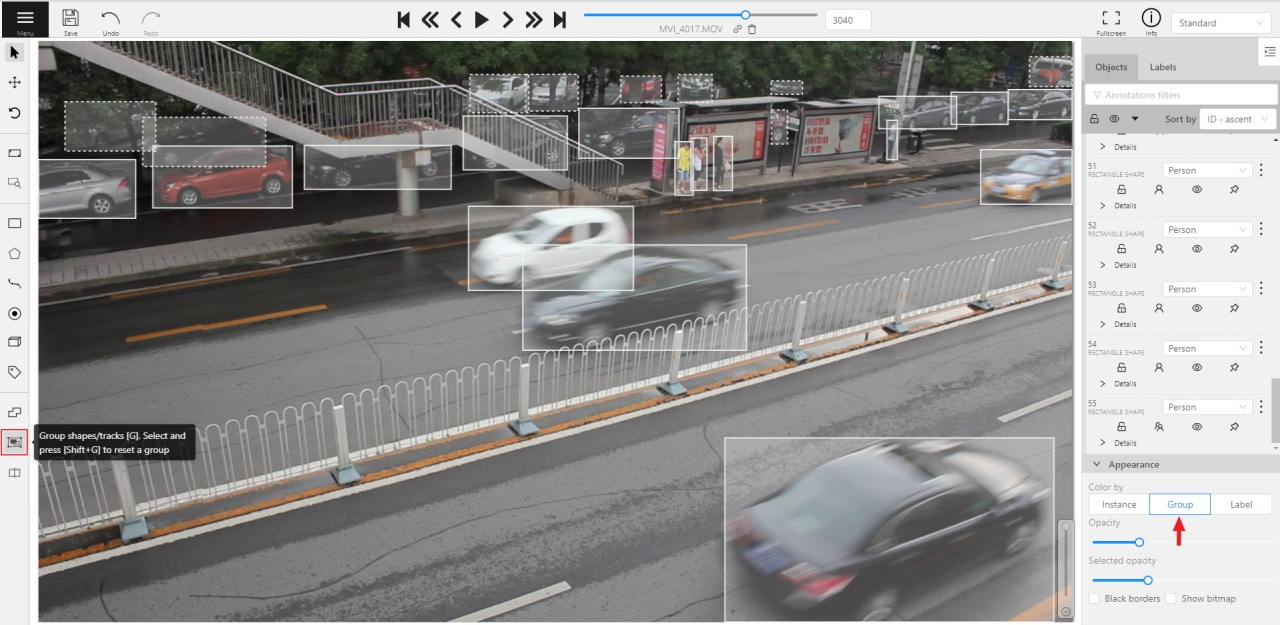

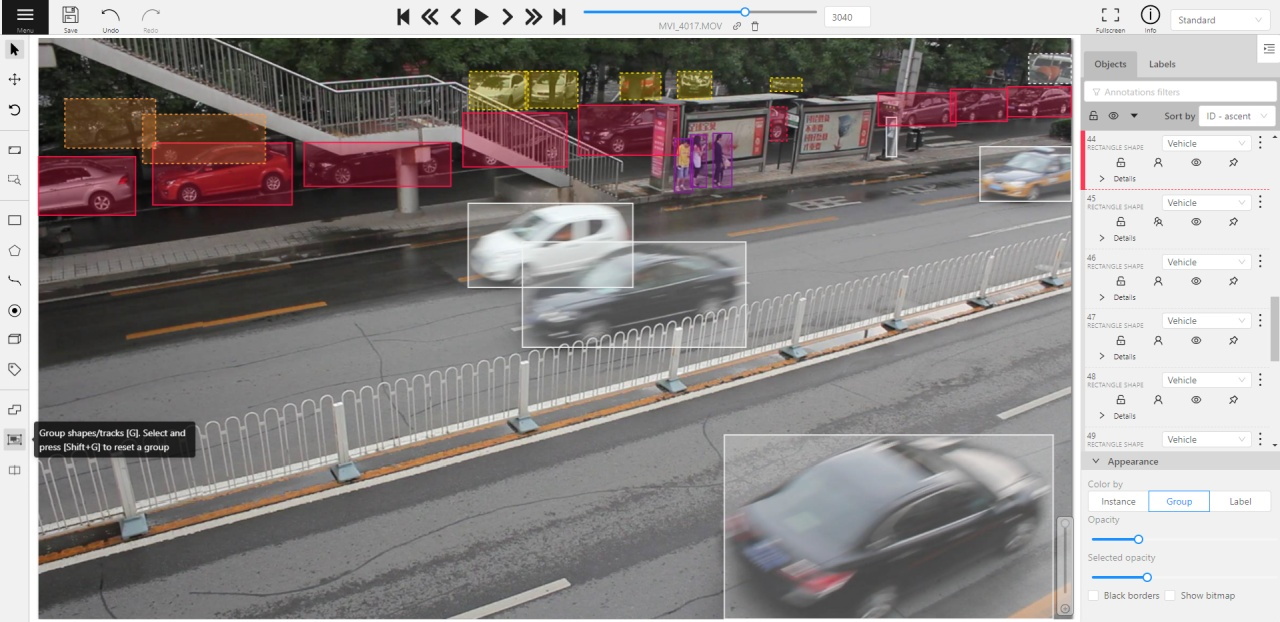

- Both boxes and polygons are converted into masks

- Grouped objects are considered as a single instance and exported as a single mask (label and attributes are taken from the largest object in the group)

Class colors

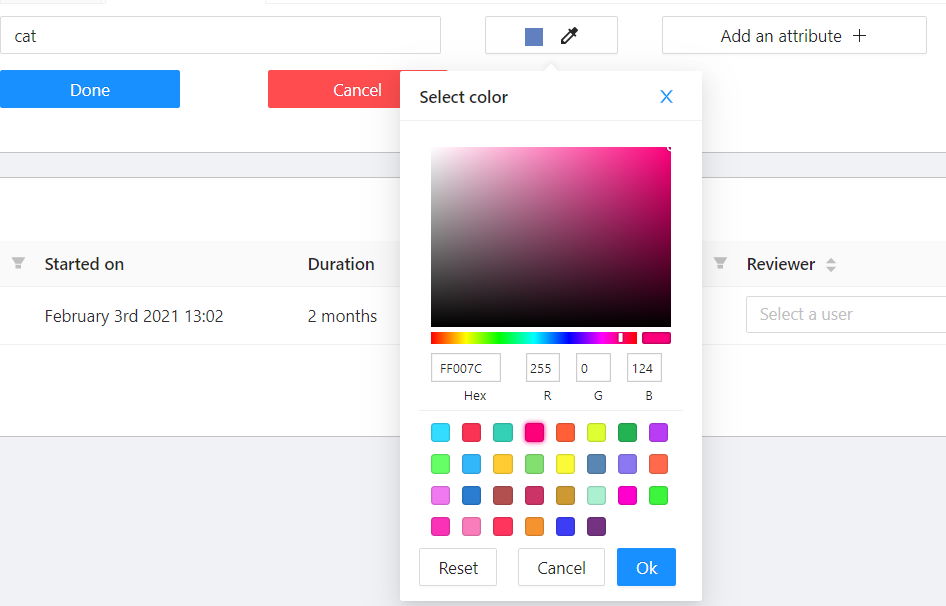

All the labels have associated colors, which are used in the generated masks. These colors can be changed in the task label properties:

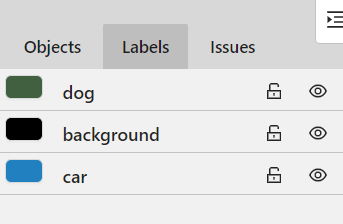

Label colors are also displayed in the annotation window on the right panel, where you can show or hide specific labels (only the presented labels are displayed):

A background class can be:

- A default class, which is implicitly-added, of black color (RGB 0, 0, 0)

backgroundclass with any color (has a priority, name is case-insensitive)- Any class of black color (RGB 0, 0, 0)

To change background color in generated masks (default is black),

change background class color to the desired one.
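The background rules above can be summarized in a few lines. This is a minimal sketch, assuming labels are given as a plain name-to-RGB dictionary; the `background_color` function is a hypothetical helper, not part of CVAT.

```python
def background_color(labels):
    """Return the RGB color used for background pixels.

    labels: dict mapping label name -> (r, g, b).
    A label named "background" (case-insensitive) takes priority;
    otherwise the implicit default of black is assumed.
    """
    for name, color in labels.items():
        if name.lower() == "background":
            return color
    return (0, 0, 0)


default_bg = background_color({"car": (255, 0, 0)})
custom_bg = background_color({"car": (255, 0, 0), "Background": (10, 20, 30)})
```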

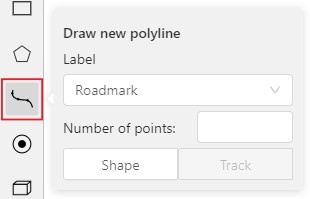

12 - Annotation with polylines

It is used for road markup annotation etc.

Before starting, you need to select the Polyline. You can set a fixed number of points

in the Number of points field, then drawing will be stopped automatically.

Click Shape to enter drawing mode. There are two ways to draw a polyline —

you either create points by clicking or by dragging a mouse on the screen while holding Shift.

When Shift isn’t pressed, you can zoom in/out (when scrolling the mouse wheel)

and move (when clicking the mouse wheel and moving the mouse). You can also delete

the previous point by right-clicking on it.

Press N again or click the Done button on the top panel to complete the shape.

You can delete a point by clicking on it with pressed Ctrl or right-clicking on a point

and selecting Delete point. Click with pressed Shift will open a polyline editor.

There you can create new points (by clicking or dragging) or delete part of a polyline by closing

the red line on another point. Press Esc to cancel editing.

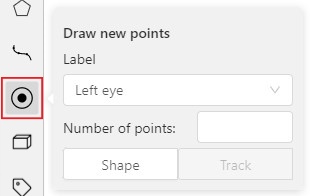

13 - Annotation with points

13.1 - Points in shape mode

It is used for face, landmarks annotation etc.

Before you start you need to select the Points. If necessary you can set a fixed number of points

in the Number of points field, then drawing will be stopped automatically.

Click Shape to enter the drawing mode. Now you can start annotation of the necessary area.

Points are automatically grouped — all points will be considered linked between each start and finish.

Press N again or click the Done button on the top panel to finish marking the area.

You can delete a point by clicking with pressed Ctrl or right-clicking on a point and selecting Delete point.

Clicking with pressed Shift will open the points shape editor.

There you can add new points into an existing shape. You can zoom in/out (when scrolling the mouse wheel)

and move (when clicking the mouse wheel and moving the mouse) while drawing. You can drag an object after

it has been drawn and change the position of individual points after finishing an object.

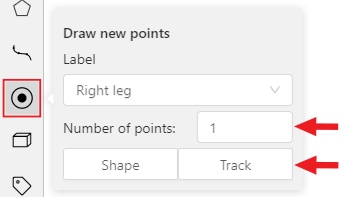

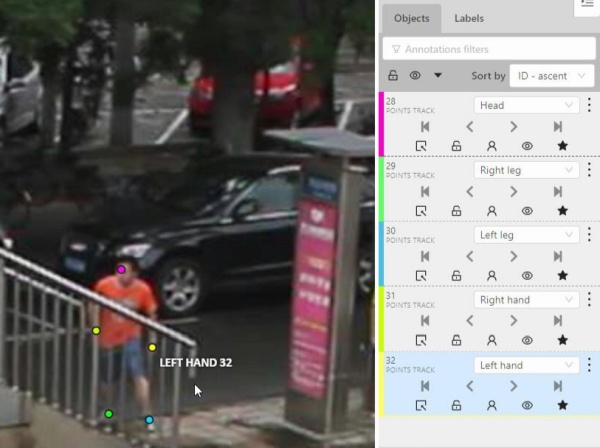

13.2 - Linear interpolation with one point

You can use linear interpolation for points to annotate a moving object:

- Before you start, select the Points.

- Linear interpolation works only with one point, so you need to set Number of points to 1.

- After that select the Track.

- Click Track to enter the drawing mode, then left-click to create a point; after that the shape will be automatically completed.

- Move forward a few frames and move the point to the desired position. This way you will create a keyframe, and intermediate frames will be drawn automatically. You can work with this object as with an interpolated track: you can hide it using the Outside, move around keyframes, etc.

- This way you’ll get linear interpolation using the Points.
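To make the idea of linear interpolation between keyframes concrete, here is a minimal sketch. It is not CVAT's implementation: the `interpolate_point` helper and the keyframe dictionary are hypothetical, and clamping to the nearest keyframe outside the annotated range is an assumption of this example.

```python
def interpolate_point(keyframes, frame):
    """Linearly interpolate a single point between keyframes.

    keyframes: dict mapping frame number -> (x, y).
    Frames before the first or after the last keyframe are clamped
    to that keyframe (an assumption made for this sketch).
    """
    frames = sorted(keyframes)
    if frame <= frames[0]:
        return keyframes[frames[0]]
    if frame >= frames[-1]:
        return keyframes[frames[-1]]
    for left, right in zip(frames, frames[1:]):
        if left <= frame <= right:
            t = (frame - left) / (right - left)  # 0.0 at left, 1.0 at right
            (x0, y0), (x1, y1) = keyframes[left], keyframes[right]
            return (x0 + t * (x1 - x0), y0 + t * (y1 - y0))


keyframes = {0: (100.0, 50.0), 10: (200.0, 150.0)}
midpoint = interpolate_point(keyframes, 5)  # halfway between the two keyframes
```

Moving the point on another frame simply adds one more entry to `keyframes`; every intermediate frame is then derived from its two neighboring keyframes.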

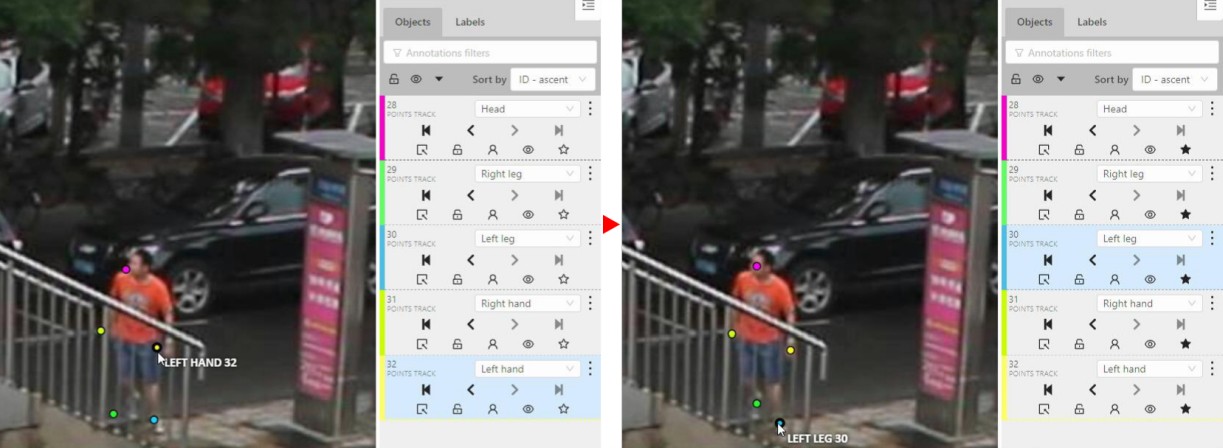

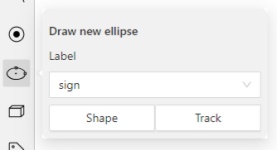

14 - Annotation with ellipses

It is used for road sign annotation etc.

First of all you need to select the ellipse on the controls sidebar.

Choose a Label and click Shape or Track to start drawing. An ellipse can be created the same way as

a rectangle, you need to specify two opposite points,

and the ellipse will be inscribed in an imaginary rectangle. Press N or click the Done button on the top panel

to complete the shape.
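As a rough illustration of how two opposite points define an ellipse inscribed in the imaginary rectangle, consider the sketch below. The `ellipse_from_corners` helper and its return layout (center and radii) are assumptions made for this example and do not describe CVAT's internal representation.

```python
def ellipse_from_corners(p1, p2):
    """Return (cx, cy, rx, ry) of the ellipse inscribed in the rectangle
    spanned by two opposite corner points p1 and p2."""
    (x1, y1), (x2, y2) = p1, p2
    cx, cy = (x1 + x2) / 2, (y1 + y2) / 2            # center of the rectangle
    rx, ry = abs(x2 - x1) / 2, abs(y2 - y1) / 2      # half-width and half-height
    return cx, cy, rx, ry


ellipse = ellipse_from_corners((10, 20), (50, 40))
```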

You can rotate ellipses using a rotation point in the same way as rectangles.

Annotation with ellipses video tutorial

15 - Annotation with cuboids

It is used to annotate 3 dimensional objects such as cars, boxes, etc… Currently the feature supports one point perspective and has the constraint where the vertical edges are exactly parallel to the sides.

15.1 - Creating the cuboid

Before you start, you have to make sure that Cuboid is selected and choose a drawing method: “from rectangle” or “by 4 points”.

Drawing cuboid by 4 points

Choose a drawing method “by 4 points” and click Shape to enter the drawing mode. There are many ways to draw a cuboid. You can draw the cuboid by placing 4 points, after that the drawing will be completed automatically. The first 3 points determine the plane of the cuboid while the last point determines the depth of that plane. For the first 3 points, it is recommended to only draw the 2 closest side faces, as well as the top and bottom face.

A few examples:

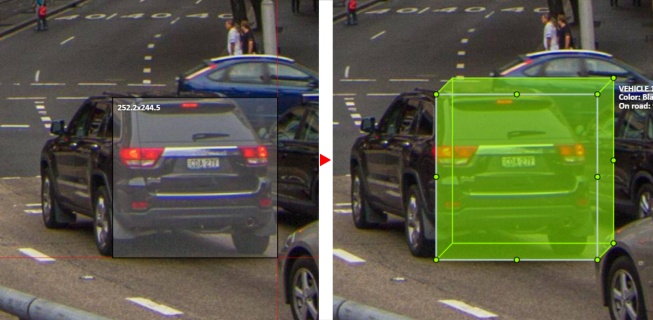

Drawing cuboid from rectangle

Choose a drawing method “from rectangle” and click Shape to enter the drawing mode. When you draw using the rectangle method, you must select the frontal plane of the object using the bounding box. The depth and perspective of the resulting cuboid can be edited.

Example:

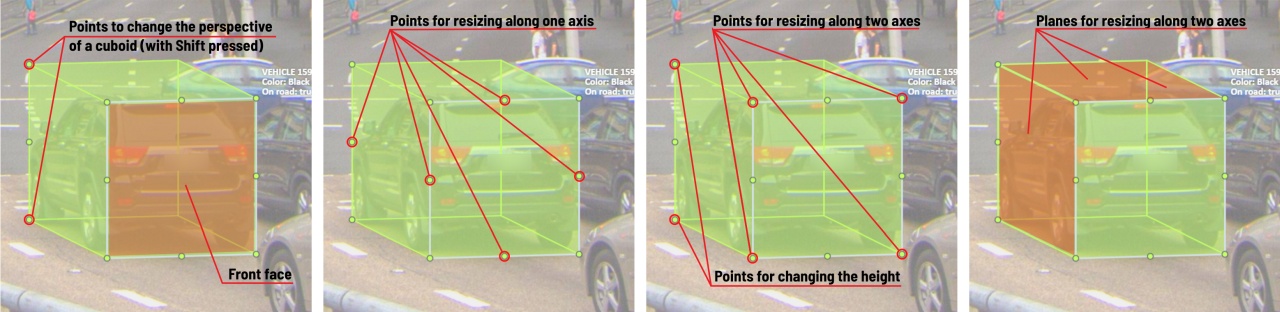

15.2 - Editing the cuboid

The cuboid can be edited in multiple ways: by dragging points, by dragging certain faces or by dragging planes. First notice that there is a face that is painted with gray lines only, let us call it the front face.

You can move the cuboid by simply dragging the shape behind the front face. The cuboid can be extended by dragging on the point in the middle of the edges. The cuboid can also be extended up and down by dragging the point at the vertices.

To draw with perspective effects it should be assumed that the front face is the closest to the camera.

To begin simply drag the points on the vertices that are not on the gray/front face while holding Shift.

The cuboid can then be edited as usual.

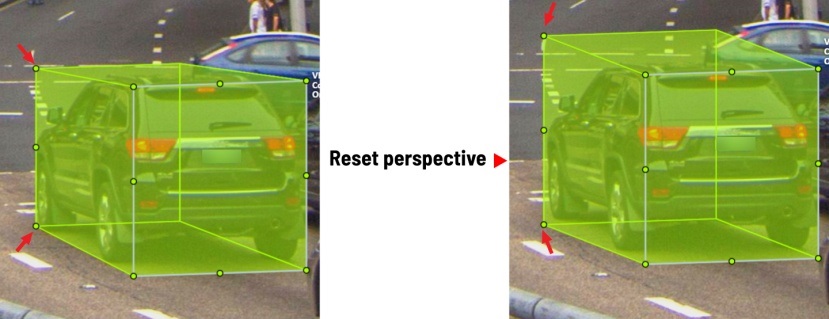

If you wish to reset perspective effects, you may right click on the cuboid,

and select Reset perspective to return to a regular cuboid.

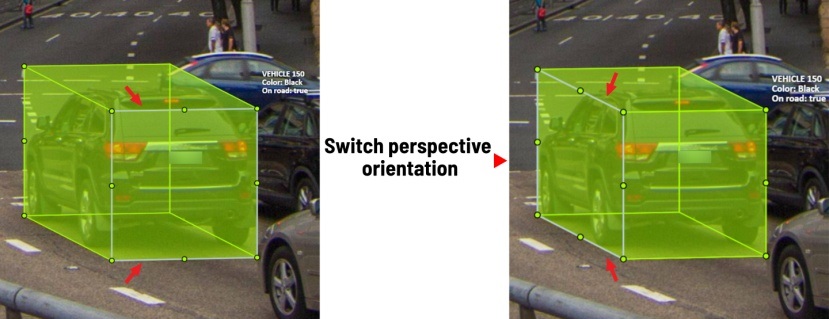

The location of the gray face can be swapped with the adjacent visible side face.

You can do it by right clicking on the cuboid and selecting Switch perspective orientation.

Note that this will also reset the perspective effects.

Certain faces of the cuboid can also be edited, these faces are: the left, right and dorsal faces, relative to the gray face. Simply drag the faces to move them independently from the rest of the cuboid.

You can also use cuboids in track mode, similar to rectangles in track mode (basics and advanced) or Track mode with polygons

16 - Annotation with skeletons

In this guide, we delve into the efficient process of annotating complex structures through the implementation of Skeleton annotations.

Skeletons serve as annotation templates for annotating complex objects with a consistent structure, such as human pose estimation or facial landmarks.

A Skeleton is composed of numerous points (also referred to as elements), which may be connected by edges. Each point functions as an individual object, possessing unique attributes and properties like color, occlusion, and visibility.

Skeletons can be exported in two formats: CVAT for image and COCO Keypoints.

Note that skeleton labels cannot be imported into a label-less project by importing a dataset. You need to define the labels manually before the import.

See:

- Adding Skeleton manually

- Adding Skeleton labels from the model

- Annotation with Skeletons

- Automatic annotation with Skeletons

- Editing skeletons on the canvas

- Editing skeletons on the sidebar

Adding Skeleton manually

To start annotating using skeletons, you need to set up a Skeleton task in Configurator:

To open Configurator, when creating a task, click on the Setup skeleton button if you want to set up the skeleton manually, or From model if you want to add skeleton labels from a model.

Skeleton Configurator

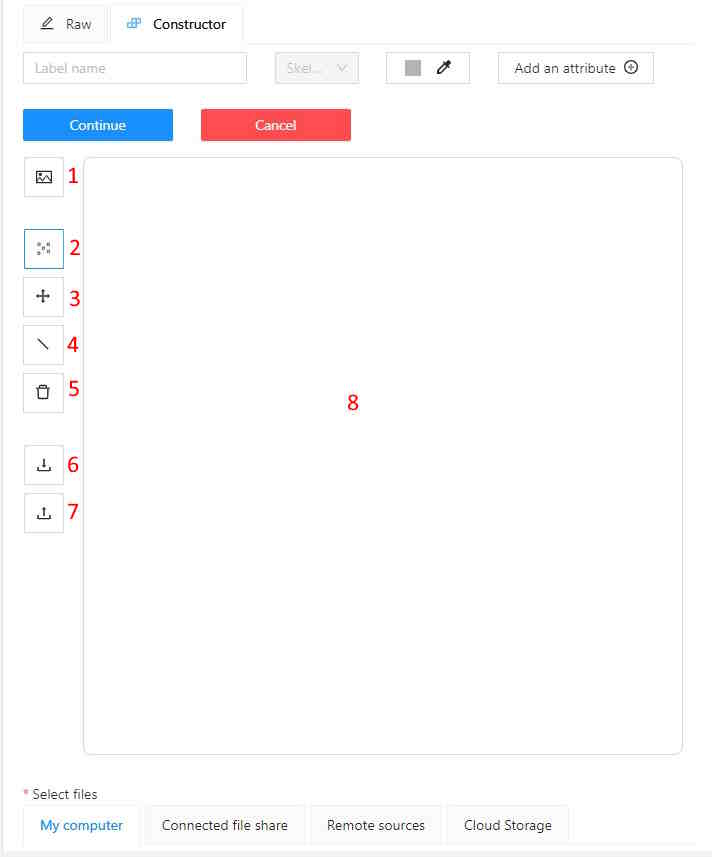

The skeleton Configurator is a tool to build skeletons for annotation. It has the following fields:

| Number | Name | Description |

|---|---|---|

| 1 | Upload background image | (Optional) Use it to upload a background image, to draw a skeleton on top of it. |

| 2 | Add point | Use it to add Skeleton points to the Drawing area (8). |

| 3 | Click and drag | Use it to move points across the Drawing area (8). |

| 4 | Add edge | Use it to add edge on the Drawing area (8) to connect the points (2). |

| 5 | Remove point | Use it to remove points. Click on Remove point and then on any point (2) on the Drawing area (8) to delete the point. |

| 6 | Download skeleton | Use it to download created skeleton in .SVG format. |

| 7 | Upload skeleton | Use it to upload skeleton in .SVG format. |

| 8 | Drawing area | Use it as a canvas to draw a skeleton. |

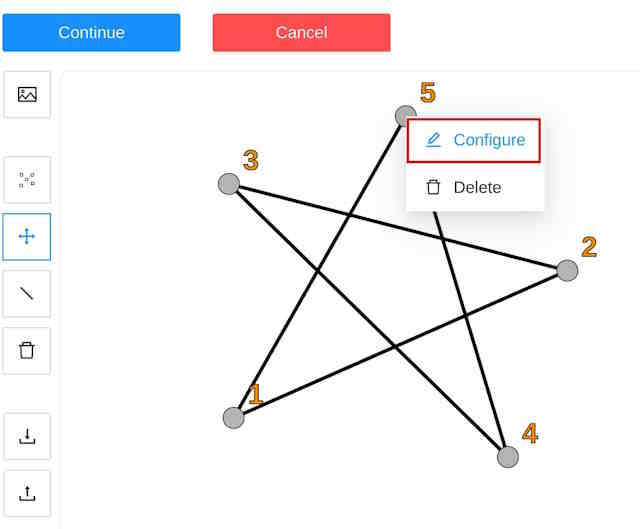

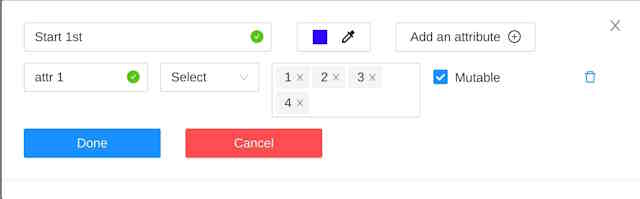

Configuring Skeleton points

You can name labels, set attributes, and change the color of each point of the skeleton.

To do this, right-click on the skeleton point and select Configure:

In the opened menu, you can change the point setting. It is similar to adding labels and attributes of the regular task:

A Skeleton point can only exist within its parent Skeleton.

Note that you cannot change the skeleton configuration for an existing task/project.

You can copy/insert skeleton configuration from the Raw tab of the label configurator.

Adding Skeleton labels manually

To create the Skeleton task, do the following:

- Open Configurator.

- (Optional) Upload background image.

- In the Label name field, enter the name of the label.

- (Optional) Add attribute.

Note: you can add attributes exclusively to each point. For more information, see Configuring Skeleton points.

- Use Add point to add points to the Drawing area.

- Use Add edge to add edges between points.

- Upload files.

- Click:

- Submit & Open to create and open the task.

- Submit & Continue to submit the configuration and start creating a new task.

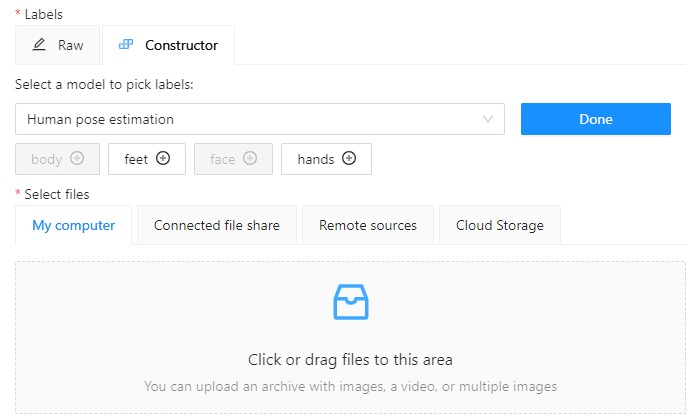

Adding Skeleton labels from the model

To add points from the model and annotate, do the following:

- Open Basic configurator.

- On the Constructor tab, click From model.

- From the Select a model to pick labels list, select the Human pose estimation model or others if available.

- Click on the model’s labels that you want to use. Selected labels will become gray.

- (Optional) If you want to adjust labels, within the label, click the Update attributes icon. The Skeleton configurator will open, where you can configure the skeleton.

Note: Labels cannot be adjusted after the task/project is created.

- Click Done. The labels that you selected will appear in the labels window.

- Upload data.

- Click:

- Submit & Open to create and open the task.

- Submit & Continue to submit the configuration and start creating a new task.

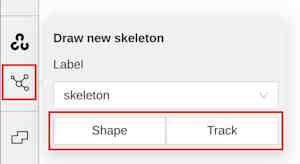

Annotation with Skeletons

To annotate with Skeleton, do the following:

- Open job.

- On the tools panel select Draw new skeleton.

- Select Track to annotate with tracking or Shape to annotate without tracking.

- Draw a skeleton on the image.

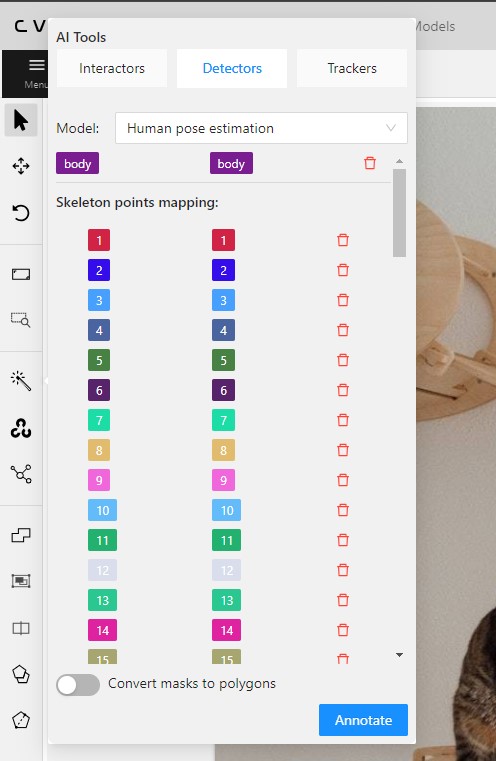

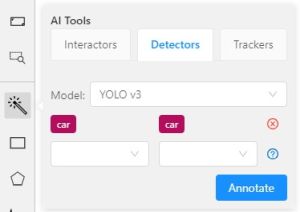

Automatic annotation with Skeletons

To automatically annotate with Skeleton, do the following:

- Open the job and on the tools panel select AI Tools > Detectors.

- From the drop-down list select the model. You will see a list of points to match and the name of the skeleton on the top of the list.

- (Optional) By clicking on the Bin icon, you can remove any mapped item:

  - A skeleton together with all points.

  - Certain points from two mapped skeletons.

- Click Annotate.

Editing skeletons on the canvas

A drawn skeleton is encompassed within a bounding box, which allows you to manipulate the skeleton as a regular bounding box, enabling actions such as dragging, resizing, or rotating:

Upon repositioning a point, the bounding box adjusts automatically, without affecting other points:

Additionally, Shortcuts are applicable to both the skeleton as a whole and its elements:

- To apply a shortcut to the entire skeleton, hover over the bounding box and press the shortcut key. This is applicable to shortcuts like lock, occluded, pinned, keyframe, and outside for skeleton tracks.

- To apply a shortcut to a specific skeleton point, hover over the point and press the shortcut key. The same list of shortcuts is available, with the addition of outside, which is also applicable to individual skeleton shape elements.
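The way the bounding box follows a repositioned point, described earlier in this section, can be sketched as follows. This is only an illustration: the point names and the `bounding_box` helper are hypothetical, and the box is assumed to be the tightest axis-aligned rectangle around all points.

```python
def bounding_box(points):
    """Return (x_min, y_min, x_max, y_max) enclosing all skeleton points.

    points: dict mapping point name -> (x, y).
    """
    xs = [x for x, _ in points.values()]
    ys = [y for _, y in points.values()]
    return min(xs), min(ys), max(xs), max(ys)


skeleton = {"head": (50, 10), "left_hand": (20, 40), "right_hand": (80, 40)}
before = bounding_box(skeleton)

# Repositioning one point changes only that point; the box is recomputed.
skeleton["right_hand"] = (95, 45)
after = bounding_box(skeleton)
```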

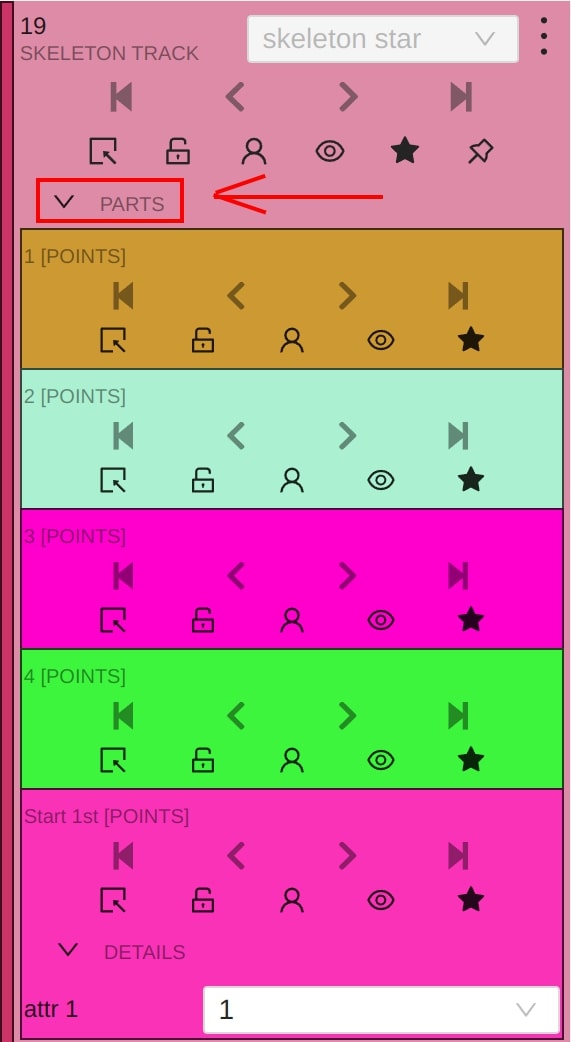

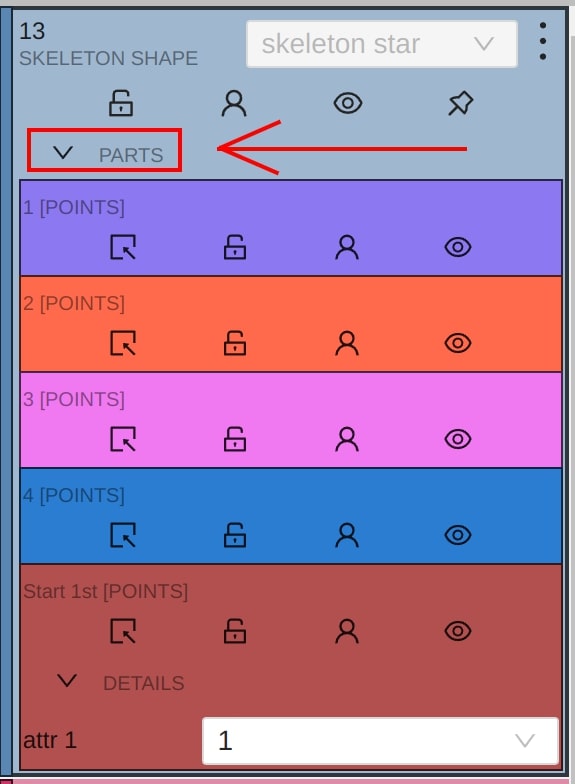

Editing skeletons on the sidebar

In CVAT, the sidebar offers an alternative method for setting up skeleton properties and attributes.

This approach is similar to that used for other object types supported by CVAT, but with a few specific alterations:

An additional collapsible section is provided for users to view a comprehensive list of skeleton parts.

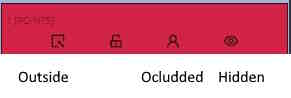

Skeleton points can have properties like Outside, Occluded, and Hidden.

Both Outside and Hidden make a skeleton point invisible.

- Outside property is part of annotations. Use it when part of the object is out of frame borders.

- Hidden makes a point hidden only for the annotator’s convenience; this property will not be saved between different sessions.

- Occluded keeps the point visible on the frame and usually means that the point is still on a frame, just hidden behind another object.

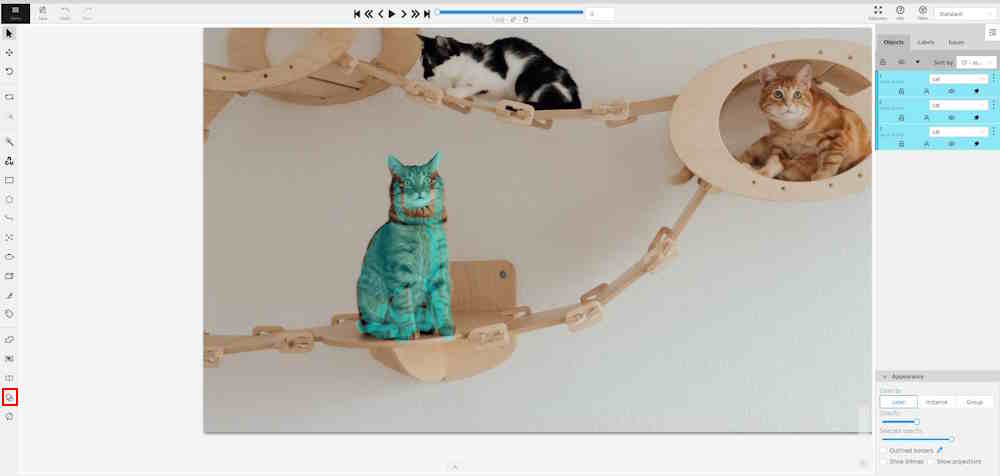

17 - Annotation with brush tool

With a brush tool, you can create masks for disjoint objects, that have multiple parts, such as a house hiding behind trees, a car behind a pedestrian, or a pillar behind a traffic sign. The brush tool has several modes, for example: erase pixels, change brush shapes, and polygon-to-mask mode.

Use brush tool for Semantic (Panoptic) and Instance Image Segmentation tasks.

For more information about segmentation masks in CVAT, see Creating masks.

See:

- Brush tool menu

- Annotation with brush

- Annotation with polygon-to-mask

- Remove underlying pixels

- AI Tools

- Import and export

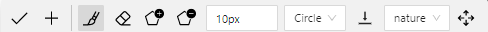

Brush tool menu

The brush tool menu appears on the top of the screen after you click Shape:

It has the following elements:

| Element | Description |

|---|---|

| Save mask | Saves the created mask. The saved mask will appear on the object sidebar. |

| Save mask and continue | Adds a new mask to the object sidebar and allows you to draw a new one immediately. |

| Brush | Adds a new mask or new regions to the previously added mask. |

| Eraser | Removes part of the mask. |

| Polygon | Selection tool. Selection will become a mask. |

| Remove polygon selection | Subtracts part of the polygon selection. |

| Brush size | Brush size in pixels. Note: Visible only when Brush or Eraser are selected. |

| Brush shape | Two options: circle and square. Note: Visible only when Brush or Eraser are selected. |

| Remove underlying pixels | When you are drawing or editing a mask with this tool, pixels on other masks that are located at the same positions as the pixels of the current mask are deleted. |

| Hide mask | When drawing or editing a mask, you can enable this feature to temporarily hide the mask, allowing you to see the objects underneath more clearly. |

| Label | Label that will be assigned to the newly created mask. |

| Move | Click and hold to move the menu bar to another place on the screen. |

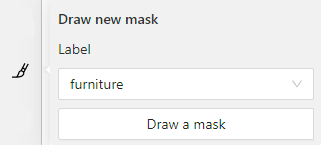

Annotation with brush

To annotate with brush, do the following:

- From the controls sidebar, select Brush.

- In the Draw new mask menu, select label for your mask, and click Shape. The Brush tool will be selected by default.

- With the brush, draw a mask on the object you want to label. To erase selection, use Eraser.

- After you applied the mask, on the top menu bar click Save mask to finish the process (or N on the keyboard).

- Added object will appear on the objects sidebar.

To add the next object, repeat steps 1 to 5. All added objects will be visible on the image and the objects sidebar.

To save the job with all added objects, on the top menu, click Save.

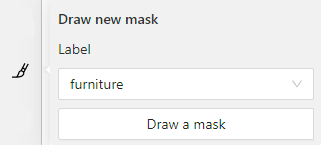

Annotation with polygon-to-mask

To annotate with polygon-to-mask, do the following:

- From the controls sidebar, select Brush.

- In the Draw new mask menu, select label for your mask, and click Shape.

- In the brush tool menu, select Polygon.

- With the Polygon tool, draw a mask for the object you want to label. To correct selection, use Remove polygon selection.

- Use Save mask (or N on the keyboard) to switch between add/remove polygon tools.

- After you added the polygon selection, on the top menu bar click Save mask to finish the process (or N on the keyboard).

- Click Save mask again (or N on the keyboard). The added object will appear on the objects sidebar.

To add the next object, repeat steps 1 to 5.

All added objects will be visible on the image and the objects sidebar.

To save the job with all added objects, on the top menu, click Save.

Remove underlying pixels

Use Remove underlying pixels tool when you want to add a mask and simultaneously delete the pixels of

other masks that are located at the same positions. It is a highly useful feature to avoid meticulous drawing edges twice between two different objects.
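The effect of Remove underlying pixels can be pictured with masks stored as sets of pixel coordinates. This is a minimal sketch, not CVAT's mask storage: the `add_mask` helper and the dictionary of masks are hypothetical names used only for this example.

```python
def add_mask(masks, name, pixels, remove_underlying=True):
    """Add pixels to the mask `name`.

    masks: dict mapping mask name -> set of (x, y) pixels.
    With remove_underlying=True, the same pixels are deleted from the masks
    that already exist, so no pixel ends up in two masks.
    """
    pixels = set(pixels)
    if remove_underlying:
        for other in masks:
            masks[other] -= pixels
    masks[name] = masks.get(name, set()) | pixels
    return masks


masks = {"tree": {(0, 0), (0, 1), (1, 1)}}
add_mask(masks, "house", {(1, 1), (2, 2)})
```

With the option disabled, the shared pixel `(1, 1)` would stay in both masks, which is the overlap the tool is meant to avoid.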


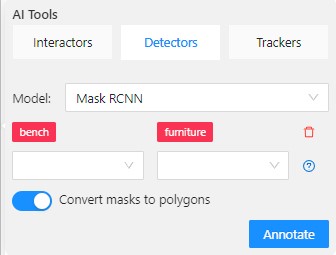

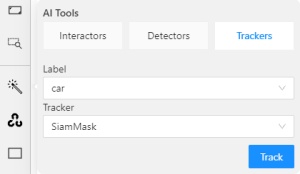

AI Tools

You can convert AI tool masks to polygons. To do this, use the following AI tool menu:

- Go to the Detectors tab.

- Switch toggle Masks to polygons to the right.

- Add source and destination labels from the drop-down lists.

- Click Annotate.

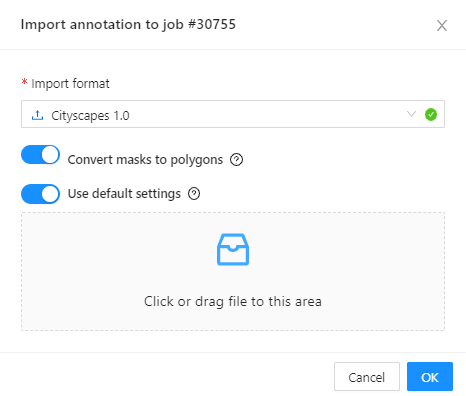

Import and export

For export, see Export dataset

Import follows the general import dataset procedure, with the additional option of converting masks to polygons.

Note: This option is available for formats that work with masks only.

To use it, when uploading the dataset, switch the Convert masks to polygon toggle to the right:

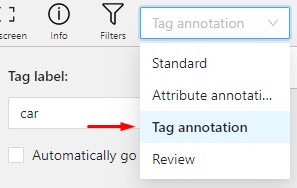

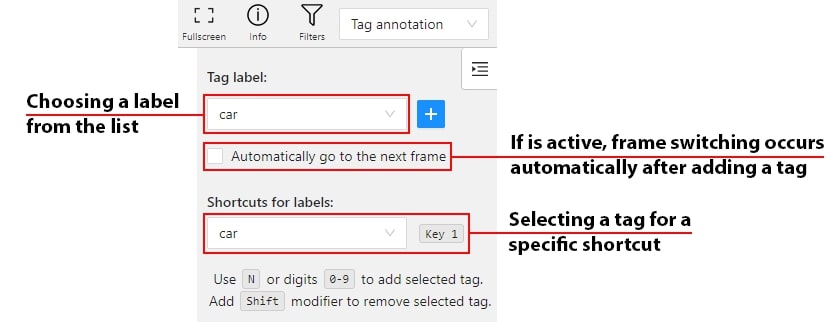

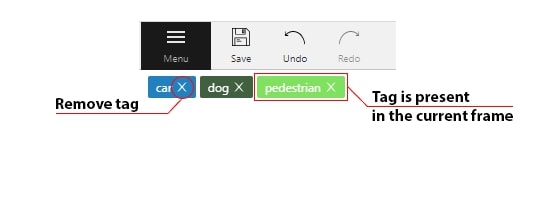

18 - Annotation with tags

It is used to annotate frames; tags are not displayed in the workspace.

Before you start, open the drop-down list in the top panel and select Tag annotation.

The objects sidebar will be replaced with a special panel for working with tags.

Here you can select a label for a tag and add it by clicking on the Plus button.

You can also customize hotkeys for each label.

If you need to use only one label for one frame, then enable the Automatically go to the next frame

checkbox, then after you add the tag the frame will automatically switch to the next.

Tags will be shown in the top left corner of the canvas. You can show/hide them in the settings.

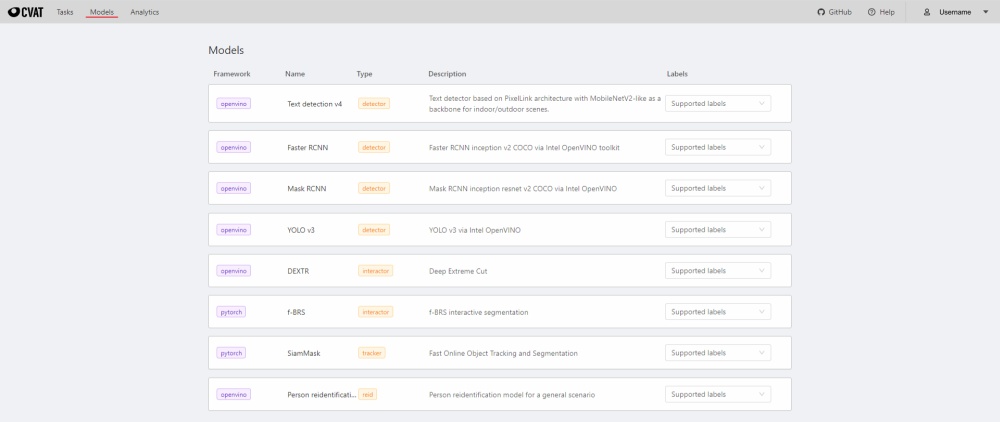

19 - Models

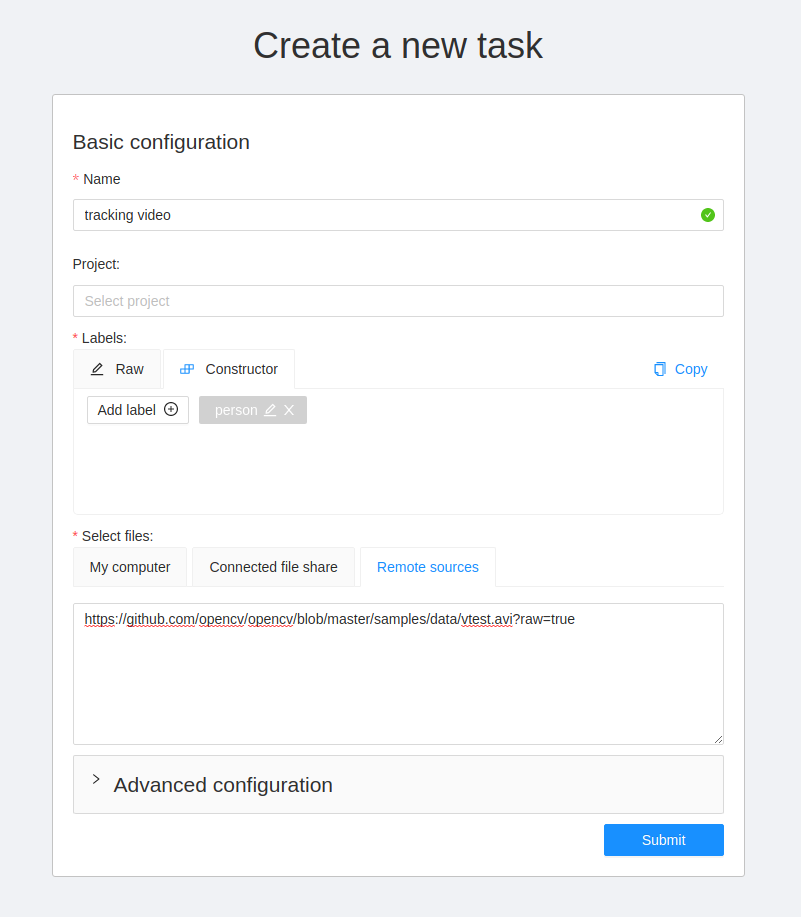

To deploy the models, you will need to install the necessary components by following the Semi-automatic and Automatic Annotation guide. To learn how to deploy a model, read the Serverless tutorial.

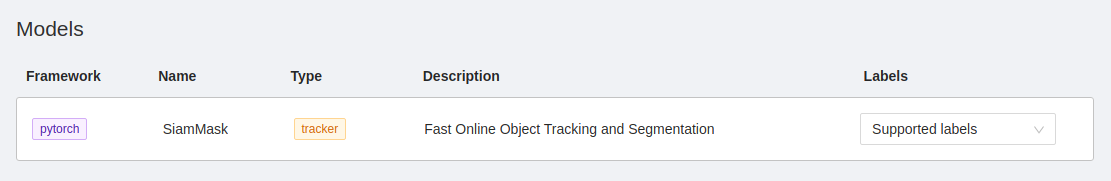

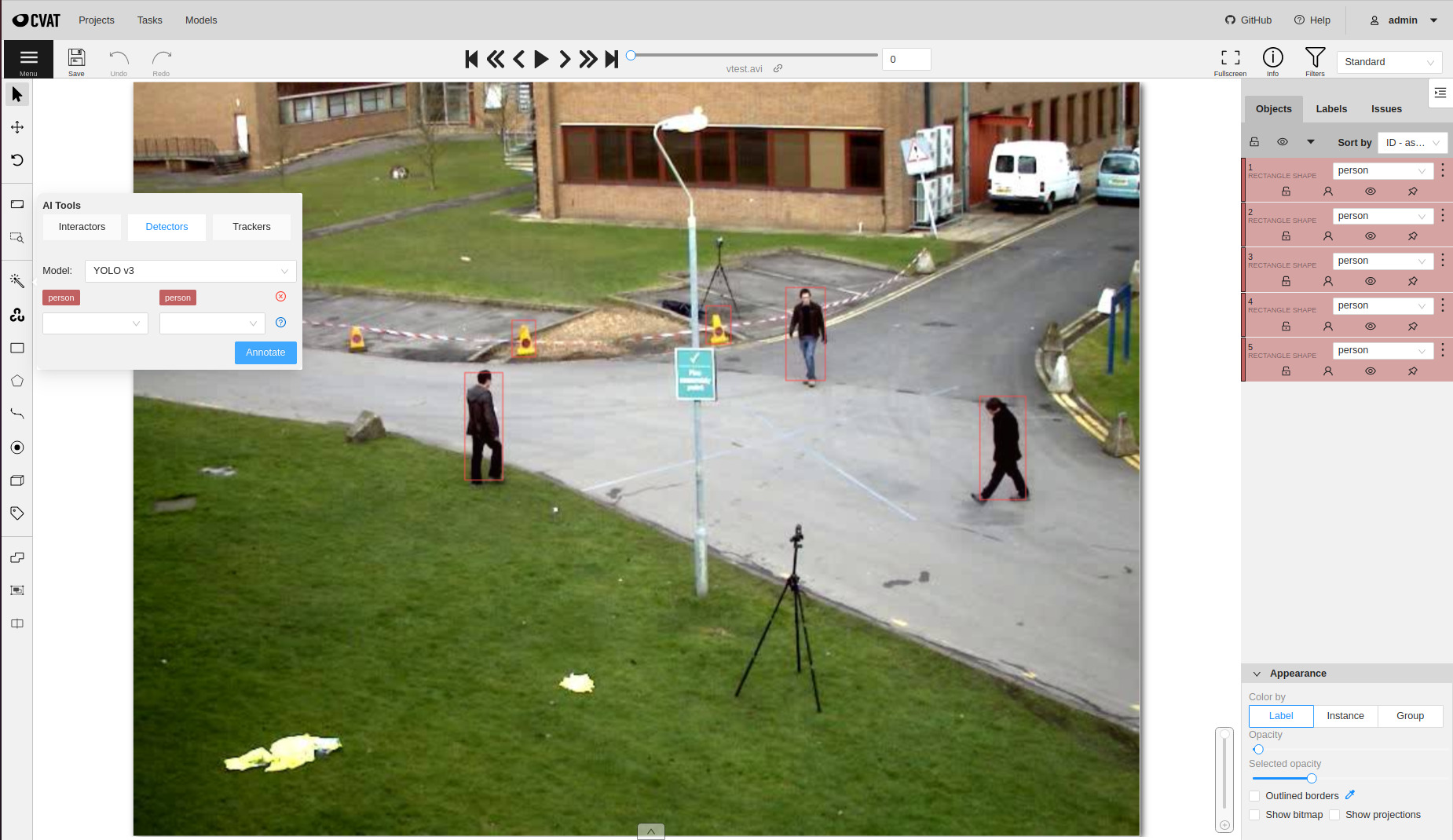

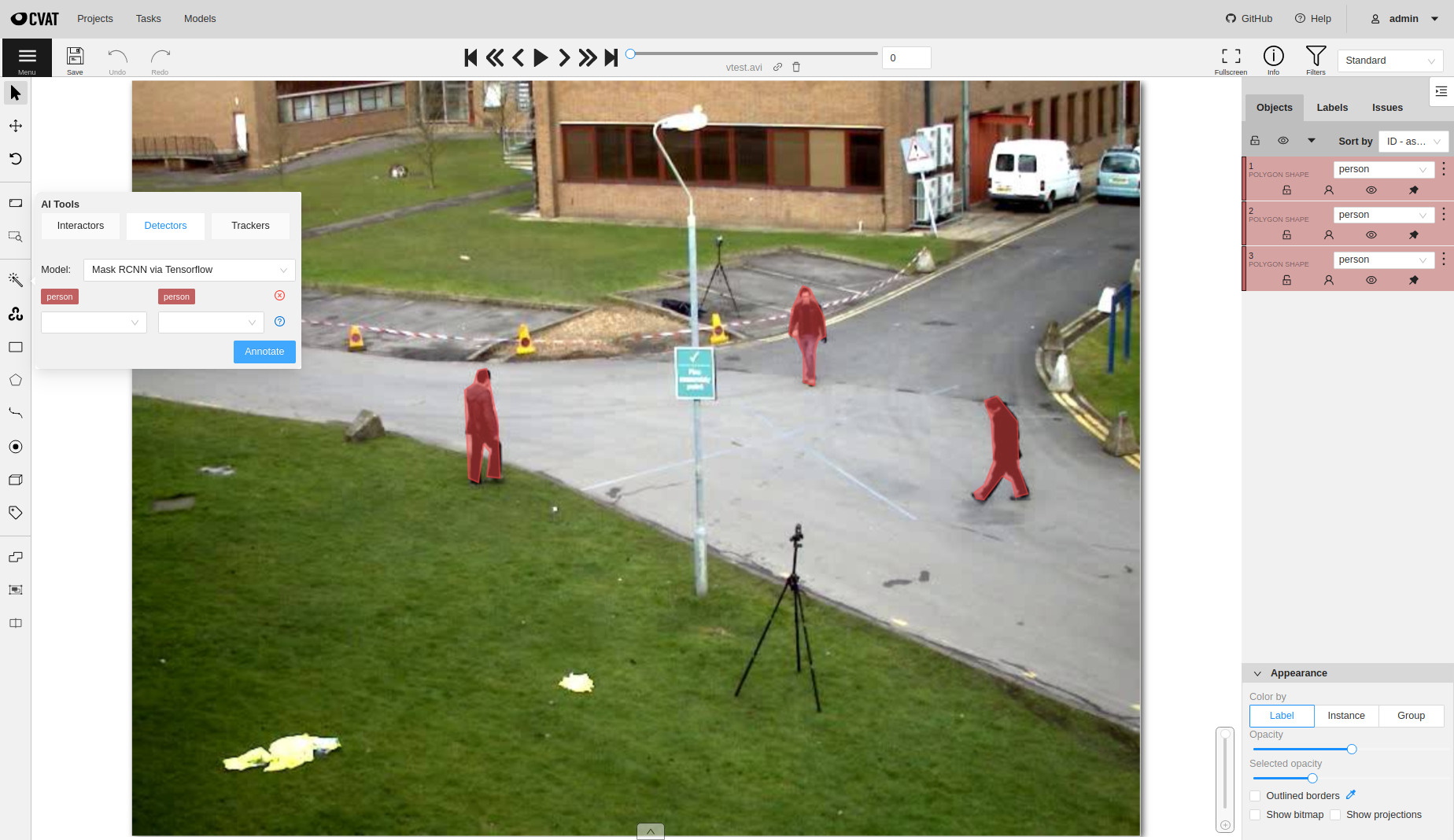

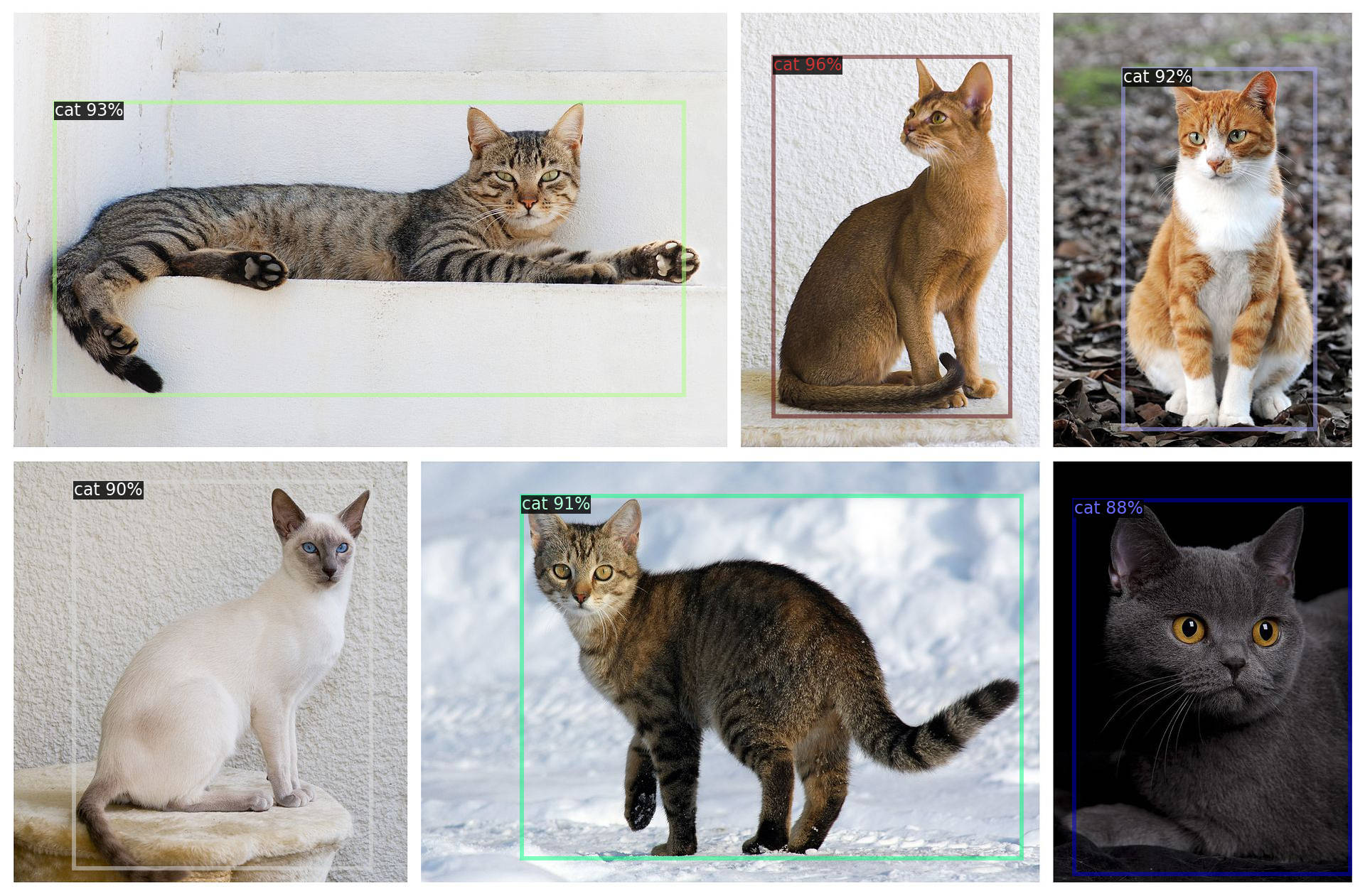

The Models page contains a list of deep learning (DL) models deployed for semi-automatic and automatic annotation. To open the Models page, click the Models button on the navigation bar. The list of models is presented in the form of a table. The parameters indicated for each model are the following:

- Framework the model is based on

- model Name

- model Type:

  - detector - used for automatic annotation (available in detectors and automatic annotation)

  - interactor - used for semi-automatic shape annotation (available in interactors)

  - tracker - used for semi-automatic track annotation (available in trackers)

  - reid - used to combine individual objects into a track (available in automatic annotation)

- Description - brief description of the model

- Labels - list of the supported labels (only for the models of the detectors type)

20 - CVAT Analytics and QA in Cloud

20.1 - Automated QA, Review & Honeypots

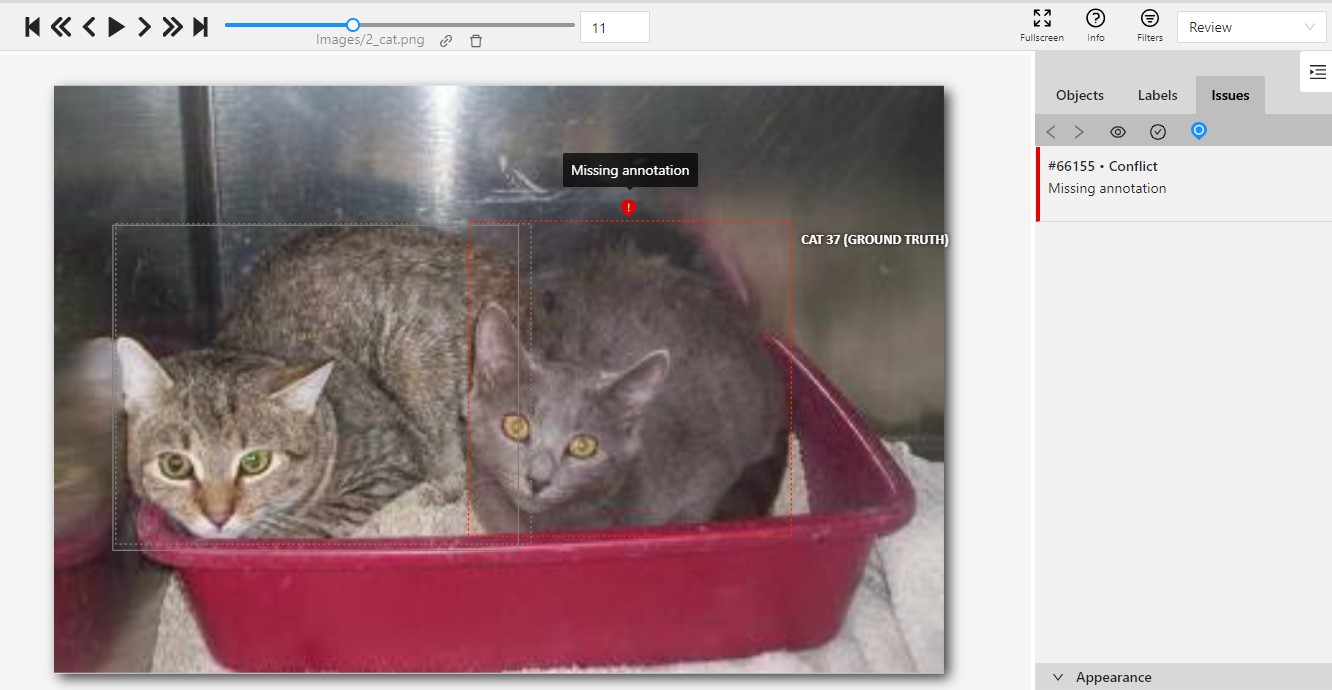

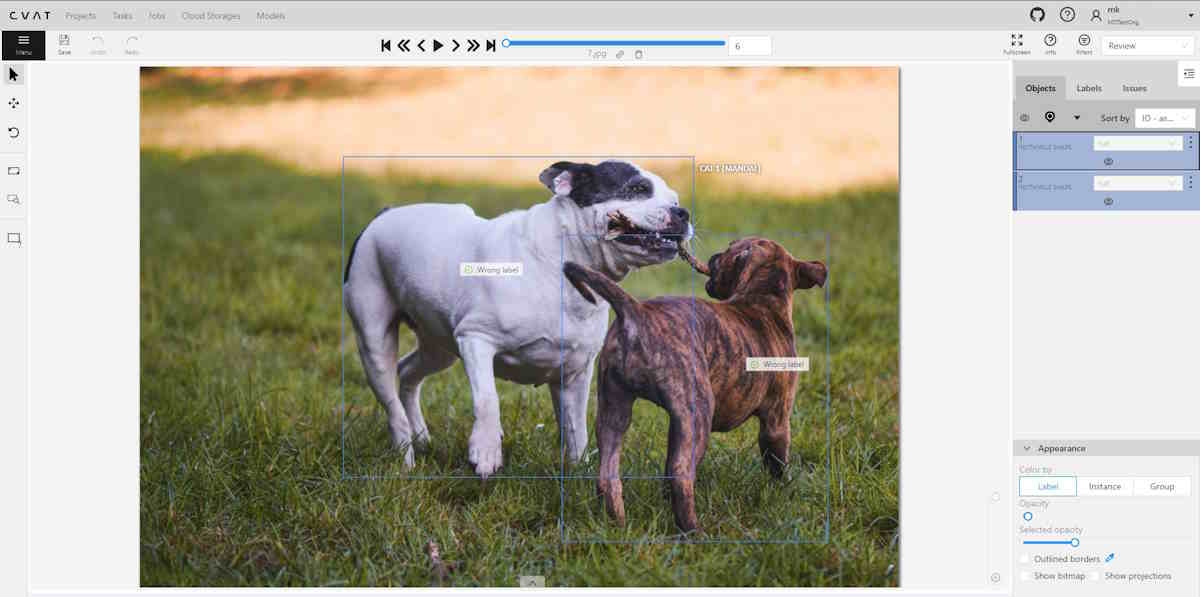

In CVAT, it’s possible to evaluate the quality of annotation through the creation of a validation subset of images. To estimate the task quality, CVAT compares all other jobs in the task against the established Ground truth job, and calculates annotation quality based on this comparison.

Note that quality estimation only supports 2D tasks. It supports all the annotation types except 2D cuboids.

Note that quality estimation is currently available for tasks and jobs. Quality estimation in projects is not supported.

CVAT has the following features for automated quality control of annotations:

- Validation set configuration for a task

- Job validation on job finish (“Immediate feedback”)

- Review mode for problems found

- Quality analytics

Basics

There are several approaches to quality estimation used in the industry. In CVAT, we can use a method known as Ground Truth or Honeypots. The method assumes there are Ground Truth annotations for images in the dataset. This method is statistical, which means that we can use only a small portion of the whole dataset to estimate quality on the full dataset, so we don’t need to annotate the whole dataset twice. Here we assume that the images in the dataset are similar (represent the same task).

We will call the validation portion of the whole dataset (or a task in CVAT) a validation set. In practice, it is typically expected that annotations in the validation set are carefully validated and curated. It means that they are more expensive - creating them might require expert annotators or just several iterations of annotation and validation. It means that it’s desirable to keep the validation set small enough. At the same time, it must be representative enough to provide reliable estimations. To achieve this, it’s advised that the validation set images are sampled randomly and independently from the full dataset. That is, for the quality assurance to function correctly, the validation set must have some portion of the task frames, and the frames must be chosen randomly.

Depending on the dataset size, data variance, and task complexity, 5-15% of the data is typically good enough for quality estimation, while keeping extra annotation overhead for the Ground Truth acceptable.

For example, in a typical task with 2000 frames, selecting just 5%, which is 100 extra frames to annotate, is enough to estimate the annotation quality. If the task contains only 30 frames, it’s advisable to select 8-10 frames, which is about 30%. It is more than 15%, but in the case of smaller datasets, we need more samples to estimate quality reliably, as data variance is higher.
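The arithmetic above, together with random and independent sampling, can be sketched in a few lines. This is an illustration only: `pick_validation_frames` is a hypothetical helper, and using Python's `random.Random` with a seed is an assumption of this example, not a description of how CVAT selects frames.

```python
import random


def pick_validation_frames(frame_count, share, seed=None):
    """Pick round(frame_count * share) distinct frame indices uniformly at random.

    A fixed seed makes the selection reproducible.
    """
    k = max(1, round(frame_count * share))
    rng = random.Random(seed)
    return sorted(rng.sample(range(frame_count), k))


# 5% of a 2000-frame task: 100 validation frames.
large_task = pick_validation_frames(2000, 0.05, seed=42)

# A 30-frame task needs a larger share, for example 30%, which is 9 frames.
small_task = pick_validation_frames(30, 0.30, seed=42)
```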

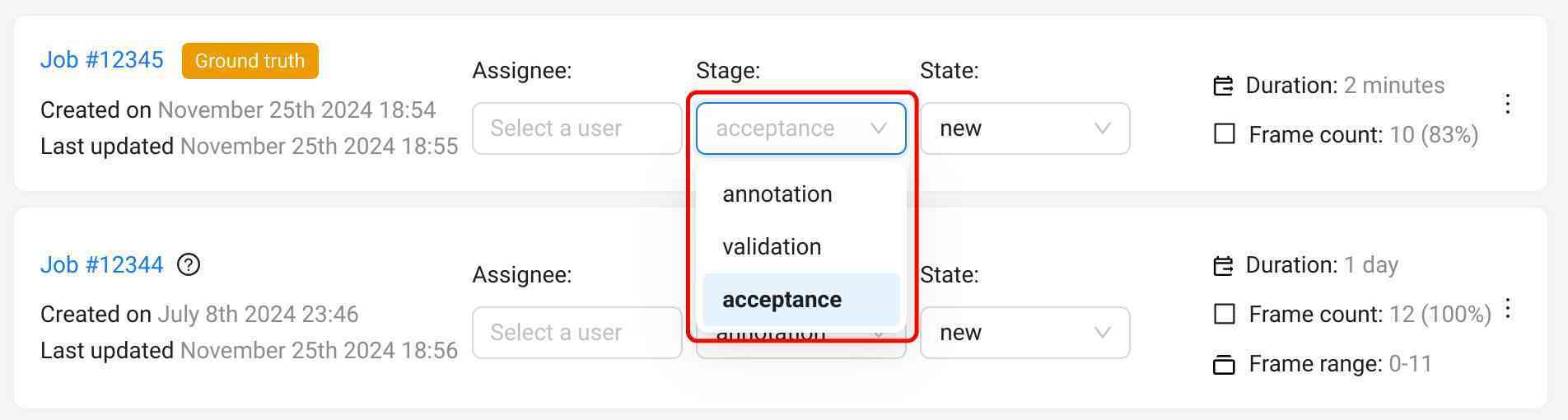

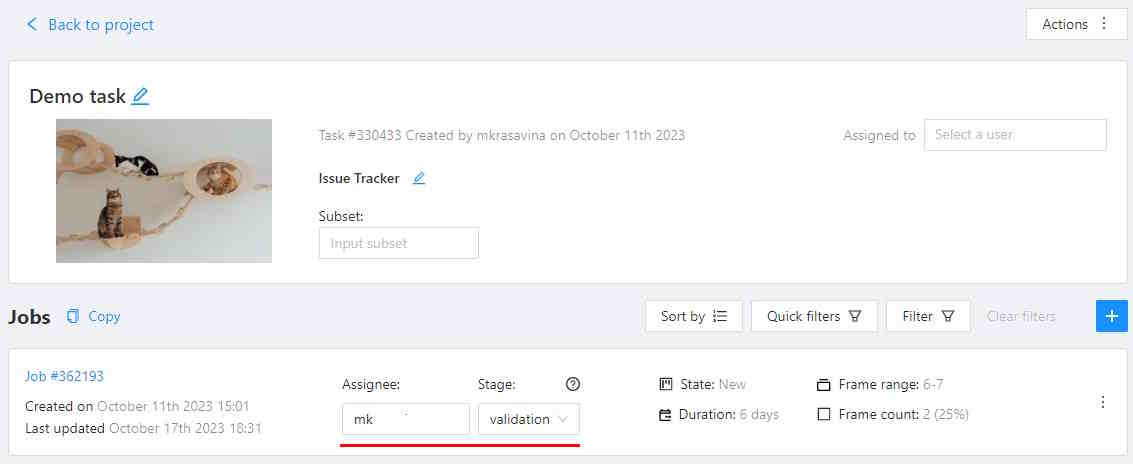

Ground truth jobs

A Ground Truth job (GT job) is a way to represent the validation set in a CVAT task. This job is similar to regular annotation jobs - you can edit the annotations manually, use auto-annotation features, and import annotations in this job. There can be no more than 1 Ground Truth job in a task.

To enable quality estimation in a task, you need to create a Ground truth job in the task,

annotate it, switch the job stage to acceptance, and set the job state to completed.

Once the Ground Truth job is configured, CVAT will start using this job for quality estimation.

Read more about Ground Truth management here.

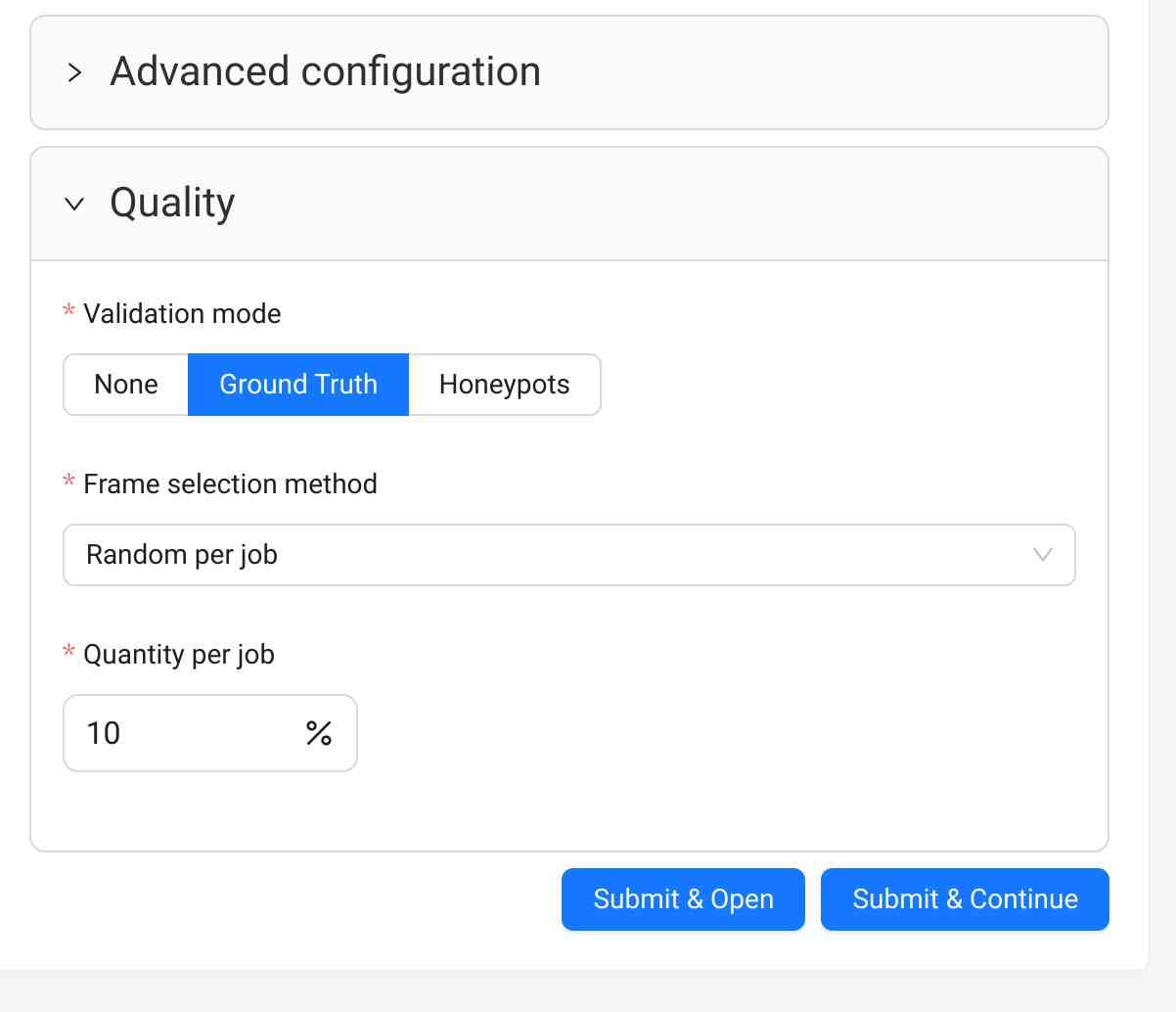

Configuring quality estimation

Quality estimation is configured on the Task level.

- Go to the task creation page

- Configure basic and advanced parameters according to your requirements, and attach a dataset to be annotated

- Scroll down to the Quality Control section below

- Select one of the validation modes available

- Create the task and open the task page

- Upload or create Ground Truth annotations in the Ground Truth job in the task

- Switch the Ground Truth job into the acceptance stage and completed state

For already existing tasks only the Ground Truth validation mode is available. If you want to use Honeypots for your task, you will need to recreate the task.

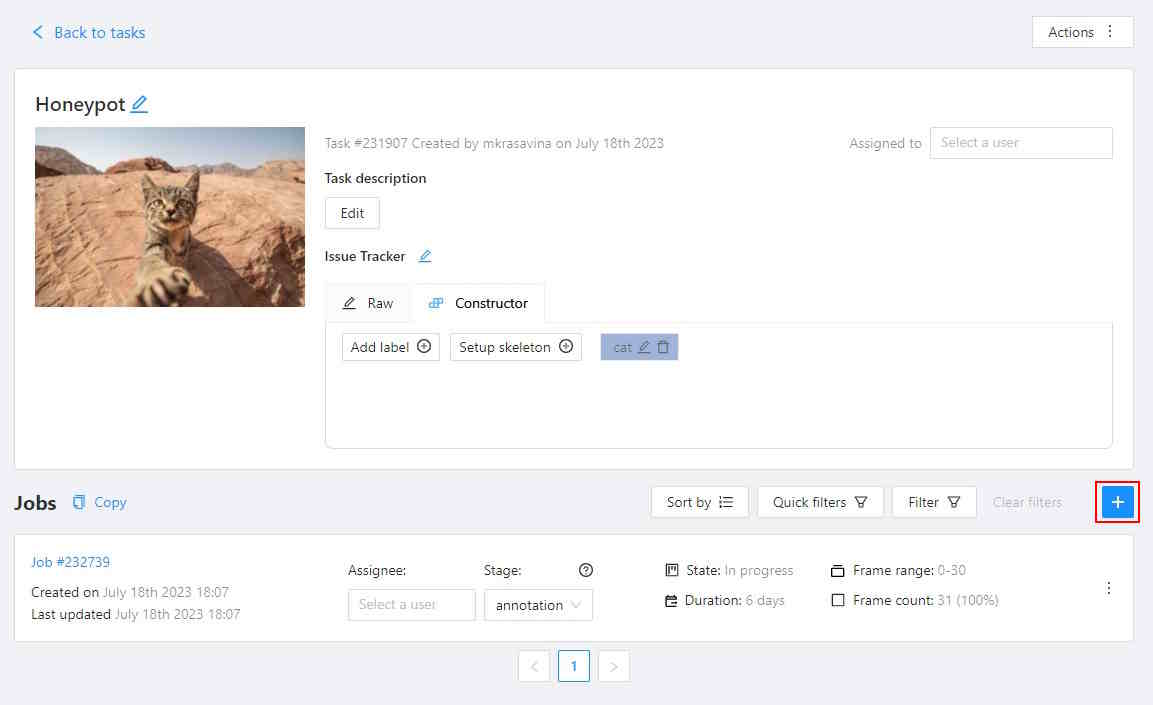

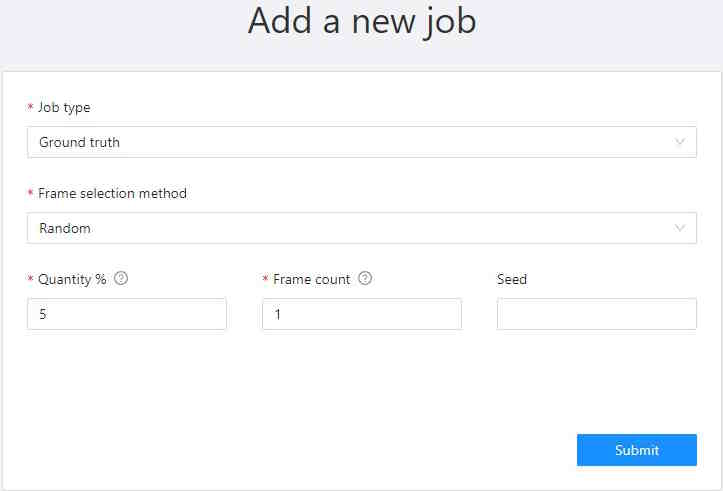

- Open the task page

- Click +.

- In the Add new job window, fill in the following fields:

- Job type: Use the default parameter Ground truth.

- Frame selection method: Use the default parameter Random.

- Quantity %: Set the desired percentage of frames for the Ground truth job. Note that when you use Quantity %, the Frames field will be autofilled.

- Frame count: Set the desired number of frames for the Ground truth job. Note that when you use Frames, the Quantity % field will be autofilled.

- Seed: (Optional) If you need to make the random selection reproducible, specify this number. It can be any integer number; the same value will yield the same random selection (given that the number of frames is unchanged). Note that if you want to use a custom frame sequence, you can do this using the server API instead, see Job API create().

- Click Submit.

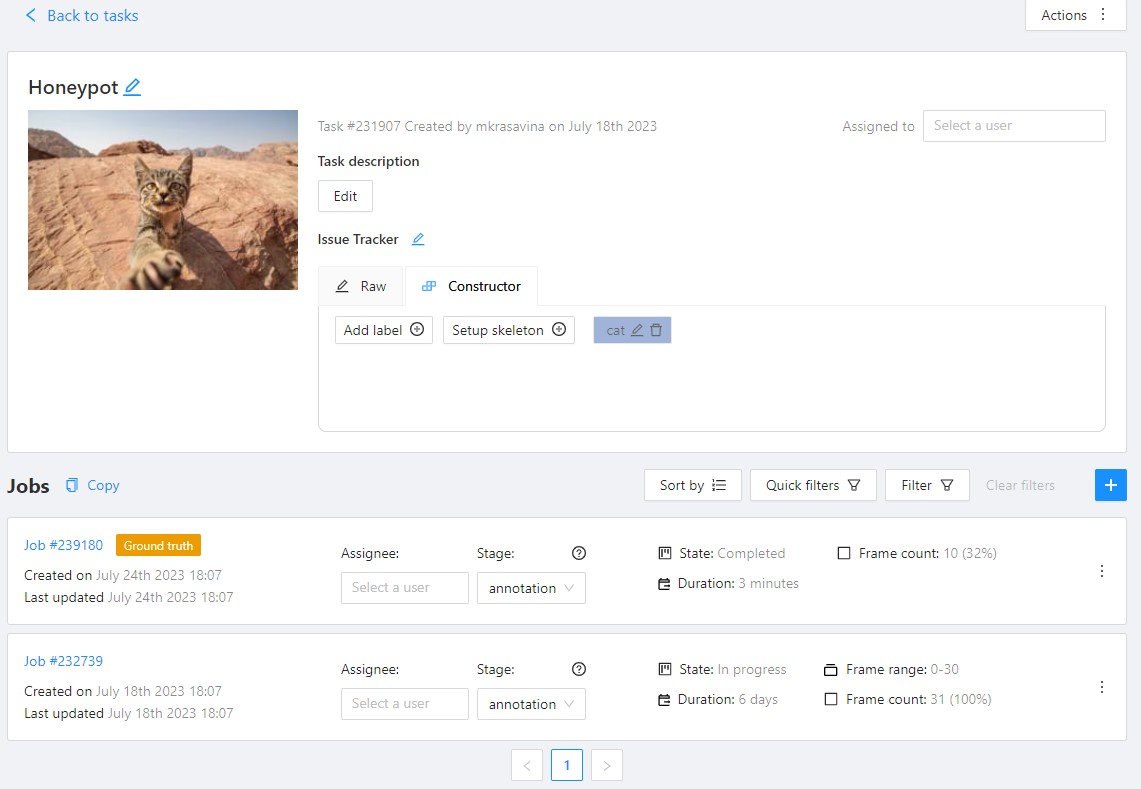

The Ground truth job will appear in the jobs list.

- Annotate frames and save your work or upload annotations.

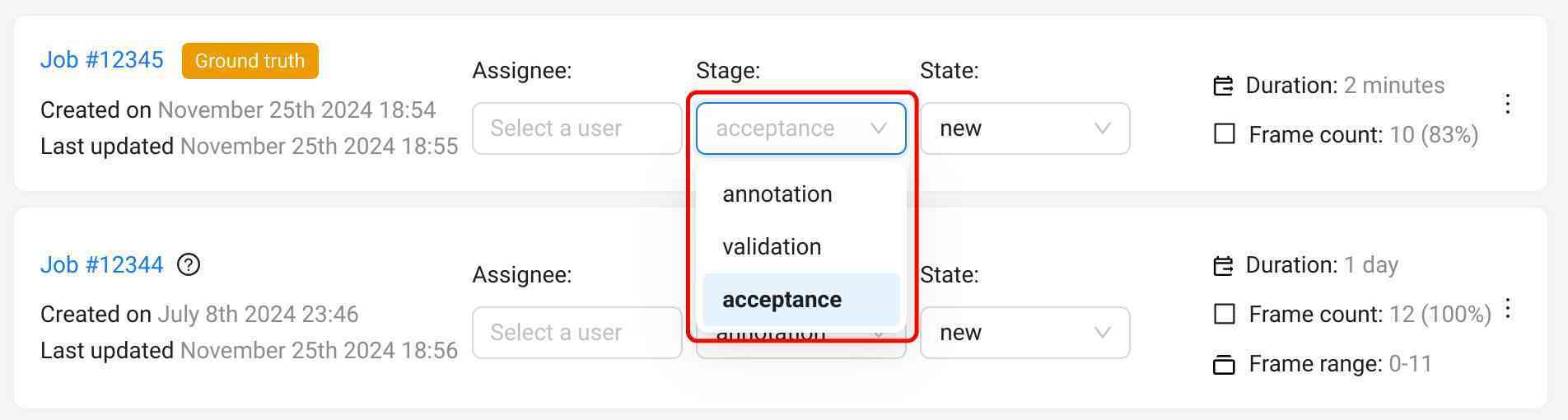

- Switch the Ground Truth job into the acceptance stage and completed state

A Ground truth job is considered configured if it is at the acceptance stage and in the completed state.

A configured Ground Truth job is required for all quality computations in CVAT.

Validation modes

Currently, there are 2 validation modes available for tasks: Ground Truth and Honeypots. These names are often used interchangeably, but in CVAT they have some differences. Both modes rely on the use of Ground Truth annotations in a task, stored in a Ground Truth job, where they can be managed.

Ground Truth

In this mode some of the task frames are selected into the validation set, represented as a separate Ground Truth job. The regular annotation jobs in the task are not affected in any way.

Ground Truth jobs can be created at the task creation automatically or manually at any moment later. They can also be removed manually at any moment. This validation mode is available for any tasks and annotations.

This is a flexible mode that can be enabled or disabled at any moment without any disruptions to the annotation process.

Frame selection

This validation mode can use several frame selection methods.

Random

This is a simple method that selects frames into the validation set randomly, representing the basic approach, described above.

Parameters:

- frame count - the number or percent of the task frames to be used for validation. Can be specified as an absolute number in the Frame count field or a percent in the Quantity field. If there are both fields on the page, they are linked, which means changing one of them will adjust the other one automatically.

- random seed - a number to be used to initialize the random number generator. Can be useful if you want to create a reproducible sequence of frames.

Random per job

This method selects frames into the validation set randomly from each annotation job in the task.

It solves one of the issues with the simple Random method that some of the jobs can get no validation frames, which makes it impossible to estimate quality in such jobs. Note that using this method can result in increased total size of the validation set.

Parameters:

- frame count per job - the number or percent of the job frames to be used for validation. This method uses segment size of the task to select the same number of validation frames in each job, if possible. Can be specified as an absolute number in the Frame count field or a percent in the Quantity per job field. If there are both fields on the page, they are linked, which means changing one of them will adjust the other one automatically.

- random seed - a number to be used to initialize the random number generator. Can be useful if you want to create a reproducible sequence of frames.
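A simplified picture of the Random per job method is given below. It is a sketch under stated assumptions rather than CVAT's algorithm: jobs are assumed to be consecutive segments of `segment_size` frames, and `pick_frames_per_job` is a hypothetical helper.

```python
import random


def pick_frames_per_job(frame_count, segment_size, frames_per_job, seed=None):
    """Select the same number of validation frames from every job, if possible.

    Jobs are modeled as consecutive segments of `segment_size` frames;
    the last segment may be shorter and then contributes fewer frames.
    """
    rng = random.Random(seed)
    selected = []
    for start in range(0, frame_count, segment_size):
        job_frames = range(start, min(start + segment_size, frame_count))
        k = min(frames_per_job, len(job_frames))
        selected.extend(sorted(rng.sample(job_frames, k)))
    return selected


# 100 frames split into 4 jobs of 25 frames, 3 validation frames per job.
validation_frames = pick_frames_per_job(100, 25, 3, seed=1)
```

Every job receives validation frames, at the cost of a validation set whose total size grows with the number of jobs.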

Honeypots

In this mode some random frames of the task are selected into the validation set. Then, validation frames are randomly mixed into regular annotation jobs. This mode can also be called “Ground Truth pool”, reflecting the way validation frames are used. This mode can only be used at task creation and cannot be changed later.

The mode has some limitations on the compatible tasks:

- It’s not possible to use it for an already existing task, the task has to be recreated.

- This mode assumes random frame ordering, so it is only available for image annotation tasks and not for ordered sequences like videos.

- Tracks are not supported in such tasks.

The validation set can be managed after the task is created - annotations can be edited, frames can be excluded and restored, and honeypot frames in the regular jobs can be changed. However, it’s not possible to select new validation frames after the task is created. The Ground truth job created for this validation mode cannot be deleted.

Parameters:

- frame count per job (%) - the percent of job frames (segment size) to be added into each annotation job from the validation set. Can be specified in the Overhead per job field.

- total frame count (%) - the percent of the task frames to be included into the validation set. This value must result in at least frame count per job * segment size frames. Can be specified in the Total honeypots field.
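The constraint between the two Honeypots parameters can be checked with simple arithmetic. The sketch below is an assumption-laden illustration: `honeypot_plan` is a hypothetical helper, and the rounding directions (up for the per-job count, down for the pool size) are choices made for this example, not documented CVAT behavior.

```python
import math


def honeypot_plan(task_frames, segment_size, overhead_per_job_pct, total_honeypots_pct):
    """Return (honeypots_per_job, validation_pool_size) or raise ValueError.

    The validation pool must contain at least as many frames as are
    mixed into a single job.
    """
    per_job = math.ceil(segment_size * overhead_per_job_pct / 100)
    pool = math.floor(task_frames * total_honeypots_pct / 100)
    if pool < per_job:
        raise ValueError(
            f"validation pool of {pool} frames is smaller than the "
            f"{per_job} honeypots required per job"
        )
    return per_job, pool


# 1000 frames, jobs of 50 frames, 10% overhead per job, 5% total honeypots.
plan = honeypot_plan(1000, 50, 10, 5)
```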

Mode summary

Here is a brief comparison of the validation modes:

| Aspect | Ground Truth | Honeypots |

|---|---|---|

| When can be used | any time | at task creation only |

| Frame management options | exclude, restore | exclude, restore, change honeypots in jobs |

| Ground Truth job management options | create, delete | create |

| Task frame requirements | - | random ordering only |

| Annotations | any | tracks are not supported |

| Minimum validation frames count | manual and random_uniform: any (but some jobs can get no validation frames); random_per_job: jobs count * GT frames per job | not less than honeypots count per job |

| Task annotation import | GT annotations and regular annotations do not affect each other | Annotations are imported both into the GT job and regular jobs. Annotations for validation frames are copied into corresponding honeypot frames. |

| Task annotation export | GT annotations and regular annotations do not affect each other | Annotations for non-validation frames are exported as is. Annotations for validation frames are taken from the GT frames. Honeypot frames are skipped. |

Choosing the right mode

Here are some examples on how to choose between these options. The general advice is to use Ground Truth for better flexibility, but keep in mind that it can require more resources for validation set annotation. Honeypots, on the other hand, can be beneficial if you want to minimize the number of validation images required, but the downside here is that there are some limitations on where this mode can be used.

Example: a video annotation with tracks. In this case there is only 1 option - the Ground Truth mode, so just use it.

Example: an image dataset annotation, image order is not important. Here you can use both options. You can choose Ground Truth for better flexibility in validation. This way, you will have the full control of validation frames in the task, annotation options won’t be limited, and the regular jobs will not be affected in any way. However, if you have a limited budget for the validation (for instance, you have only a small number of validation frames) or you want to allow more scalability (with this approach the number of validation frames doesn’t depend on the number of regular annotation jobs), it makes sense to consider using Honeypots instead.

Quality management

If a task has a validation configured, there are several options to manage validation set images. With any of the validation modes, there will be a special Ground Truth (GT) job in the task.

Validation set management

Validation frames can be managed on the task Quality Management page. Here it’s possible to check the number of validation frames, current validation mode and review the frame details. For each frame you can see the number of uses in the task. When in the Ground Truth mode, this number will be 1 for all frames. With Honeypots, these numbers can be 0, 1 or more.

Frame changes

In both validation modes it’s possible to exclude some of the validation frames from being used for validation. This can be useful if you find that some of the validation frames are “bad”, extra, or if they have incorrect annotations, which you don’t want to fix. Once a frame is marked “excluded”, it will not be used for validation. There is also an option to restore a previously excluded frame if you decide so.

There is an option to exclude or restore frames in bulk mode. To use it, select the frames needed using checkboxes, and click one of the buttons next to the table header.

Ground Truth job management

In the Ground Truth validation mode, there will be an option to remove the Ground Truth job from the task. It can be useful if you want to change validation set frames completely, add more frames, or remove some of the frames for any reason. This is available in the job Actions menu.

In the Honeypots mode, it’s not possible to add or remove the GT job, so it’s not possible to add more validation frames.

Create

A Ground Truth job can be added manually in a task without a selected validation mode or in a task with the Ground Truth validation mode, after the existing Ground Truth job is deleted manually.

Delete

To delete the Ground Truth job, do the following:

- Open the task and find the Ground Truth job in the jobs list.

- Click on three dots to open the menu.

- From the menu, select Delete.

Note: The Ground truth job in the “Honeypots” task validation mode cannot be deleted.

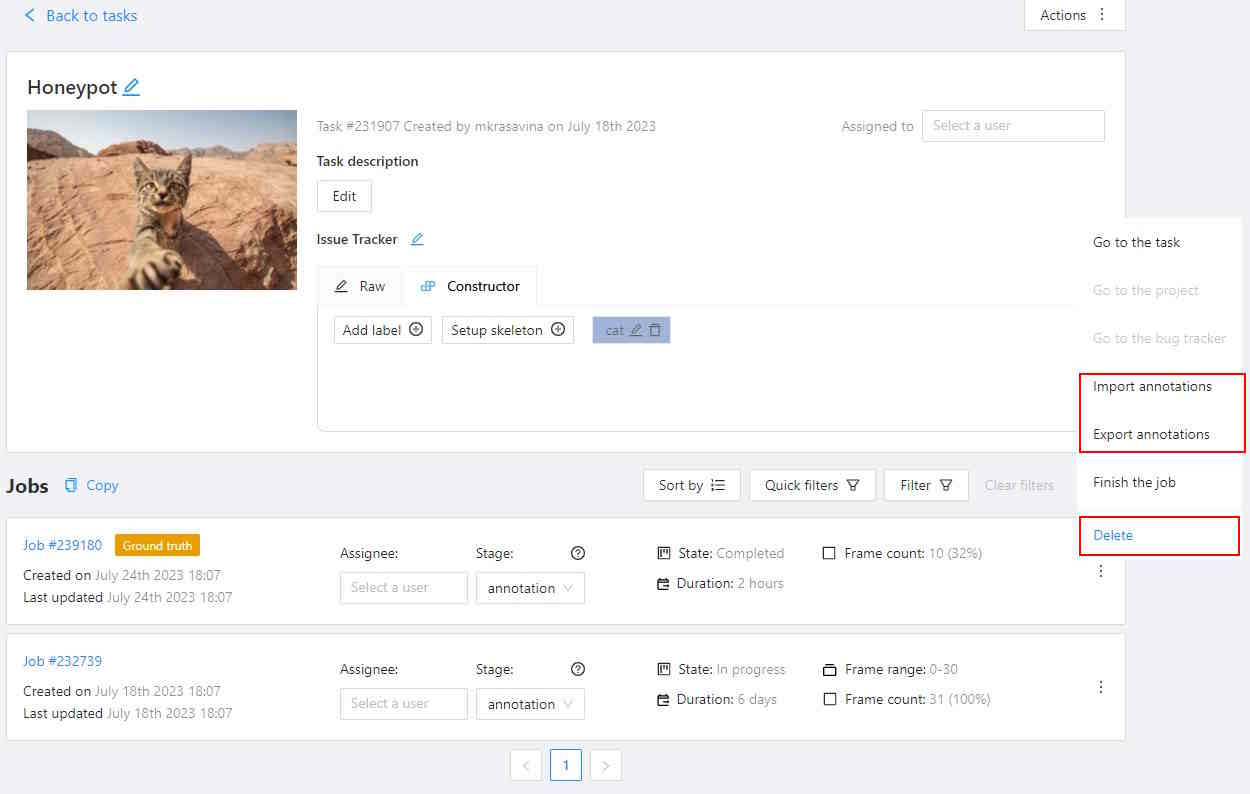

Import annotations

If you want to import annotations into the Ground truth job, do the following:

- Open the task and find the Ground truth job in the jobs list.

- Click on three dots to open the menu.

- From the menu, select Import annotations.

- Select import format and select file.

- Click OK.

Note that if there are imported annotations for the frames that exist in the task, but are not included in the Ground truth job, they will be ignored. This way, you don’t need to worry about “cleaning up” your Ground truth annotations for the whole dataset before importing them. Importing annotations for the frames that are not known in the task still raises errors.

Export annotations

To export annotations from the Ground Truth job, do the following:

- Open the task and find the Ground Truth job in the jobs list.

- Click on three dots to open the menu.

- From the menu, select Export annotations.

Annotation management

Annotations for validation frames can be displayed and edited in a special Ground Truth job in the task. You can edit the annotations manually, use auto-annotation features, import and export annotations in this job.

In the Ground Truth task validation mode, annotations of the Ground Truth job do not affect other jobs in any way. The Ground Truth job is just a separate job, which can only be changed directly. Annotations from Ground Truth jobs are not included in the dataset export. They also cannot be imported during task annotations import or with automatic annotation for the task.

In the Honeypots task validation mode, the annotations of the GT job also do not affect other jobs in any way. However, import and export of task annotations works differently. When importing task annotations, annotations for validation frames will be copied both into GT job frames and into corresponding honeypot frames in annotation jobs. When exporting task annotations, honeypot frames in annotation jobs will be ignored, and validation frames in the resulting dataset will get annotations from the GT job.

Note that this means that exporting from a task with honeypots and importing the results back will change the annotations on the honeypot frames. If you want to back up annotations, use a task backup or export job annotations instead.
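The round-trip effect described above can be illustrated with a toy model. This is only a sketch under stated assumptions: the dictionaries, frame ids, and the `export_task` / `import_task` helpers are hypothetical and do not correspond to CVAT's API or internal storage. They only mirror the rules from the text: honeypot frames are skipped on export, and validation annotations are copied into honeypot frames on import.

```python
# Toy model of the Honeypots round trip. Everything here (frame ids,
# dictionaries, helper names) is hypothetical and only illustrates the text.

def export_task(job_annotations, gt_annotations, honeypot_to_validation):
    """Export: honeypot frames are skipped, validation frames come from the GT job."""
    exported = {
        frame: list(shapes)
        for frame, shapes in job_annotations.items()
        if frame not in honeypot_to_validation
    }
    for frame, shapes in gt_annotations.items():
        exported[frame] = list(shapes)
    return exported


def import_task(dataset, job_frames, gt_frames, honeypot_to_validation):
    """Import: validation annotations go to the GT job and to each honeypot copy."""
    gt_annotations = {f: list(dataset[f]) for f in gt_frames if f in dataset}
    job_annotations = {}
    for frame in job_frames:
        # A honeypot frame takes its annotations from its validation frame.
        source = honeypot_to_validation.get(frame, frame)
        if source in dataset:
            job_annotations[frame] = list(dataset[source])
    return job_annotations, gt_annotations


# Frame 2 in a regular job is a honeypot copy of validation frame 100.
honeypot_to_validation = {2: 100}
job_annotations = {0: ["car"], 1: ["person"], 2: ["car (annotator's version)"]}
gt_annotations = {100: ["car (GT version)"]}

dataset = export_task(job_annotations, gt_annotations, honeypot_to_validation)
restored_jobs, restored_gt = import_task(
    dataset,
    job_frames=[0, 1, 2],
    gt_frames=[100],
    honeypot_to_validation=honeypot_to_validation,
)

print(sorted(dataset))   # [0, 1, 100]: honeypot frame 2 is not exported
print(restored_jobs[2])  # ['car (GT version)']: the annotator's version is gone
```

In this model the annotator's work on the honeypot frame is lost after the round trip, which is why a task backup or a job-level export is the safer way to preserve it.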

Import and export of Ground Truth job annotations works the same way in both modes.

Ground Truth jobs are included in task backups, so they can be saved and restored this way.

Import, Export, and Delete options are available from the Ground Truth job Actions menu.

Annotation quality settings

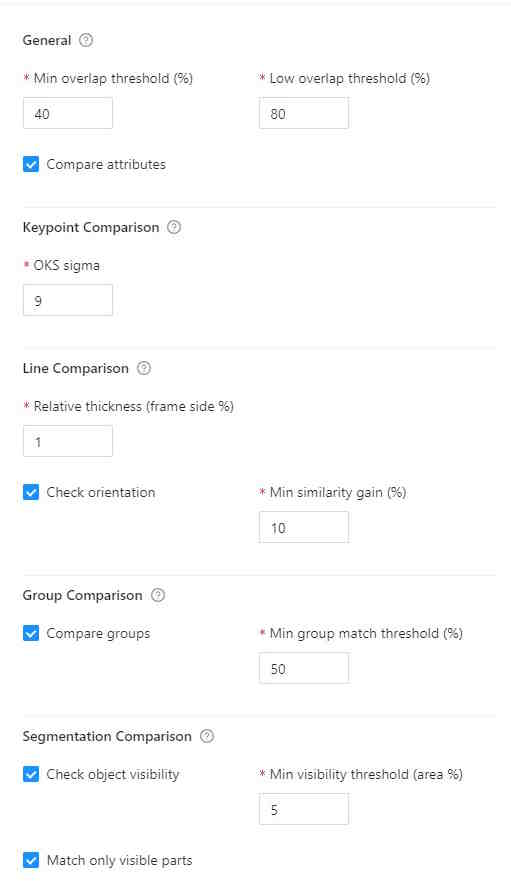

If you need to tweak some aspects of comparisons, you can do this from the Annotation Quality Settings menu.

You can configure what overlap should be considered low or how annotations must be compared.

The updated settings will take effect on the next quality update.

To open Annotation Quality Settings, find Quality report and on the right side of it, click on three dots.

The following window will open. Hover over the ? marks to understand what each field represents.

Annotation quality settings have the following parameters:

| Parameter | Description |

|---|---|

| General reporting | |

| Target metric | The primary metric used for quality estimation. It affects which metric is displayed in the UI and used for overall quality estimation. |

| Immediate feedback | |

|---|---|

| Max validations per job | Configures maximum job validations per assignment for the Immediate feedback feature. |

| Target metric threshold | Defines the minimal quality requirements in terms of the selected target metric. Serves as an acceptance threshold for the Immediate feedback feature. |

| Shape matching | |

|---|---|

| Min overlap threshold | Min overlap threshold used for the distinction between matched and unmatched shapes. Used to match all types of annotations. It corresponds to the Intersection over union (IoU) for spatial annotations, such as bounding boxes and masks. |

| Low overlap threshold | Low overlap threshold used for the distinction between strong and weak matches. Only affects Low overlap warnings. It is assumed that Min overlap threshold <= Low overlap threshold. |

| Match empty frames | Consider frames matched if there are no annotations on both the GT and the regular job frames. |

| Point and Skeleton matching | |

|---|---|

| OKS Sigma | Relative size of points. The percent of the bbox side, used as the radius of the circle around the GT point, where the checked point is expected to be. For boxes with different width and height, the “side” is computed as a geometric mean of the width and height. |

| Point matching | |

|---|---|

| Point size base | When comparing point annotations (including both separate points and point groups), the OKS sigma parameter defines a matching area for each GT point based on the object size. The point size base parameter allows configuring how to determine the object size. If set to image_size, the image size is used. Useful if each point annotation represents a separate object or boxes grouped with points do not represent object boundaries. If set to group_bbox_size, the object size is based on the point group bounding box size. Useful if each point group represents an object or there is a bbox grouped with points, representing the object size. |

| Polyline matching | |

|---|---|

| Relative thickness | Thickness of polylines, relative to the (image area) ^ 0.5. The distance to the boundary around the GT line inside of which the checked line points should be. |

| Check orientation | Indicates that polylines have direction. Used to produce Mismatching direction warnings |

| Min similarity gain (%) | The minimal gain in IoU between the given and reversed line directions to consider the line inverted. Only useful with the Check orientation parameter. |

| Group matching | |

|---|---|

| Compare groups | Enables or disables annotation group checks. This check will produce Group mismatch warnings for grouped annotations, if the annotation groups do not match with the specified threshold. Each annotation within a group is expected to match with a corresponding annotation in a GT group. |

| Min group match threshold | Minimal IoU for groups to be considered matching, used when Compare groups is enabled. |

| Mask and polygon matching | |

|---|---|

| Check object visibility | Check for partially-covered annotations. Masks and polygons will be compared to each other. |

| Min visibility threshold | Minimal visible area percent of the mask annotations (polygons, masks). Used for reporting Covered annotation warnings, useful with the Check object visibility option. |

| Match only visible parts | Use only the visible part of the masks and polygons in comparisons. |
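The relationship between the two overlap thresholds in the table can be sketched as follows. This is an illustration only: the `QualitySettings` class, its field names, the default values, and the `classify_match` helper are hypothetical and are not CVAT's API. Whether the boundaries are inclusive is also an assumption made here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualitySettings:
    """Hypothetical container for a few of the settings from the table."""
    min_overlap: float = 0.4   # "Min overlap threshold" (arbitrary example value)
    low_overlap: float = 0.8   # "Low overlap threshold" (arbitrary example value)
    point_size_base: str = "group_bbox_size"

    def __post_init__(self):
        # The table expects Min overlap threshold <= Low overlap threshold.
        if not 0.0 <= self.min_overlap <= self.low_overlap <= 1.0:
            raise ValueError("expected 0 <= min_overlap <= low_overlap <= 1")
        if self.point_size_base not in ("image_size", "group_bbox_size"):
            raise ValueError("point_size_base must be image_size or group_bbox_size")


def classify_match(overlap, settings):
    """Classify an overlap value (e.g. IoU) against the two thresholds."""
    if overlap < settings.min_overlap:
        return "unmatched"
    if overlap < settings.low_overlap:
        return "weak match"  # would produce a Low overlap warning
    return "strong match"


settings = QualitySettings()
for value in (0.3, 0.5, 0.9):
    print(value, classify_match(value, settings))
```

Keeping the validation in one place makes the expected ordering of the thresholds explicit before any comparison is run.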

Comparisons

Tags

Tags are matched by equality.

Shapes

A pair of shapes is considered matching, if both their shapes and labels match. For each shape, spatial parameters are matched first, then labels are matched.

Each shape type can have its own spatial matching details. Specifically:

- bounding box - IoU (including rotation). For example, for a pair of bounding boxes it can be visualized this way:

  IoU = intersection area / union area. The green part is the intersection, and the green, yellow and red parts together are the union.

- polygons, masks - IoU. Polygons and masks are considered interchangeable, which means a mask can be matched with a polygon and vice versa. Polygons and masks in groups are merged into a single object first. If the Match only visible parts option is enabled, objects will be cut to only the visible (non-covered) parts, which are determined by the shape z-order.

- skeletons - The OKS metric from the COCO dataset is used. Briefly, each skeleton point gets a circular area around it, determined by the object size (bounding box side) and relative point size (sigma) values, where this point can be matched with the specified probability. If a bounding box is grouped with the skeleton, it is used for object size computation, otherwise a bounding box of visible points of the skeleton is used.

  For example, consider a skeleton with 6 points and a square bounding box attached:

  In this example, the Sigma parameter is 0.05 (5%) of the bbox side. Areas shown in green cover ~68.2% (1 sigma) of the points corresponding to each GT point. A point on the boundary of such an area has a ~88% probability of being correct. The blue-colored zone contains ~95% (2 sigma) of the correct points for the corresponding GT point. A point on the boundary of such an area has a ~60% probability of being correct. These probabilities are then averaged over the visible points of the skeleton, and the resulting value is compared against the Min overlap threshold to determine whether the skeletons match. Sigma corresponds to the standard deviation (sigma) of the normal distribution.

- points - The OKS metric is used for each point group annotation. Same as for skeletons, OKS Sigma determines relative point sizes. The Point size base setting allows configuring whether points in point groups should use the group bounding box or the image space. Using image space for object size can be useful if you want to treat each point as a separate annotation.

- polylines - A pair of lines is considered matching if all the points of one line lie within a “hull” of the other one. The “hull” is determined as the area around the polyline, as if the line had some “thickness”. For example, the black polyline can have a hull shown in the green color:

  The line thickness can be configured via the Relative thickness setting. The value is relative to the image side and determines a half of the hull width.

- ellipses - IoU, described in more detail above.
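The IoU formula from the list above can be sketched for the simplest case. This is a minimal illustration for axis-aligned boxes only; it does not handle rotation, and the `box_iou` helper and the `(x1, y1, x2, y2)` box layout are assumptions made for this example rather than CVAT code.

```python
def box_iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    # Corners of the intersection rectangle (may be empty).
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    intersection = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - intersection

    return intersection / union if union > 0 else 0.0


# Two 2x2 boxes overlapping in a 1x1 square: IoU = 1 / (4 + 4 - 1) = 1/7.
print(round(box_iou((0, 0, 2, 2), (1, 1, 3, 3)), 4))  # 0.1429
```

The resulting value is what would be compared against the Min overlap threshold to decide whether two shapes match.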

Note: 2D cuboids are not supported.
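The OKS-based comparison used for skeletons and points can be sketched as follows. This is a minimal sketch, assuming the COCO convention in which the per-point constant is `k = 2 * sigma`; under that assumption the formula reproduces the ~88% and ~60% boundary values quoted in the skeleton example. The function names are hypothetical, and visibility handling is reduced to passing only visible point pairs.

```python
import math


def point_similarity(distance, object_side, sigma):
    """COCO-style similarity of one point: exp(-d^2 / (2 * s^2 * k^2)), k = 2 * sigma."""
    k = 2 * sigma  # assumption: COCO convention for the per-point constant
    return math.exp(-(distance ** 2) / (2 * object_side ** 2 * k ** 2))


def skeleton_oks(gt_points, checked_points, bbox_width, bbox_height, sigma):
    """Average point similarity over pairs of visible points."""
    # For non-square boxes the "side" is the geometric mean of width and height.
    side = math.sqrt(bbox_width * bbox_height)
    similarities = [
        point_similarity(math.dist(gt, checked), side, sigma)
        for gt, checked in zip(gt_points, checked_points)
    ]
    return sum(similarities) / len(similarities)


side, sigma = 100.0, 0.05
print(round(point_similarity(1 * sigma * side, side, sigma), 2))  # 0.88 (1 sigma)
print(round(point_similarity(2 * sigma * side, side, sigma), 2))  # 0.61 (2 sigma)
```

The averaged value returned by `skeleton_oks` plays the same role as IoU for boxes: it is the number compared against the matching threshold.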

Tracks

Tracks are split into separate shapes and compared on a per-frame basis with other tracks and shapes.

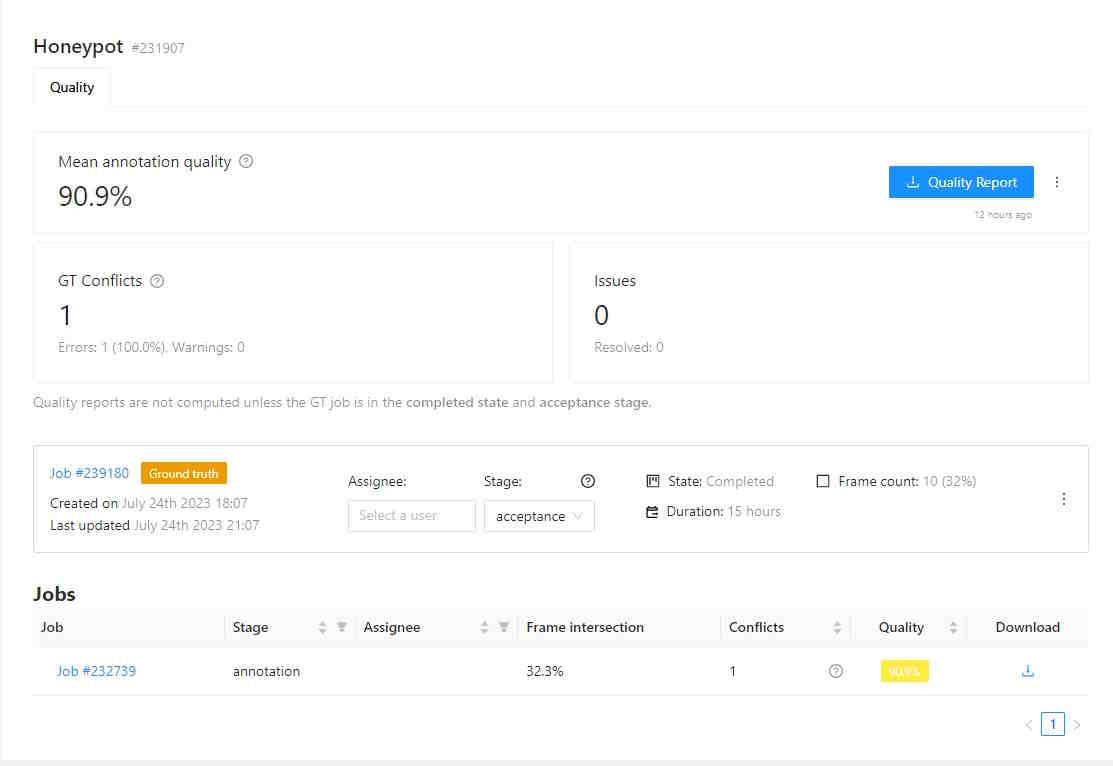

Quality Analytics

Note: quality analytics is a paid feature. Please check how to get access to this functionality in the Paid features section of the site.

Once the quality estimation is enabled in a task and the Ground Truth job is configured, quality analytics becomes available for the task and its jobs.

When you open the Quality Analytics page, it displays quality metrics from the most recent quality estimation. If it’s your first time accessing the page, no quality report will be available yet. The date of the last computation is shown next to the report download button.

To request an update of quality metrics in a task (e.g. after the settings were changed), press the Refresh button on the task Quality Management > Analytics page.

Note that quality calculation may take up to several hours, depending on the amount of data and labeled objects, and the metrics are not updated immediately after task updates.

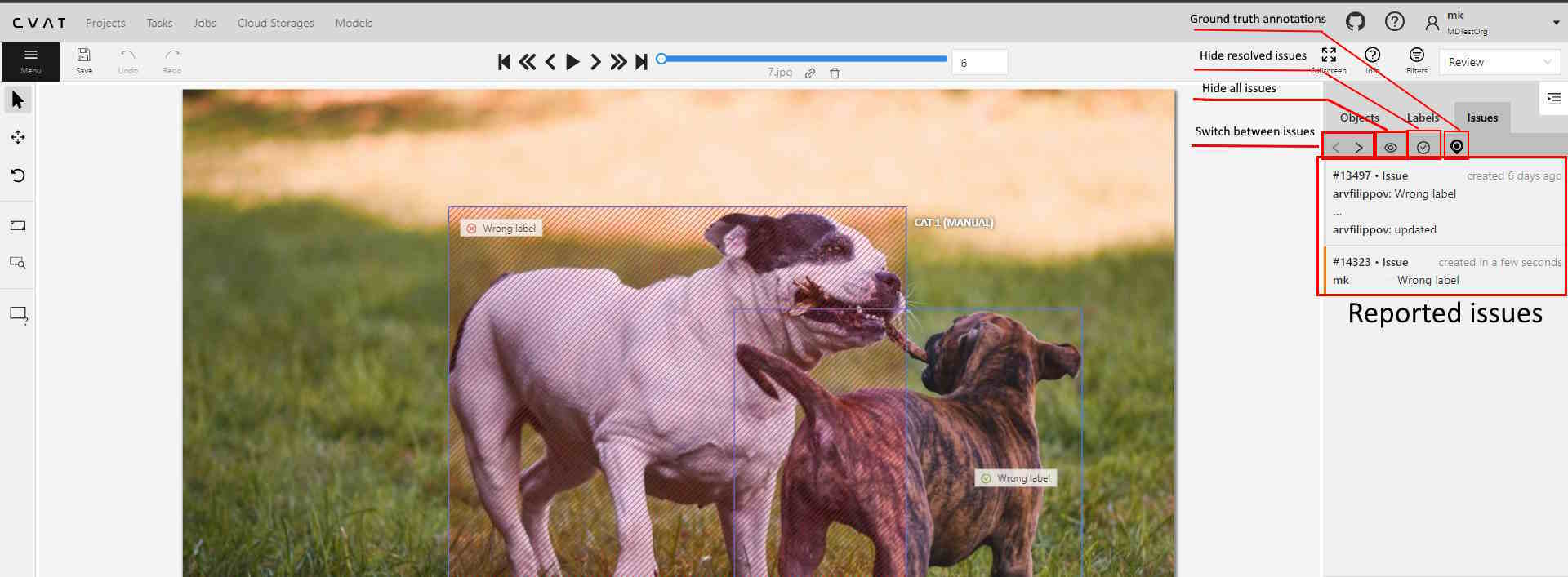

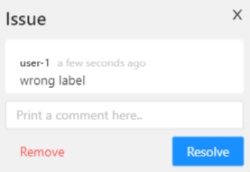

Once quality metrics are computed, they are available for detailed review on this page. Conflicts can be reviewed in the Review mode of jobs. A job must have at least 1 validation frame (shown in the Frame intersection column) to be included in quality computation.

Analytics page contents

The Analytics page has the following elements:

| Field | Description |

|---|---|

| Mean annotation quality | Displays the average quality of annotations, which includes: counts of the accurate annotations, total task annotations, ground truth annotations, accuracy, precision, and recall. The currently selected Target metric is displayed as the primary score. |

| GT Conflicts | Conflicts identified during quality assessment, including extra or missing annotations. Mouse over the ? icon for a detailed conflict report on your dataset. |

| Issues | Number of opened issues. If no issues were reported, 0 will be shown. |

| Quality report | Quality report in JSON format. |

| Ground truth job data | Information about ground truth job, including date, time, and number of issues. |

| List of jobs | List of all the jobs in the task. |
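The accuracy, precision, and recall values listed under Mean annotation quality can be related to the annotation counts with a short sketch. The formulas below are the conventional definitions and are an assumption here: the exact computation used in the quality report may differ, and the function and argument names are hypothetical.

```python
def quality_metrics(valid_count, task_count, gt_count):
    """Conventional metrics from counts of matched, task, and GT annotations."""
    # Every annotation that appears in either set, counting matched pairs once.
    total_count = task_count + gt_count - valid_count

    def ratio(numerator, denominator):
        return numerator / denominator if denominator else 0.0

    return {
        "precision": ratio(valid_count, task_count),  # share of task annotations that are correct
        "recall": ratio(valid_count, gt_count),       # share of GT annotations that were found
        "accuracy": ratio(valid_count, total_count),  # correct share of all annotations
    }


# 80 matched annotations, 100 in the regular jobs, 90 in the Ground Truth job.
metrics = quality_metrics(valid_count=80, task_count=100, gt_count=90)
print({name: round(value, 3) for name, value in metrics.items()})
# {'precision': 0.8, 'recall': 0.889, 'accuracy': 0.727}
```

Which of these values is shown as the primary score depends on the Target metric chosen in the annotation quality settings.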

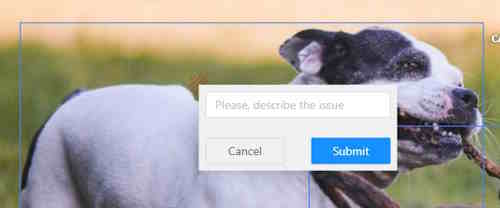

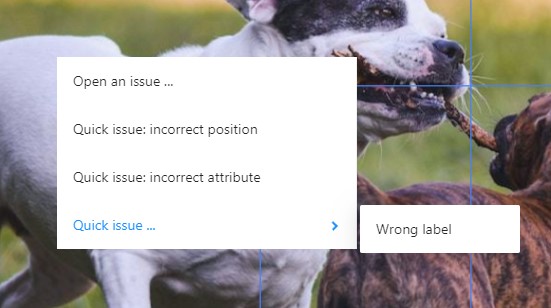

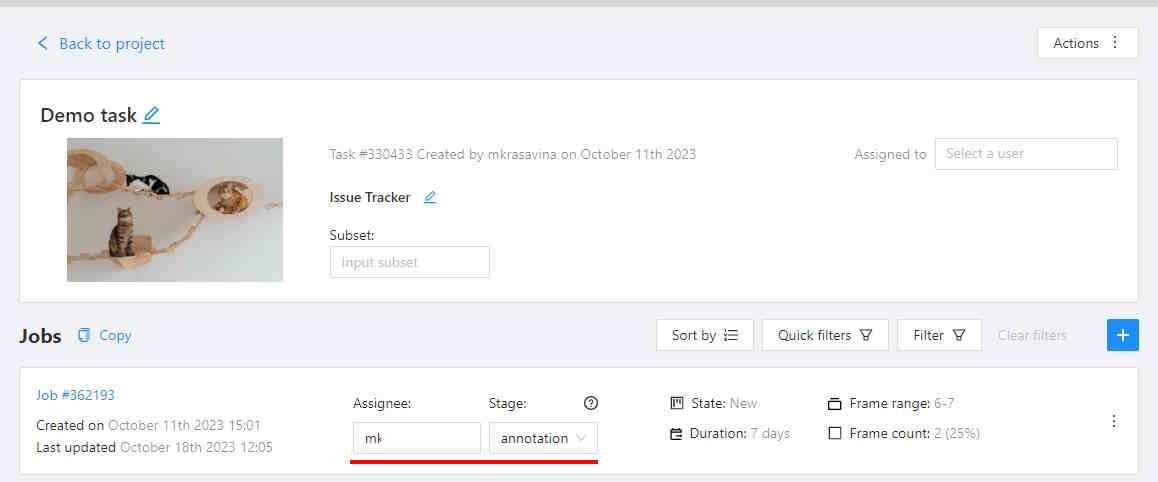

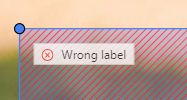

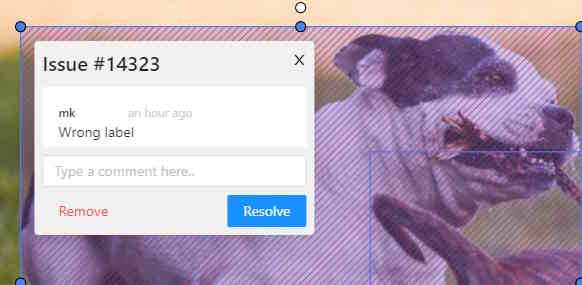

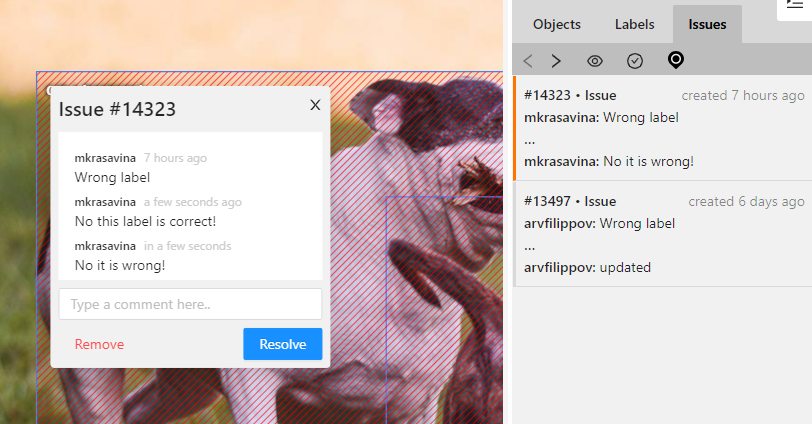

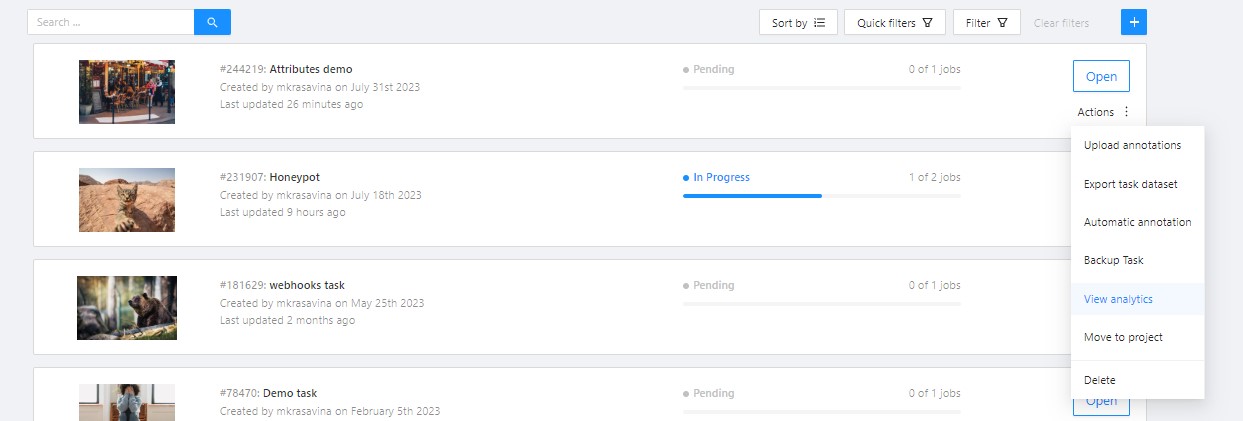

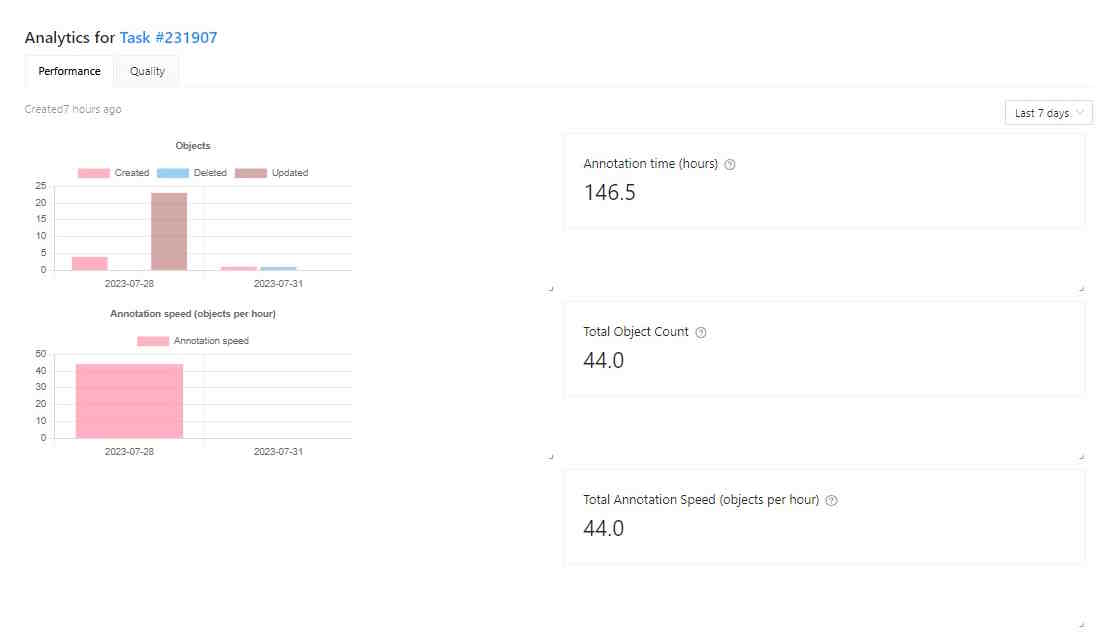

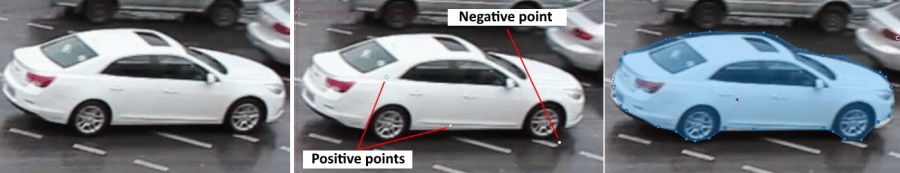

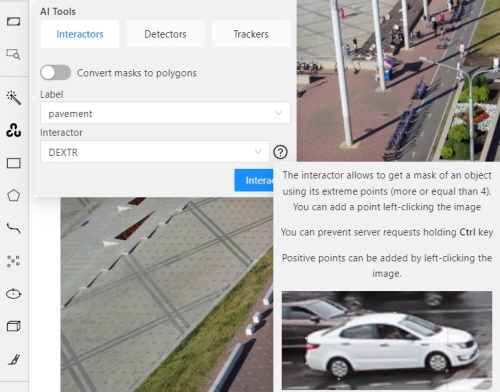

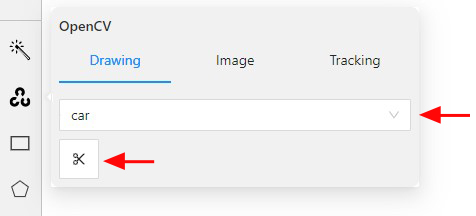

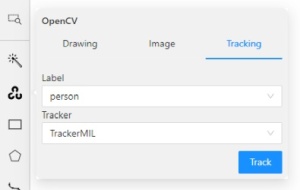

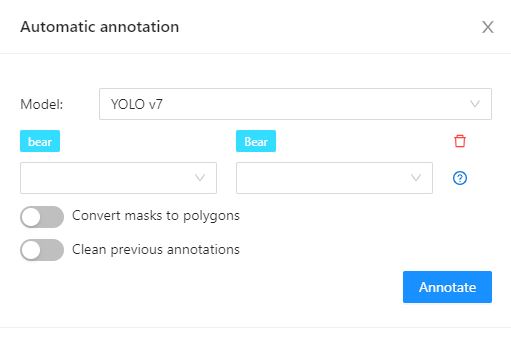

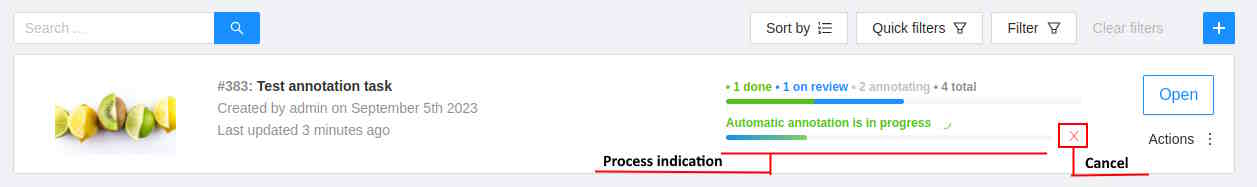

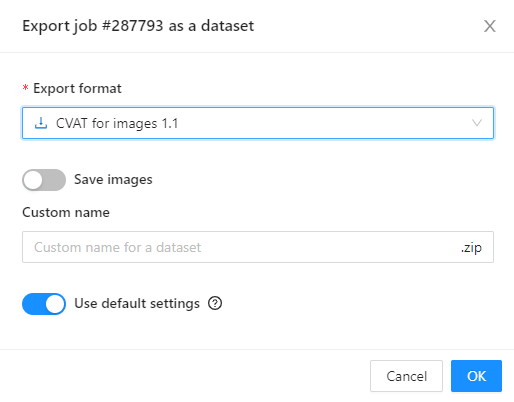

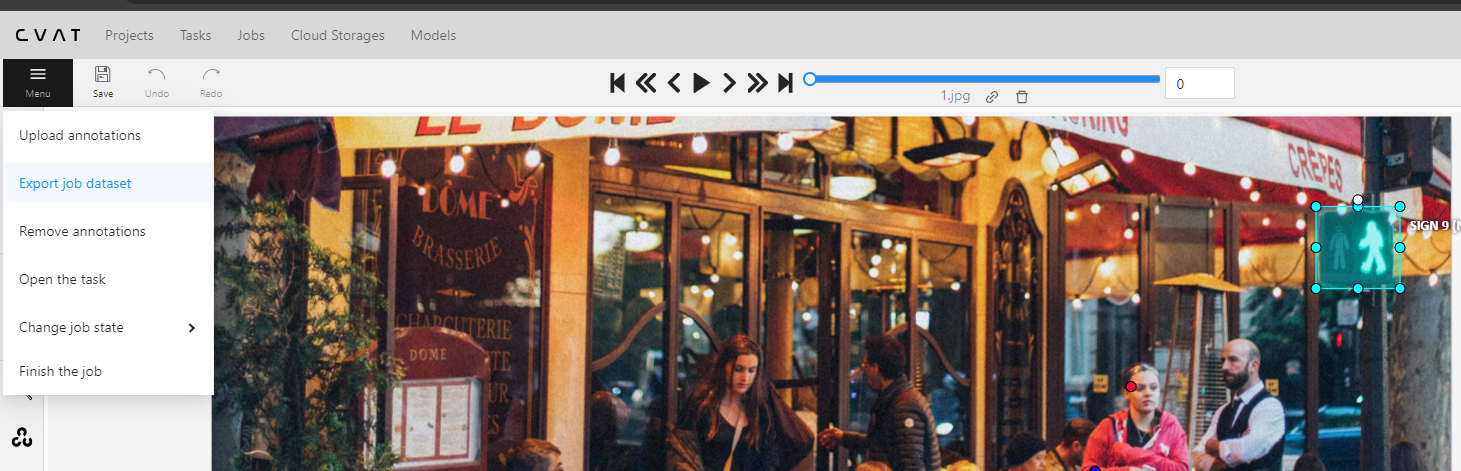

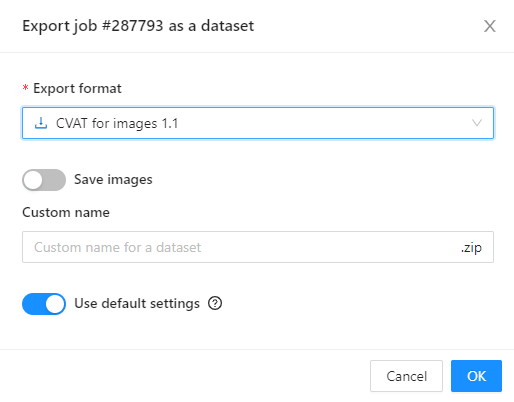

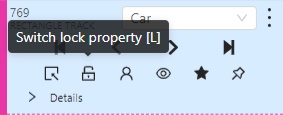

Problem Reporting